Overview¶

Open Policy Agent (OPA) is a general-purpose policy engine that unifies policy enforcement across the stack. OPA provides a high-level declarative language that lets you specify policy as code, and simple APIs to offload policy decision-making from your software. You can use OPA to enforce policies in Kubernetes.

Gatekeeper¶

Gatekeeper provides a Kubernetes admission controller built around the OPA engine to integrate OPA with the Kubernetes API server. Although there are other ways to integrate OPA with Kubernetes, Gatekeeper has the following capabilities that make it more Kubernetes-native:

- An extensible, parameterized policy library

- Native Kubernetes CRDs for instantiating the policy library (aka "constraints")

- Native Kubernetes CRDs for extending the policy library (aka "constraint templates")

- Audit functionality

Rego¶

OPA policies are expressed in a high-level declarative language called Rego. Rego is purpose-built for expressing policies over complex hierarchical data structures.
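To make this concrete, here is a minimal sketch of a Gatekeeper constraint template that embeds a Rego rule, written to a local file from the shell. It is modeled on the widely used "required labels" example. The file name `required-labels-template.yaml`, the template name, and the `v1beta1` API version are assumptions chosen for illustration and are not part of this tutorial's workflow.

```shell
# Write an illustrative ConstraintTemplate to a local file (hypothetical name).
# The quoted 'EOF' stops the shell from expanding anything inside the manifest.
cat > required-labels-template.yaml <<'EOF'
apiVersion: templates.gatekeeper.sh/v1beta1   # assumed API version
kind: ConstraintTemplate
metadata:
  name: k8srequiredlabels
spec:
  crd:
    spec:
      names:
        kind: K8sRequiredLabels
      validation:
        openAPIV3Schema:
          properties:
            labels:
              type: array
              items:
                type: string
  targets:
    - target: admission.k8s.gatekeeper.sh
      rego: |
        package k8srequiredlabels

        # Report a violation when any required label is absent from the object.
        violation[{"msg": msg, "details": {"missing_labels": missing}}] {
          provided := {label | input.review.object.metadata.labels[label]}
          required := {label | label := input.parameters.labels[_]}
          missing := required - provided
          count(missing) > 0
          msg := sprintf("you must provide labels: %v", [missing])
        }
EOF
```

Once Gatekeeper is running on a cluster, a file like this could be applied with `kubectl apply -f required-labels-template.yaml`. A separate constraint of kind `K8sRequiredLabels` would then supply the `labels` parameter.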

What Will You Do¶

In this exercise,

- You will create a cluster blueprint with a "gatekeeper" addon

- You will then apply this cluster blueprint to a managed cluster

Important

This tutorial describes the steps to create and use a Gatekeeper-based blueprint using the Web Console. The entire workflow can also be fully automated and embedded into an automation pipeline.

Assumptions¶

- You have already provisioned or imported a Kubernetes cluster using the controller

Step 1: Download YAML¶

Download the YAML file from Gatekeeper's official repository.

```shell
curl -o gatekeeper.yaml https://raw.githubusercontent.com/open-policy-agent/gatekeeper/master/deploy/gatekeeper.yaml
```
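Before uploading the file, it can be worth checking that the download is a Kubernetes manifest rather than an error page. The sketch below is one way to do that: it counts the top-level `kind:` entries in the file. The helper name `summarize_kinds` is hypothetical and not part of any tool used in this tutorial.

```shell
# summarize_kinds FILE
# Print how many objects of each top-level "kind" the manifest defines.
# Only unindented "kind:" lines are counted, so kinds nested inside CRD
# specs are ignored.
summarize_kinds() {
  grep -E '^kind:' "$1" | awk '{print $2}' | sort | uniq -c | awk '{print $2 ": " $1}'
}

# Only inspect the download if it is present in the current directory.
if [ -f gatekeeper.yaml ]; then
  summarize_kinds gatekeeper.yaml
fi
```

A valid download should list kinds such as `Namespace`, `CustomResourceDefinition`, and `Deployment`. Empty output suggests the file is not the expected manifest.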

Step 2: Create Addon¶

- Log in to the Web Console and navigate to your Project as an Org Admin or Infrastructure Admin

- Under Infrastructure, select "Namespaces" and create a new namespace called "gatekeeper-system"

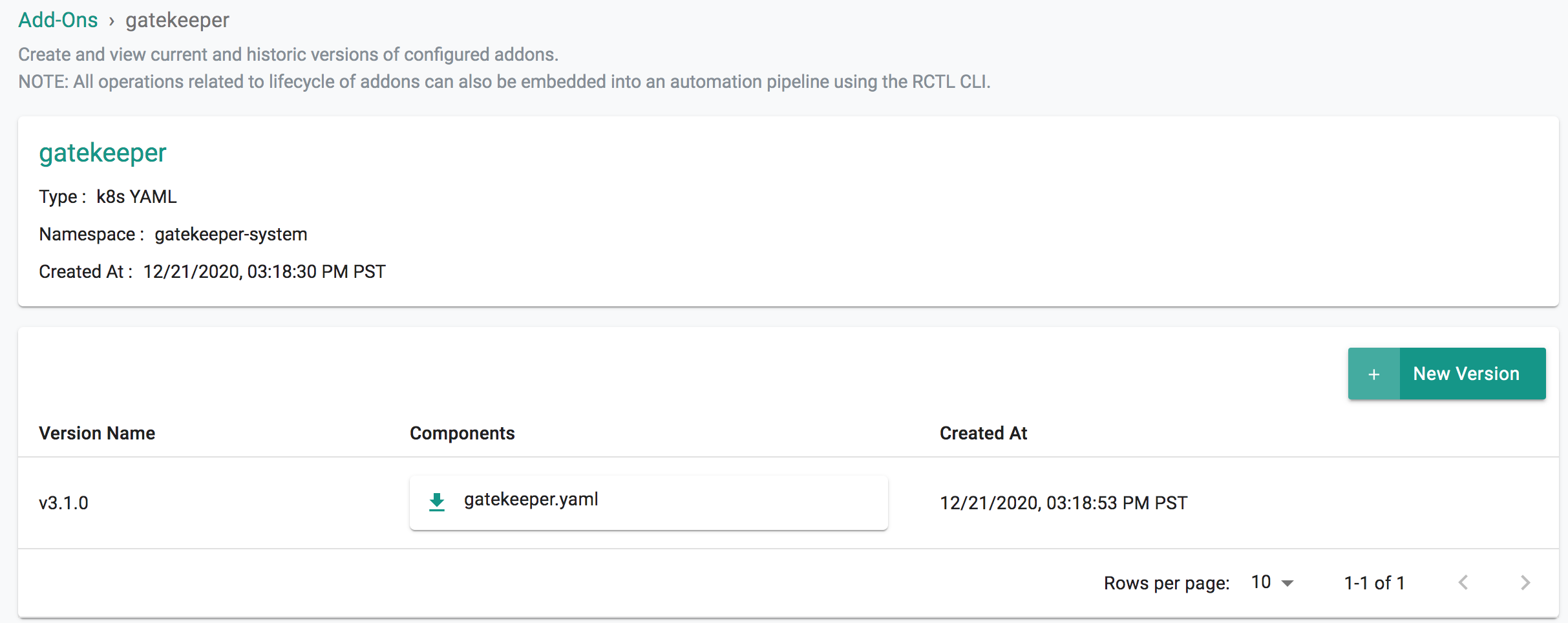

- Select "Addons" and "Create" a new Addon called "gatekeeper"

- Ensure that you select "K8s YAML" for type and select the namespace as "gatekeeper-system"

- Click "CREATE" to go to the next step

- Select "New Version" and name it "v3.1.0"

- Select "Upload" and choose the gatekeeper.yaml file downloaded in the previous step

- Click "SAVE CHANGES"

Step 3: Create Blueprint¶

Now, we are ready to assemble a custom cluster blueprint using this addon.

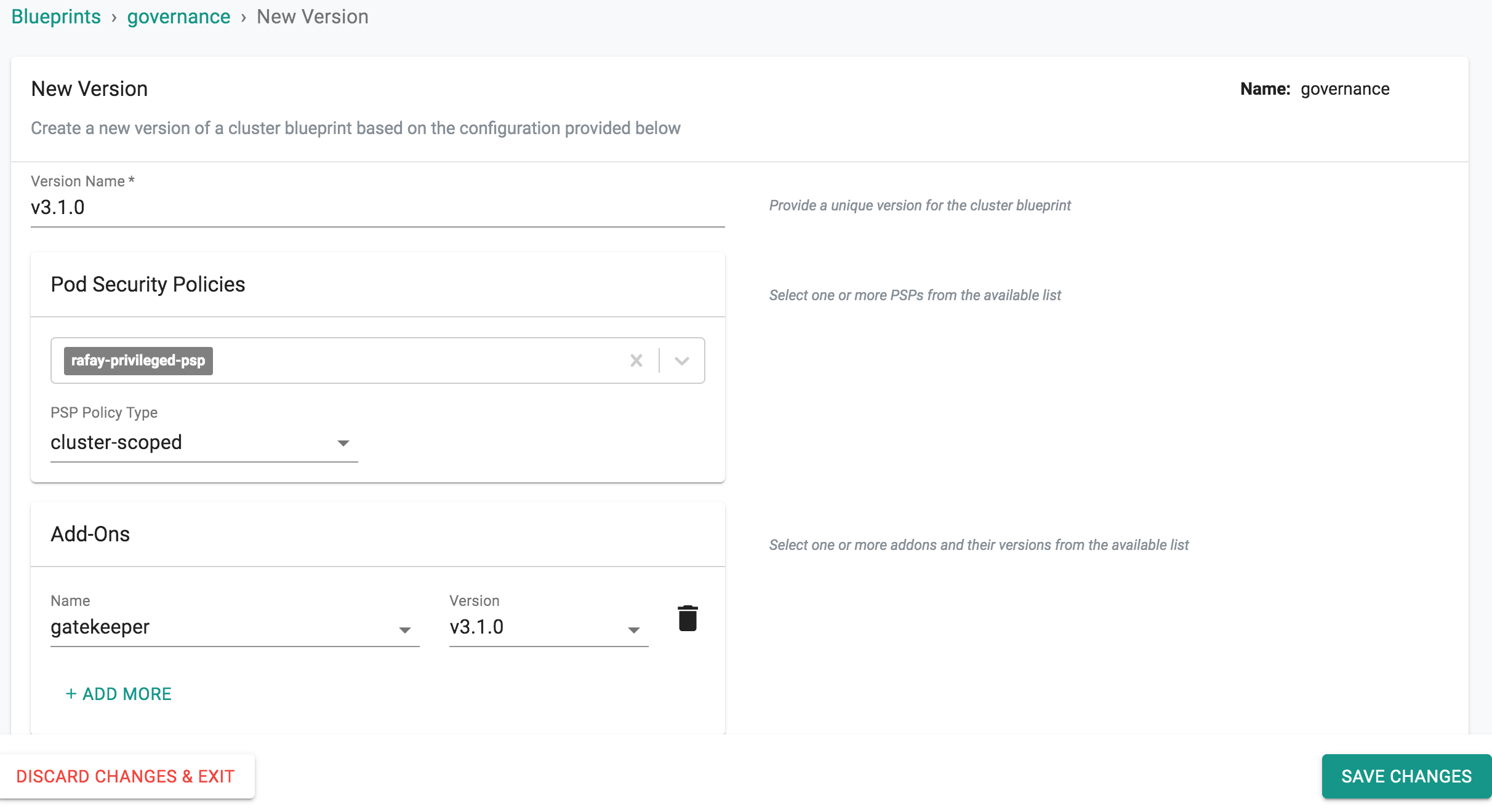

- Under Infrastructure, select "Blueprints"

- Create a new blueprint and give it a name such as "governance"

- Select "New Version" and give it a version name, for example "v3.1.0"

- Under Add-Ons, select "ADD MORE" and choose the "gatekeeper" addon created in the previous step

- Click "SAVE CHANGES"

Step 4: Apply Blueprint¶

Now, we are ready to apply this blueprint to a cluster.

- Click on Options for the target Cluster in the Web Console

- Select "Update Blueprint", choose the "governance" blueprint from the dropdown, and choose "v3.1.0" from the version dropdown

- Click on "Save and Publish".

This starts the deployment of the addons configured in the "governance" blueprint to the target cluster. The blueprint sync process can take a few minutes. Once it completes, the cluster displays the current blueprint details and whether the sync succeeded.

Step 5: Verify Deployment¶

You can optionally verify that the correct resources have been created on the cluster.

- Click on the Kubectl button on the cluster to open a virtual terminal

First, verify that the "gatekeeper-system" namespace has been created:

```shell
kubectl get ns gatekeeper-system
```

Next, verify that the pods in the "gatekeeper-system" namespace are healthy:

```shell
kubectl get po -n gatekeeper-system
```
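If you would rather block until the pods are ready than poll by hand, `kubectl wait` can do that. The following is a minimal sketch. The function name `wait_for_gatekeeper` and the 120-second default timeout are arbitrary choices for illustration, and the function assumes a shell where `kubectl` already has access to the cluster.

```shell
# wait_for_gatekeeper [TIMEOUT]
# Block until every pod in the gatekeeper-system namespace reports Ready,
# or return non-zero once the timeout (default: 120s, an assumption) elapses.
wait_for_gatekeeper() {
  kubectl wait --namespace gatekeeper-system \
    --for=condition=Ready pods --all \
    --timeout="${1:-120s}"
}
```

For example, `wait_for_gatekeeper 60s` waits up to one minute and returns a non-zero status if any pod is still not Ready.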

Gatekeeper creates a number of Custom Resource Definitions (CRDs) on the cluster. You can list them by running the following kubectl command.

```shell
kubectl get crd | grep gatekeeper
configs.config.gatekeeper.sh                         2020-08-22T01:18:56Z
constraintpodstatuses.status.gatekeeper.sh           2020-08-22T01:18:58Z
constrainttemplatepodstatuses.status.gatekeeper.sh   2020-08-22T01:19:01Z
constrainttemplates.templates.gatekeeper.sh          2020-08-22T01:19:04Z
```
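The same check can be scripted instead of read by eye. The sketch below compares a CRD listing against the four names shown above and reports any that are absent. The helper name `missing_gatekeeper_crds` is hypothetical, and the expected list reflects only the output in this tutorial, so other Gatekeeper versions may install a different set.

```shell
# missing_gatekeeper_crds
# Reads CRD names from standard input, one per line, in the form printed by
# `kubectl get crd -o name`, and prints each expected Gatekeeper CRD that is
# absent. Prints nothing when all four are present.
missing_gatekeeper_crds() {
  present=$(cat)
  for crd in \
    configs.config.gatekeeper.sh \
    constraintpodstatuses.status.gatekeeper.sh \
    constrainttemplatepodstatuses.status.gatekeeper.sh \
    constrainttemplates.templates.gatekeeper.sh
  do
    # -x matches whole lines only; -F treats the name as a literal string.
    printf '%s\n' "$present" \
      | grep -qxF "customresourcedefinition.apiextensions.k8s.io/$crd" \
      || echo "missing: $crd"
  done
}
```

A typical invocation would be `kubectl get crd -o name | missing_gatekeeper_crds`.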

Recap¶

Congratulations! You have successfully created a custom cluster blueprint with the "gatekeeper" addon and applied it to a cluster.