Workflow

Important

Cluster overrides are now scoped to the project: overrides configured in a project apply only to clusters within that project and any projects they are shared with.

By default, Kubernetes objects require certain values in their specs to match the configuration of the cluster they run on. Handling this for a single workload across a fleet of clusters would otherwise mean maintaining many near-duplicate workloads. To avoid this, the platform supports cluster overrides: values that you define once and that are applied to the Kubernetes objects in a workload as they are deployed via the controller. This lets you use a single workload org-wide and override its values when the workload is deployed.

Platform resources such as addons and workloads carry labels, which are added either by the platform or by the end user. These labels are used as selectors for both the resource the override applies to and the clusters where the override is applied.
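As a mental model of the selector matching described above, the sketch below checks a `key=value` selector against a set of labels. It is not the platform's implementation: the `parse_selector` and `matches` helpers and the sample label values are hypothetical, and only the simple equality form of a selector is assumed.

```python
def parse_selector(selector: str) -> tuple[str, str]:
    """Split a 'key=value' selector into its key and value."""
    key, sep, value = selector.partition("=")
    if not sep or not key.strip():
        raise ValueError(f"expected 'key=value', got {selector!r}")
    return key.strip(), value.strip()


def matches(selector: str, labels: dict[str, str]) -> bool:
    """Return True when the labels contain the selector's key with the same value."""
    key, value = parse_selector(selector)
    return labels.get(key) == value


# Hypothetical label sets, for illustration only.
workload_labels = {"rafay.dev/name": "aws-lb-controller"}
cluster_labels = {"env": "prod", "region": "us-west-1"}

# An override takes effect only when both selectors match.
resource_selected = matches("rafay.dev/name=aws-lb-controller", workload_labels)
cluster_selected = matches("env=prod", cluster_labels)
override_applies = resource_selected and cluster_selected
```

The point of the two-sided check is that an override is tied to a resource and to a set of clusters at the same time, so changing a cluster's labels can bring it in or out of scope without editing the workload.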

Important

You can manage the lifecycle of cluster overrides using the Web Console, the RCTL CLI, or the REST APIs. It is strongly recommended to automate this by integrating RCTL with your existing CI-based automation pipeline.

In the example below, we use the AWS Load Balancer Controller workload that we created through the platform. The workload, called "aws-lb-controller", is configured with the Helm chart provided by AWS. The chart requires "clusterName" to be set before it is deployed, but by default the value is left blank inside the chart. We will use a cluster override to set the value when the chart is deployed.

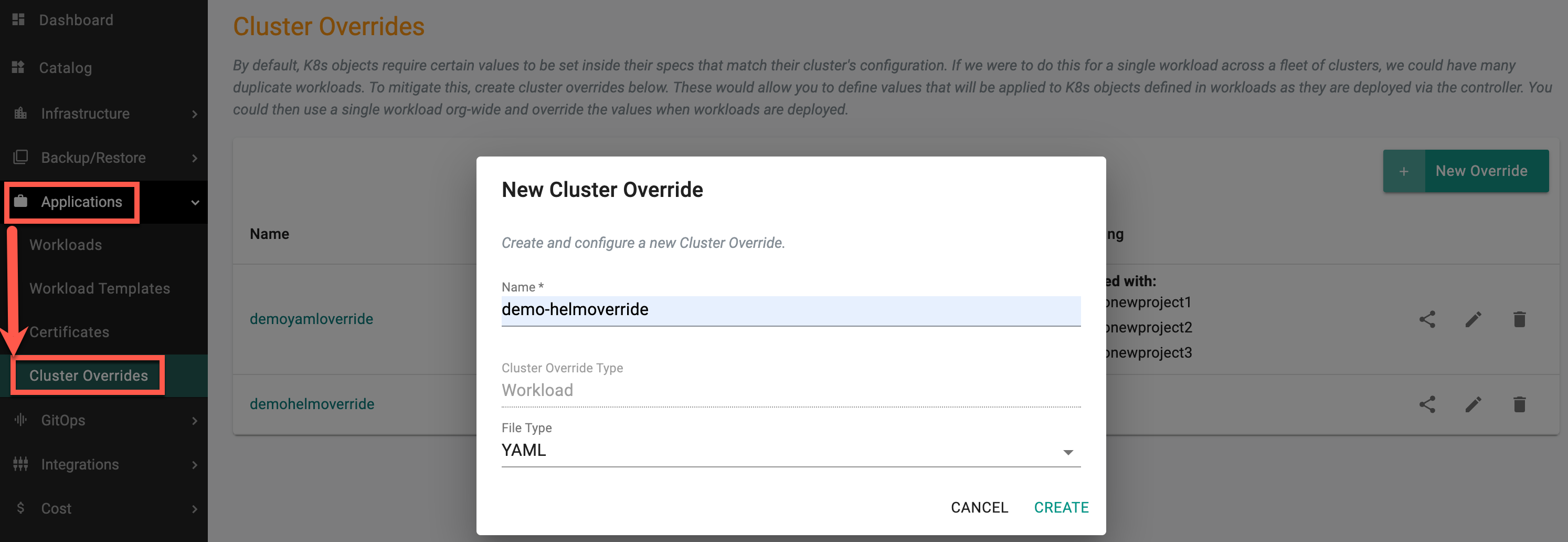

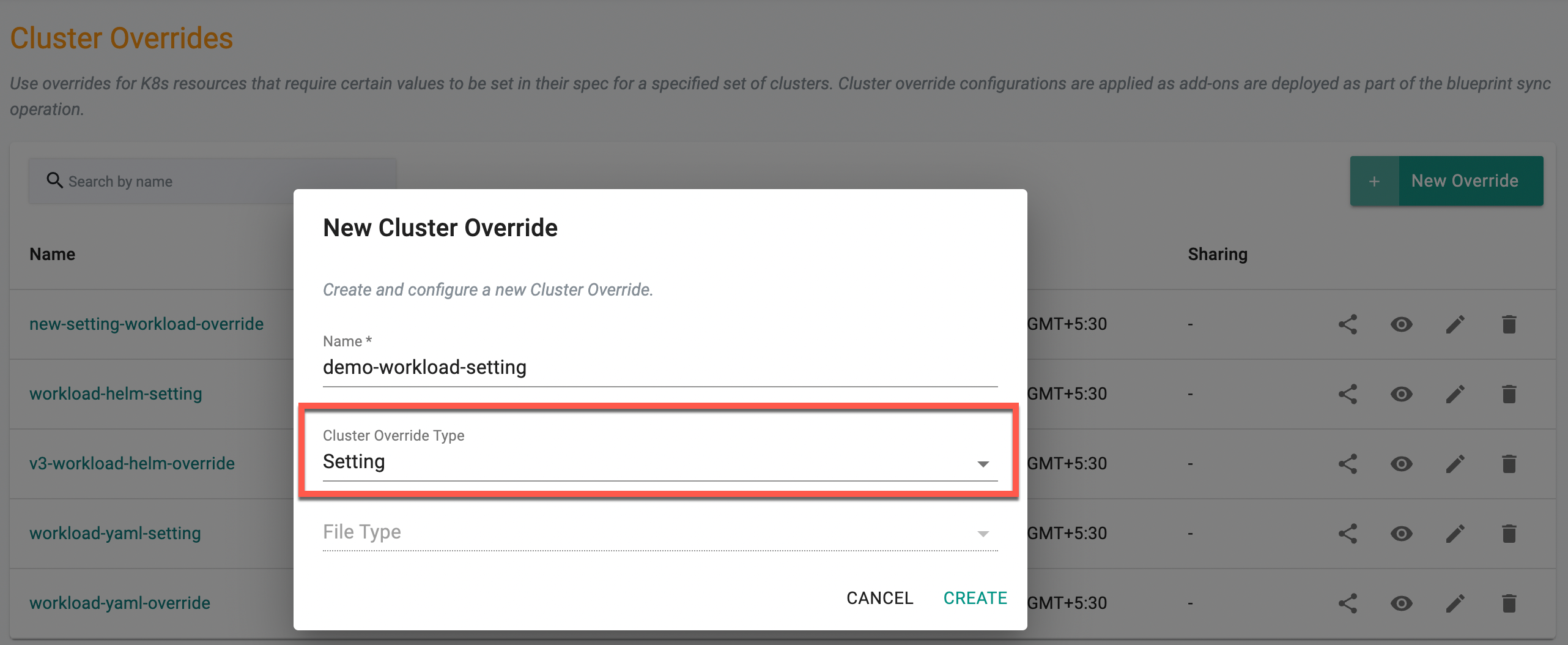
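To make the effect of the override concrete, the following sketch models how an override value could be layered over a chart's default values, in the same spirit as Helm merging user-supplied values over chart defaults. The `merge_values` helper and the trimmed `chart_defaults` dictionary are assumptions for illustration; how the platform actually injects the override is not specified here.

```python
from copy import deepcopy


def merge_values(defaults: dict, overrides: dict) -> dict:
    """Recursively merge overrides into a copy of defaults; overrides win."""
    merged = deepcopy(defaults)
    for key, value in overrides.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = merge_values(merged[key], value)
        else:
            merged[key] = deepcopy(value)
    return merged


# Hypothetical subset of the chart defaults; the real chart has many more keys.
chart_defaults = {"clusterName": "", "image": {"tag": "v2.1.3"}}
cluster_override = {"clusterName": "my-cluster-name"}

effective = merge_values(chart_defaults, cluster_override)
print(effective["clusterName"])  # my-cluster-name
```

Keys that the override does not mention keep their chart defaults, which is why a single workload definition can be reused across clusters.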

Step 1: Create Cluster Override

As an Admin in the Web Console,

- Navigate to the Project

- Click on Cluster Overrides under Workloads

- Click on New Override and provide a name. By default, the Cluster Override Type is set to Workload

- Select the required File Type and click Create

Both Helm and Yaml types are supported for overrides.

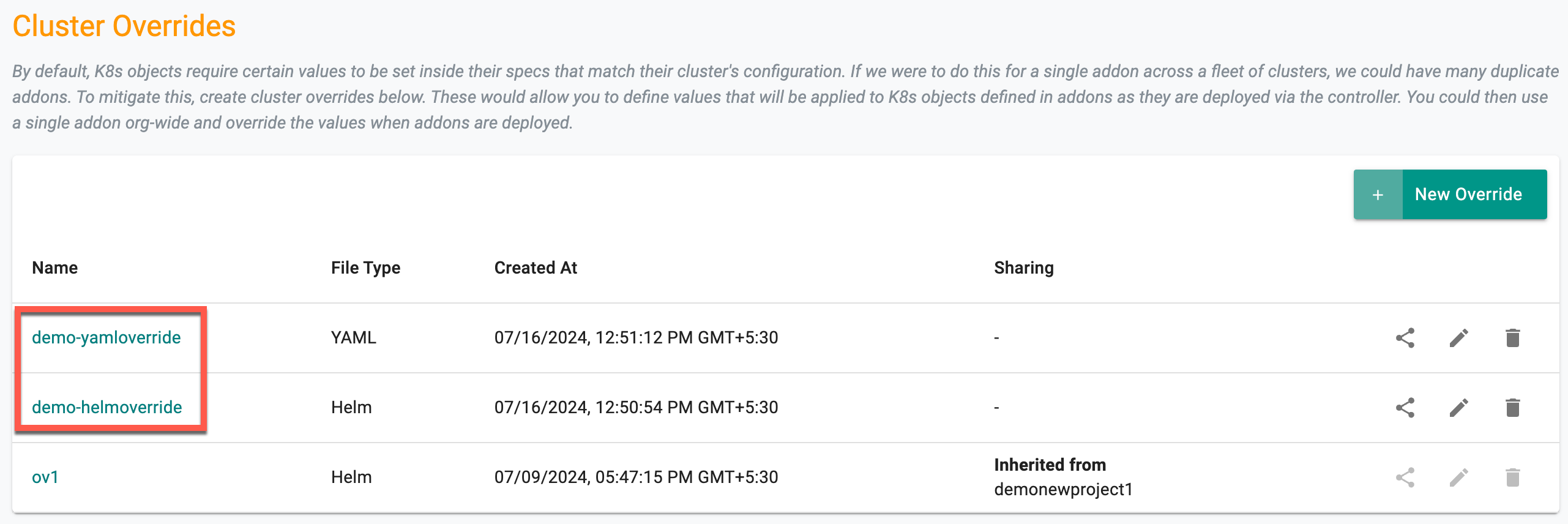

You can view the newly created override listed on the Cluster Overrides page, as shown in the example below.

Step 2: Edit Cluster Override

Click the newly created Cluster Override or its Edit icon to add or edit the required fields.

General

Name and Cluster Override Type are populated by default and are not editable.

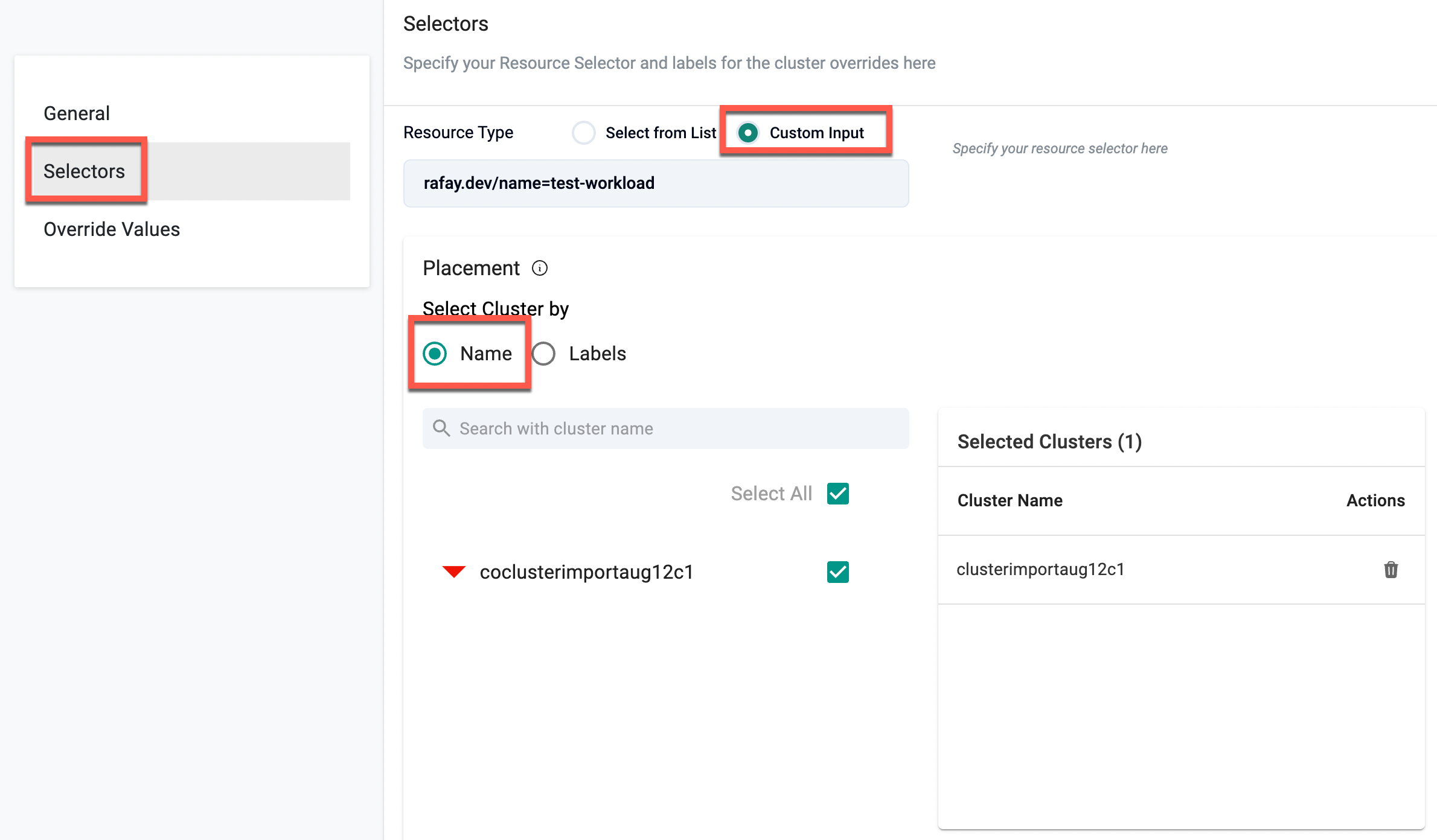

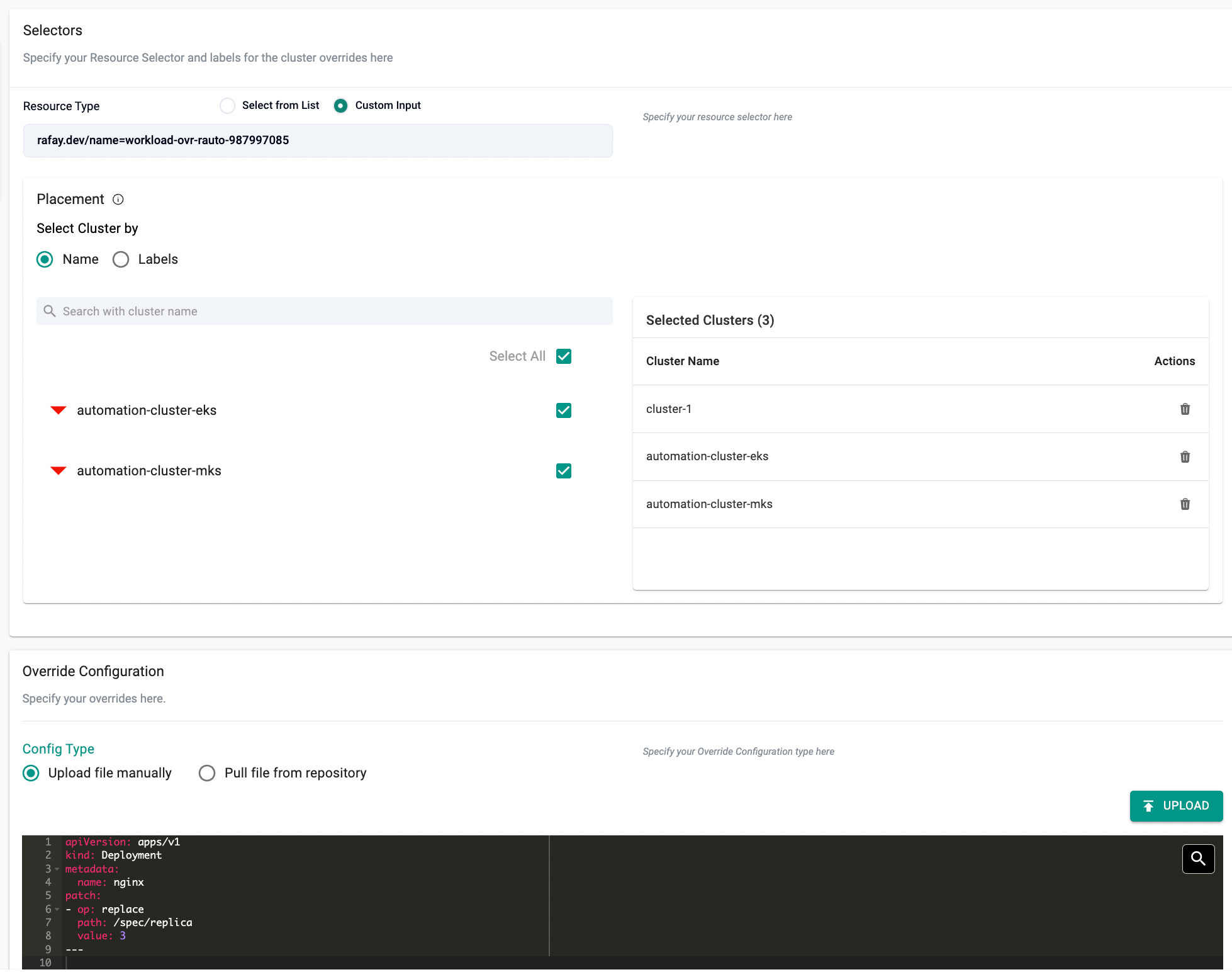

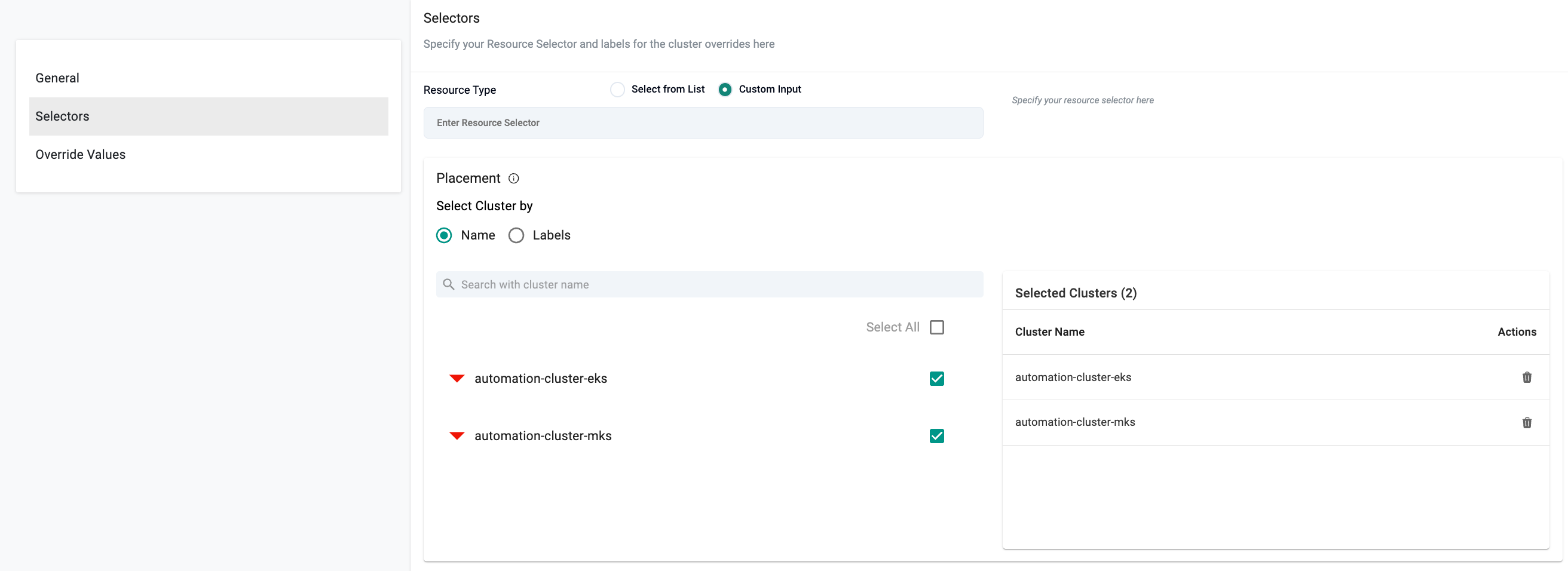

Selectors

- For the Resource Selector, choose the workload to which the override will be applied.

- Either Select from List or provide a Custom Input (e.g., rafay.dev/name=test-workload).

- Under Placement, you can select clusters in two ways:

1. By Cluster Name

- Select Name under "Select Cluster by"

- Use the search box to find and select one or more clusters

- Selected clusters will appear in the Selected Clusters list on the right

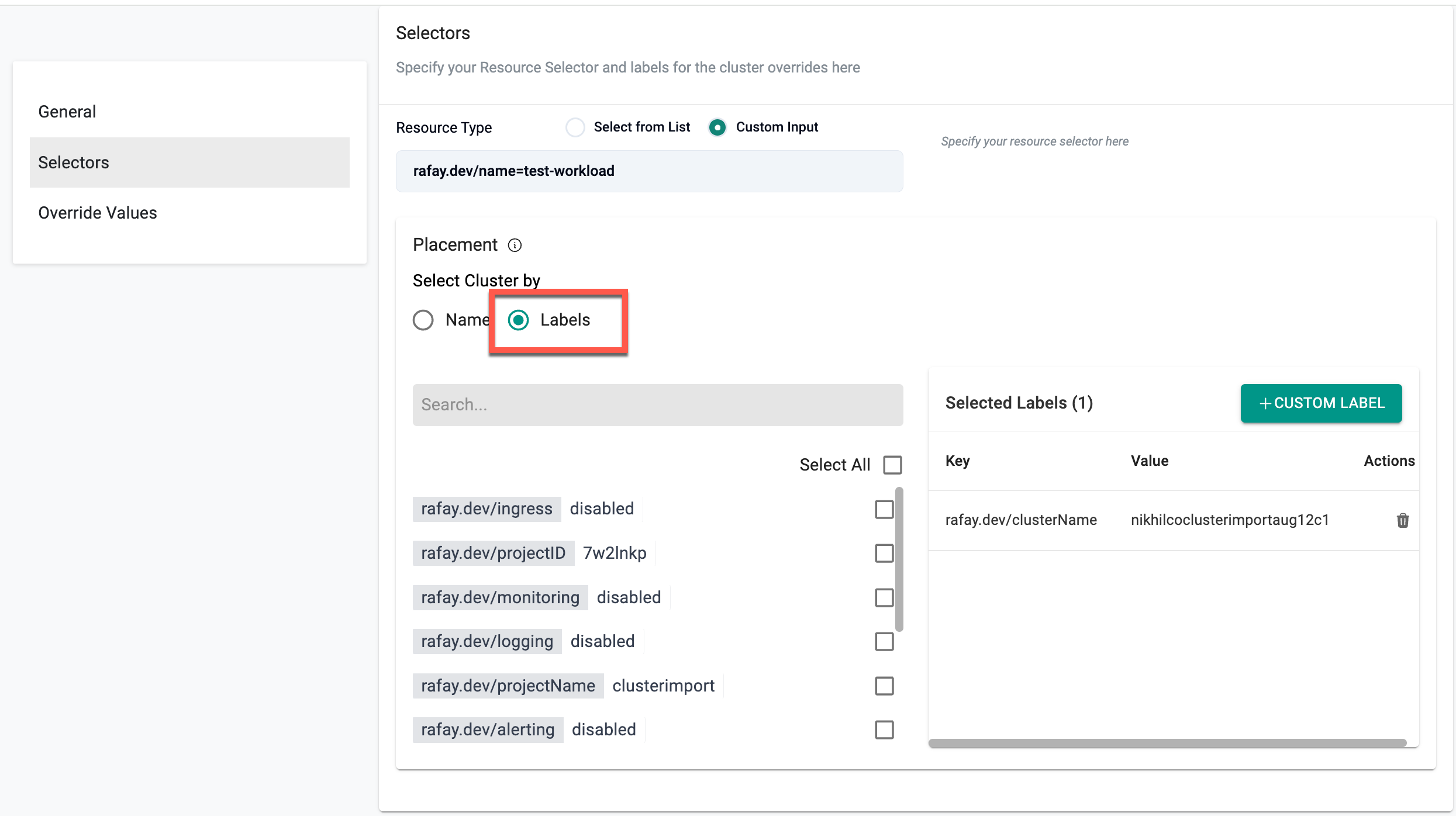

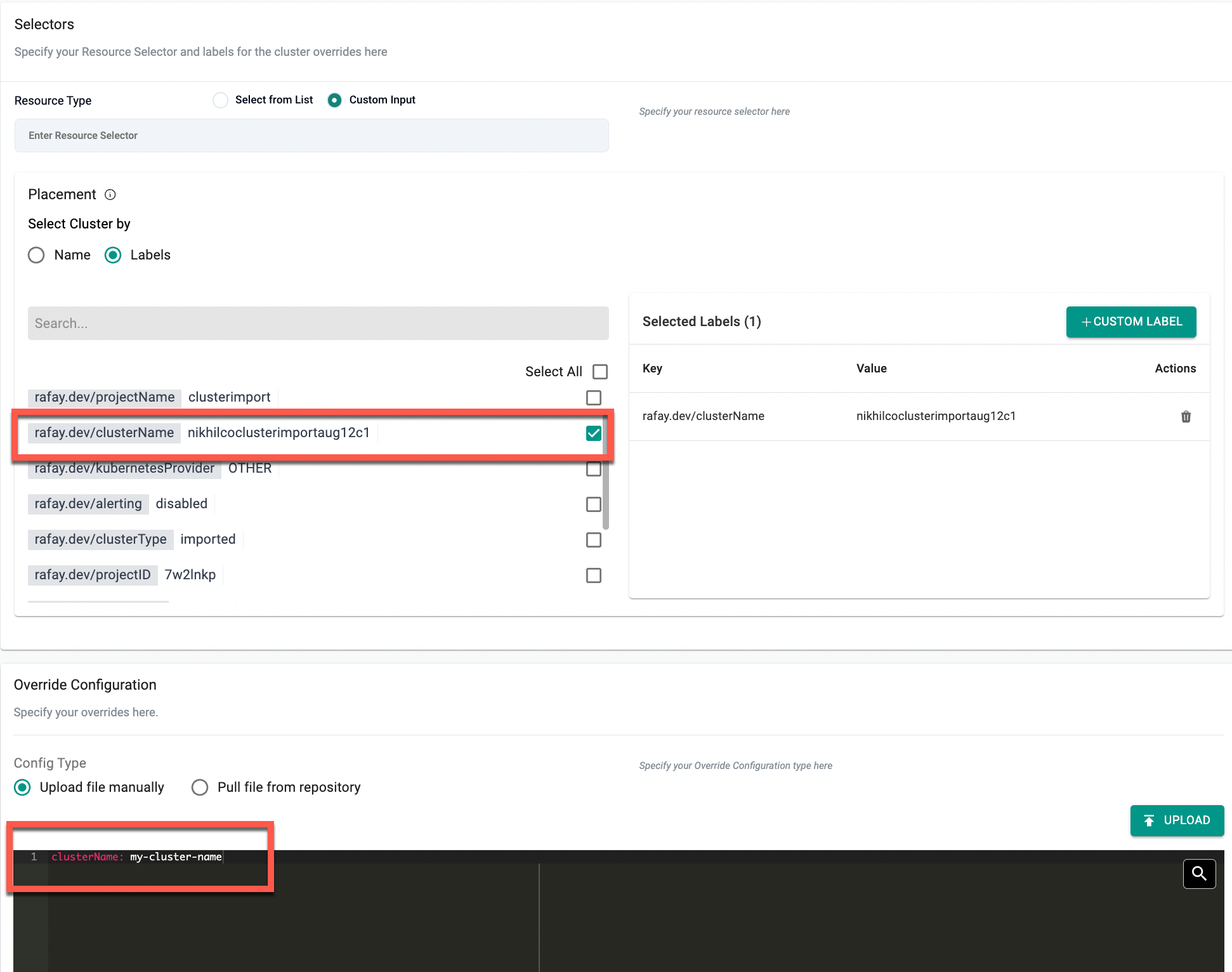

2. By Cluster Labels

- Select Labels under "Select Cluster by"

- Use the search box to filter and select one or more existing labels

- Chosen labels will be displayed under Selected Labels with Key/Value details

- Optionally, click + Custom Label to create a new label

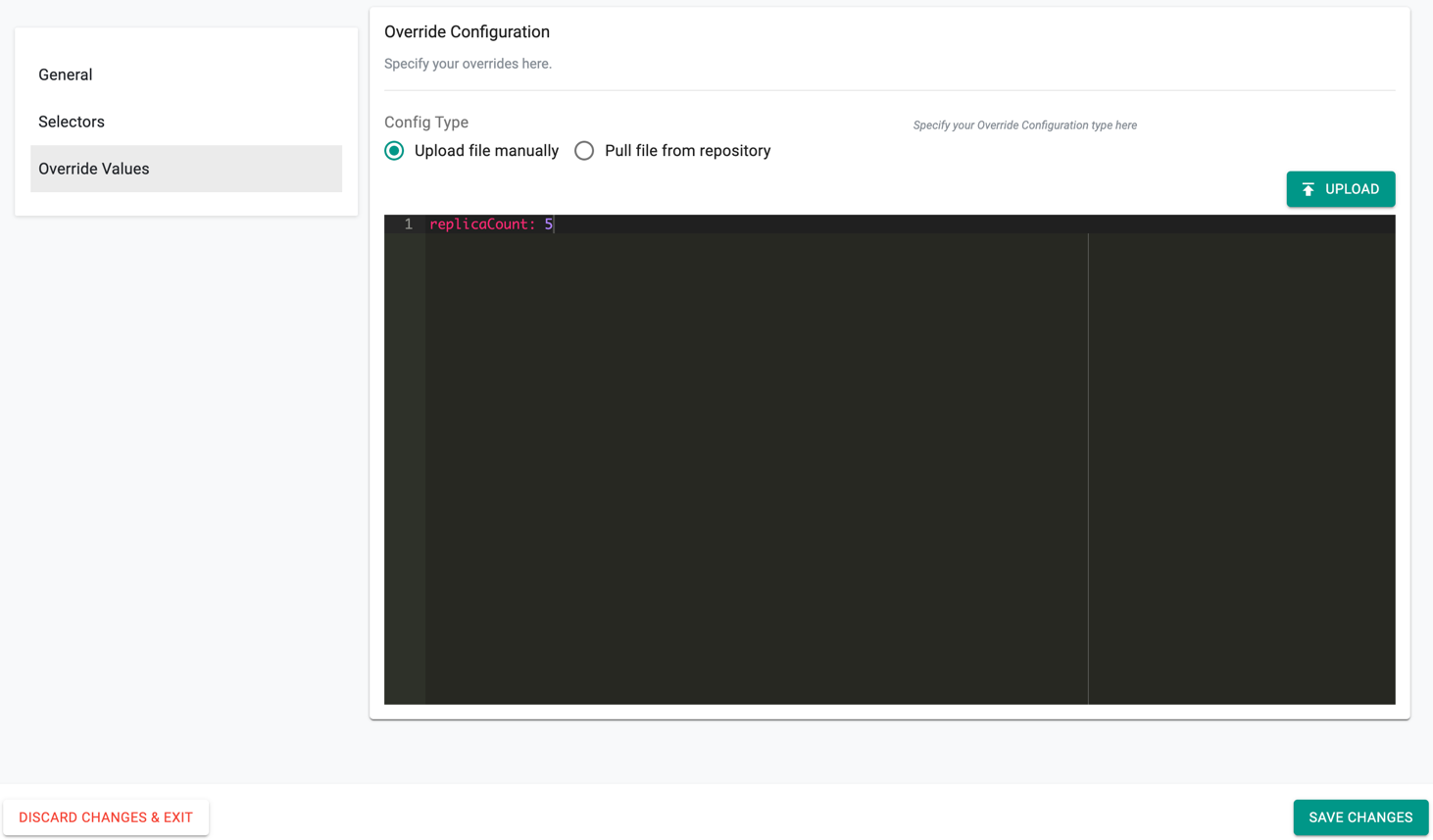

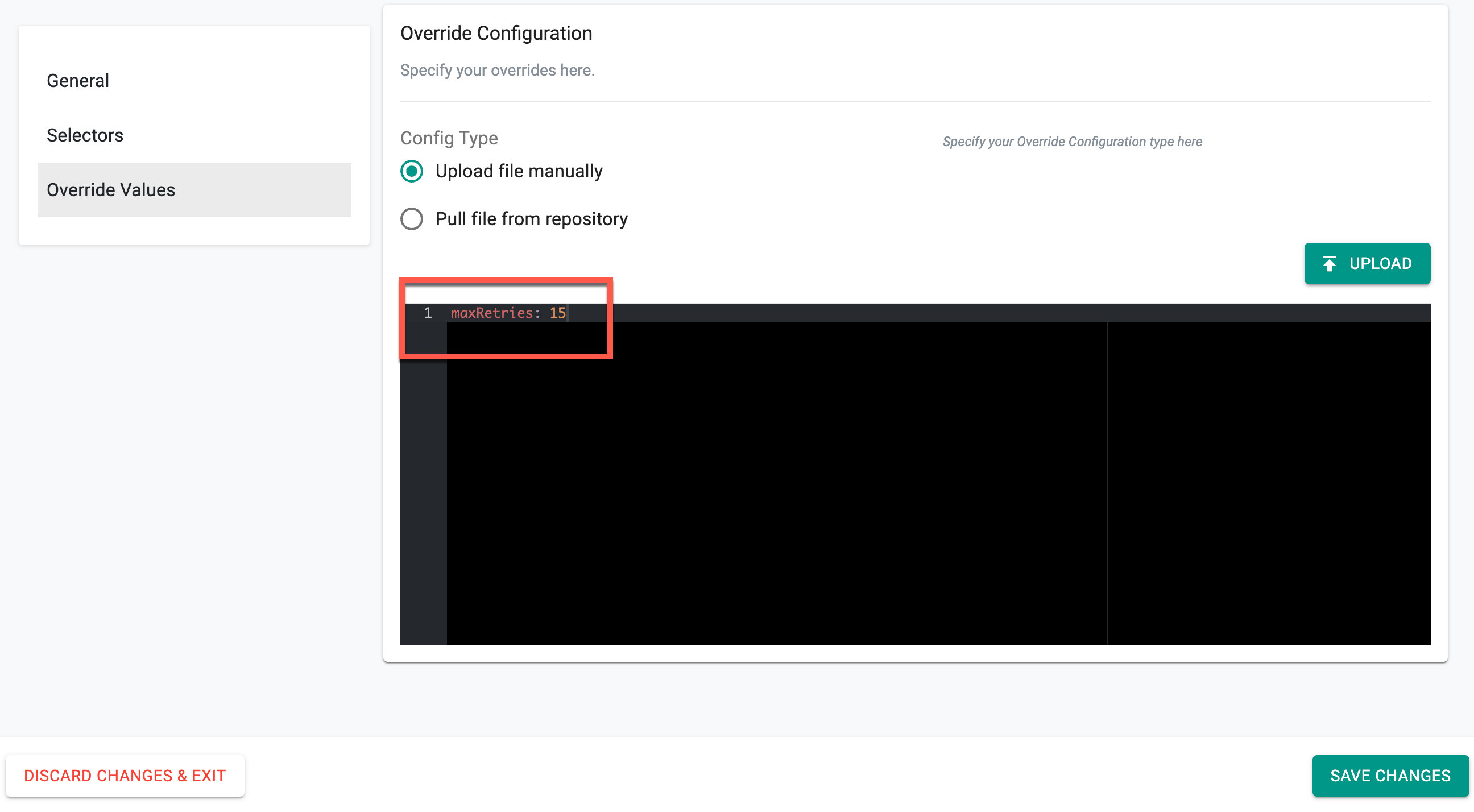

Override Values¶

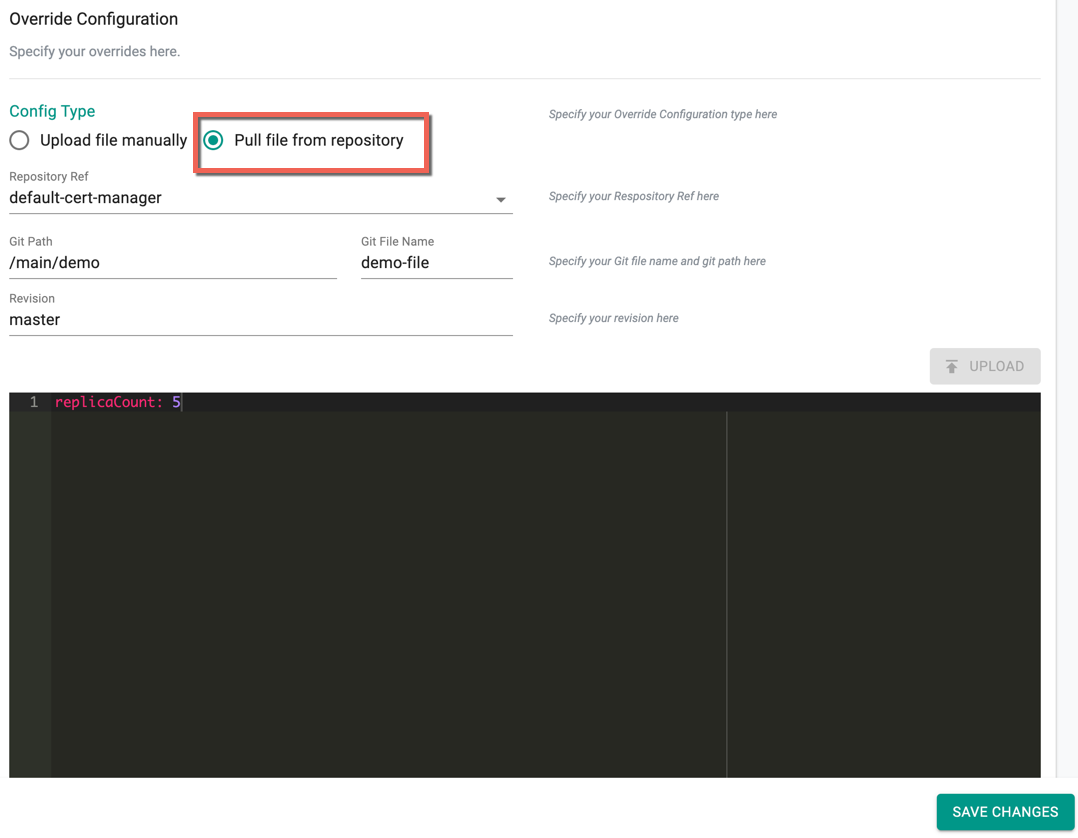

Override Configuration allows the users to specify the override values and apply the values to one or more clusters. You can either provide the values manually or pull file from repository. By default, Upload file Manually is selected

Helm Type Overrides

For the Helm override type, add the override values directly in the config screen, as shown in the example below, which overrides the replica count.

- The Upload button lets you upload an override values file from your system

- To use Pull file from repository, provide the Git repo details to pull the required override values from the specified Git path and file, as shown in the example below

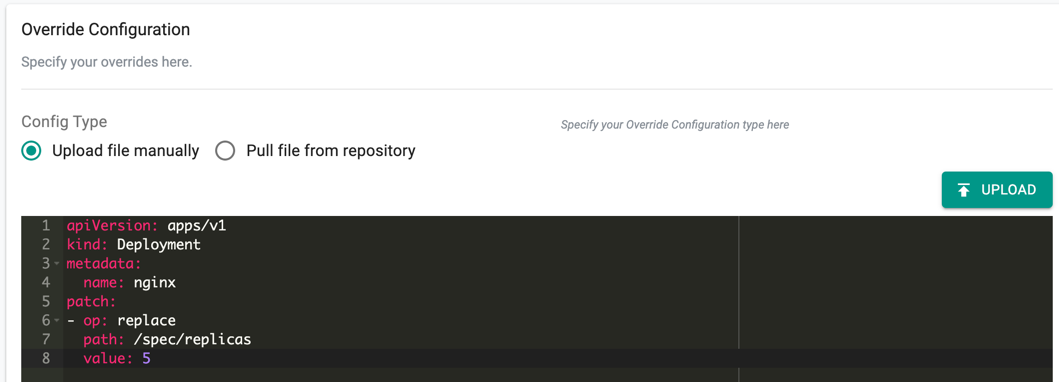

Yaml Type Overrides

For the Yaml override type, define the override values in the following YAML format:

    kind: Deployment
    metadata:
      name: <app-name>
    patch:
    - op: <operation: replace | add | remove>
      path: <atomic path>
      key: <object values>

An illustrative example of the YAML config is provided below:

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: nginx
    patch:
    - op: replace
      path: /spec/replicas
      value: 5
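The patch semantics can be illustrated with a small sketch that applies `op`/`path`/`value` operations to a manifest held as a Python dictionary. This is a simplified model under stated assumptions, not the platform's patch engine: `apply_patch` is a hypothetical helper, it handles only mapping keys (no list indices), and it treats `add` as a plain assignment.

```python
from copy import deepcopy


def apply_patch(manifest: dict, operations: list[dict]) -> dict:
    """Apply replace/add/remove operations addressed by slash-separated paths."""
    result = deepcopy(manifest)
    for operation in operations:
        *parents, leaf = operation["path"].strip("/").split("/")
        target = result
        for part in parents:
            target = target[part]  # raises KeyError if a parent is missing
        op = operation["op"]
        if op == "remove":
            del target[leaf]
        elif op == "replace":
            if leaf not in target:
                raise KeyError(f"cannot replace missing path {operation['path']}")
            target[leaf] = operation["value"]
        elif op == "add":
            target[leaf] = operation["value"]
        else:
            raise ValueError(f"unsupported op {op!r}")
    return result


# The Deployment and patch from the example above, with an assumed starting replica count.
deployment = {
    "apiVersion": "apps/v1",
    "kind": "Deployment",
    "metadata": {"name": "nginx"},
    "spec": {"replicas": 1},
}
patch = [{"op": "replace", "path": "/spec/replicas", "value": 5}]

patched = apply_patch(deployment, patch)
print(patched["spec"]["replicas"])  # 5
```

Working on a copy keeps the original manifest untouched, which mirrors the idea that the workload definition stays the same and only the deployed object differs per cluster.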

As with Helm overrides, Upload lets you upload an override values file from your system. Alternatively, select Pull file from repository and provide the Git repo details to pull the required override values from the specified Git path.

- Click Save Changes

Step 3: Publish the Workload

- Publish the workload to the cluster utilizing the newly created cluster override

Step 4: Verify the cluster override has been applied to the deployment

    kubectl describe pod -n kube-system aws-lb-controller-aws-load-balancer-controller-f5f6d6b47-9kjkl

    Name:         aws-lb-controller-aws-load-balancer-controller-f5f6d6b47-9kjkl
    Namespace:    kube-system
    Priority:     0
    Node:         ip-172-31-114-123.us-west-1.compute.internal/172.31.114.123
    Start Time:   Wed, 07 Jul 2021 16:33:19 +0000
    Labels:       app.kubernetes.io/instance=aws-lb-controller
                  app.kubernetes.io/name=aws-load-balancer-controller
                  pod-template-hash=f5f6d6b47
                  rep-addon=aws-lb-controller
                  rep-cluster=pk0d152
                  rep-drift-reconcillation=enabled
                  rep-organization=d2w714k
                  rep-partner=rx28oml
                  rep-placement=k69rynk
                  rep-project=lk5rdw2
                  rep-workloadid=kv6p0vm
    Annotations:  kubernetes.io/psp: rafay-kube-system-psp
                  prometheus.io/port: 8080
                  prometheus.io/scrape: true
    Status:       Running
    IP:           172.31.103.206
    IPs:
      IP:           172.31.103.206
    Controlled By:  ReplicaSet/aws-lb-controller-aws-load-balancer-controller-f5f6d6b47
    Containers:
      aws-load-balancer-controller:
        Container ID:  docker://e115a56b7444ea55bda8f2503b9b046d6fd84dbffd3cbf77090f35f35c2657ef
        Image:         602401143452.dkr.ecr.us-west-2.amazonaws.com/amazon/aws-load-balancer-controller:v2.1.3
        Image ID:      docker-pullable://602401143452.dkr.ecr.us-west-2.amazonaws.com/amazon/aws-load-balancer-controller@sha256:c7981cc4bb73a9ef5d788a378db302c07905ede035d4a529bfc3afe18b7120ef
        Ports:         9443/TCP, 8080/TCP
        Host Ports:    0/TCP, 0/TCP
        Command:
          /controller
        Args:
          --cluster-name=my-cluster-name
          --ingress-class=alb
        State:          Running
          Started:      Wed, 07 Jul 2021 16:33:25 +0000
        Ready:          True
        Restart Count:  0
        Liveness:       http-get http://:61779/healthz delay=30s timeout=10s period=10s #success=1 #failure=2
        Environment:    <none>
        Mounts:
          /tmp/k8s-webhook-server/serving-certs from cert (ro)
          /var/run/secrets/kubernetes.io/serviceaccount from aws-lb-controller-aws-load-balancer-controller-token-dllmd (ro)
    Conditions:
      Type              Status
      Initialized       True
      Ready             True
      ContainersReady   True
      PodScheduled      True
    Volumes:
      cert:
        Type:        Secret (a volume populated by a Secret)
        SecretName:  aws-load-balancer-tls
        Optional:    false
      aws-lb-controller-aws-load-balancer-controller-token-dllmd:
        Type:        Secret (a volume populated by a Secret)
        SecretName:  aws-lb-controller-aws-load-balancer-controller-token-dllmd
        Optional:    false
    QoS Class:       BestEffort
    Node-Selectors:  <none>
    Tolerations:     node.kubernetes.io/not-ready:NoExecute op=Exists for 300s
                     node.kubernetes.io/unreachable:NoExecute op=Exists for 300s
    Events:
      Type    Reason     Age    From               Message
      ----    ------     ----   ----               -------
      Normal  Scheduled  3m27s  default-scheduler  Successfully assigned kube-system/aws-lb-controller-aws-load-balancer-controller-f5f6d6b47-9kjkl to ip-172-31-114-123.us-west-1.compute.internal
      Normal  Pulling    3m26s  kubelet            Pulling image "602401143452.dkr.ecr.us-west-2.amazonaws.com/amazon/aws-load-balancer-controller:v2.1.3"
      Normal  Pulled     3m22s  kubelet            Successfully pulled image "602401143452.dkr.ecr.us-west-2.amazonaws.com/amazon/aws-load-balancer-controller:v2.1.3" in 4.252275657s
      Normal  Created    3m21s  kubelet            Created container aws-load-balancer-controller
      Normal  Started    3m21s  kubelet            Started container aws-load-balancer-controller
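One way to check the result programmatically is to look for the `--cluster-name` argument in the describe output. The sketch below assumes the output has already been captured as text; `find_flag_value` is a hypothetical helper, and the sample is a short excerpt of the output above.

```python
from typing import Optional


def find_flag_value(describe_output: str, flag: str) -> Optional[str]:
    """Return the value of a '--flag=value' line in the text, or None if absent."""
    prefix = f"--{flag}="
    for line in describe_output.splitlines():
        stripped = line.strip()
        if stripped.startswith(prefix):
            return stripped[len(prefix):]
    return None


# Excerpt of the container arguments from the describe output.
sample = """\
    Args:
      --cluster-name=my-cluster-name
      --ingress-class=alb
"""

cluster_name = find_flag_value(sample, "cluster-name")
print(cluster_name)  # my-cluster-name
```

If the override was not applied, the flag would carry the chart's blank default or be missing, so comparing the returned value with the expected cluster name gives a simple pass/fail signal.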

Important

- Users can share workload overrides with one or more projects. For more information, refer to Share Override

- If multiple workload overrides match the resource in a cluster, the overrides are applied in the order they were created, with the latest override taking priority. This also applies to overrides shared across projects
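The precedence rule can be sketched as follows: matching overrides are applied oldest first, so a value set by a more recently created override replaces the same key from an earlier one. Only the ordering rule comes from the text above; the `Override` class, the `resolve` function, the timestamps, and the shallow merge that keeps non-conflicting keys are assumptions made for illustration.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class Override:
    name: str
    created_at: datetime
    values: dict


def resolve(matching: list[Override]) -> dict:
    """Apply overrides oldest-first so the most recently created one wins."""
    effective: dict = {}
    for override in sorted(matching, key=lambda o: o.created_at):
        effective.update(override.values)  # shallow merge, for brevity
    return effective


# Hypothetical overrides that both match the same workload and cluster.
overrides = [
    Override("newer", datetime(2024, 3, 1), {"replicaCount": 3}),
    Override("older", datetime(2024, 1, 1), {"replicaCount": 1, "clusterName": "my-cluster-name"}),
]

effective = resolve(overrides)
print(effective)  # {'replicaCount': 3, 'clusterName': 'my-cluster-name'}
```

Sorting by creation time rather than relying on list order makes the outcome independent of how the overrides happen to be listed.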

Example with Custom Labels Placement Type

Override Values

    clusterName: my-cluster-name

Example with Custom Value Placement Type

Override Values

    replicaCount: 1
    image:
      repository: nginx
      pullPolicy: Always
      tag: "1.19.8"
    service:
      type: ClusterIP
      port: 8080

Configurable Workload Retry Limit

Users who experience delays in workload deployment due to multiple readiness check retries, especially when dependencies are involved, can now configure the number of retries for workloads. This enhancement allows users to define a retry limit, ensuring faster failure detection when workloads do not become ready within a specified time. By adjusting the retry count, users can optimize workload deployment efficiency and have greater control over workload readiness.

To set the retry limit,

- Click New Override and provide a name

- Select Setting as the Cluster Override type and click Create

- Select the required Resource Selector and Placement type. The example below shows the Specific Cluster type

Note: Both Yaml and Helm types are supported

- Specify the maximum retry count in the configuration editor, as shown below, where maxretry is set to 15.

⚠️ Important: The retry count must be between 2 and 20.

- Click Save Changes

Once the retry limit is set to 15 and saved, the system enforces this as the maximum number of readiness check retries for the selected cluster workloads. If a workload fails the readiness check initially, it will be retried up to 15 times. If it becomes ready within these attempts, the deployment continues. Otherwise, after 15 failed retries, the system stops further attempts and marks the workload as failed, ensuring timely detection of deployment issues.
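The retry behavior described above can be modeled roughly as follows: one initial readiness check, then at most `max_retry` retries before the workload is treated as failed. This is a sketch, not the platform's logic: `validate_max_retry` and `wait_until_ready` are hypothetical names, the 2 to 20 range is assumed to be inclusive, and any delay between retries is omitted.

```python
from typing import Callable

MIN_RETRIES, MAX_RETRIES = 2, 20  # allowed range for the retry count


def validate_max_retry(max_retry: int) -> int:
    """Reject retry counts outside the allowed range (assumed inclusive)."""
    if not MIN_RETRIES <= max_retry <= MAX_RETRIES:
        raise ValueError(f"maxretry must be between {MIN_RETRIES} and {MAX_RETRIES}")
    return max_retry


def wait_until_ready(is_ready: Callable[[], bool], max_retry: int) -> bool:
    """Run an initial readiness check, then up to max_retry retries."""
    validate_max_retry(max_retry)
    if is_ready():
        return True
    for _ in range(max_retry):
        if is_ready():
            return True
    return False  # treated as failed after max_retry failed retries


# Simulated readiness probe: fails twice, then succeeds.
attempts = iter([False, False, True])
print(wait_until_ready(lambda: next(attempts), max_retry=15))  # True
```

A lower limit surfaces a failing workload sooner, while a higher one gives slow dependencies more time to come up; the validation step reflects that values outside the allowed range are rejected rather than silently clamped, which is also an assumption.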