2021

v1.9.0¶

17 Dec, 2021

Upstream Kubernetes¶

k8s v1.22¶

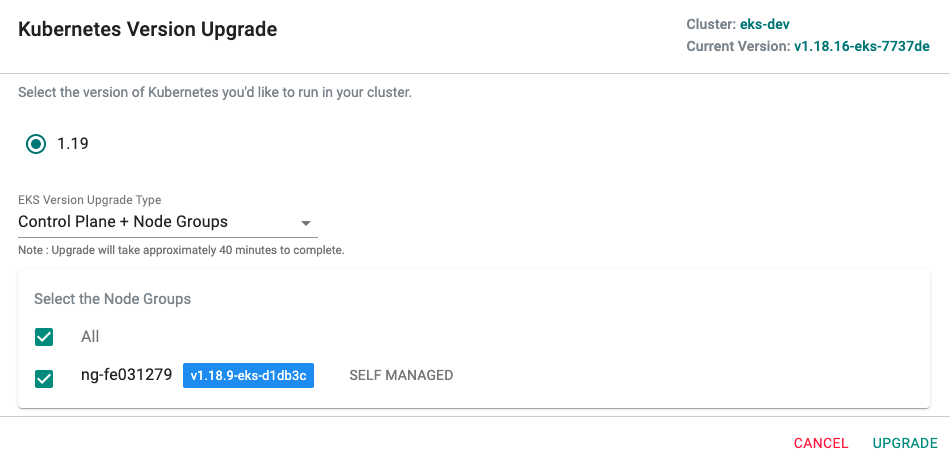

Provisioning of new upstream Kubernetes clusters based on v1.22. Existing clusters based on older versions can be upgraded in-place to v1.22.

Note that Kubernetes v1.22 removes several core APIs. Plan and test before performing upgrades to ensure your applications are not impacted.
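One way to plan for the v1.22 API removals is to scan manifests before upgrading. The sketch below assumes the manifests have already been parsed into dicts (for example with a YAML library). It checks them against a partial list of `(apiVersion, kind)` pairs that upstream Kubernetes stopped serving in v1.22. The function name `find_removed_apis` is hypothetical and is not part of the controller or RCTL.

```python
# Partial list of (apiVersion, kind) pairs no longer served in Kubernetes v1.22.
REMOVED_IN_1_22 = {
    ("admissionregistration.k8s.io/v1beta1", "MutatingWebhookConfiguration"),
    ("admissionregistration.k8s.io/v1beta1", "ValidatingWebhookConfiguration"),
    ("apiextensions.k8s.io/v1beta1", "CustomResourceDefinition"),
    ("apiregistration.k8s.io/v1beta1", "APIService"),
    ("certificates.k8s.io/v1beta1", "CertificateSigningRequest"),
    ("coordination.k8s.io/v1beta1", "Lease"),
    ("extensions/v1beta1", "Ingress"),
    ("networking.k8s.io/v1beta1", "Ingress"),
    ("networking.k8s.io/v1beta1", "IngressClass"),
    ("rbac.authorization.k8s.io/v1beta1", "ClusterRole"),
    ("rbac.authorization.k8s.io/v1beta1", "ClusterRoleBinding"),
    ("rbac.authorization.k8s.io/v1beta1", "Role"),
    ("rbac.authorization.k8s.io/v1beta1", "RoleBinding"),
    ("scheduling.k8s.io/v1beta1", "PriorityClass"),
    ("storage.k8s.io/v1beta1", "CSIDriver"),
    ("storage.k8s.io/v1beta1", "CSINode"),
    ("storage.k8s.io/v1beta1", "StorageClass"),
    ("storage.k8s.io/v1beta1", "VolumeAttachment"),
}


def find_removed_apis(manifests):
    """Return 'Kind/name (apiVersion)' for each manifest that uses a removed API."""
    findings = []
    for manifest in manifests:
        key = (manifest.get("apiVersion"), manifest.get("kind"))
        if key in REMOVED_IN_1_22:
            name = manifest.get("metadata", {}).get("name", "<unnamed>")
            findings.append(f"{key[1]}/{name} ({key[0]})")
    return findings


manifests = [
    {"apiVersion": "networking.k8s.io/v1beta1", "kind": "Ingress", "metadata": {"name": "web"}},
    {"apiVersion": "networking.k8s.io/v1", "kind": "Ingress", "metadata": {"name": "api"}},
]
print(find_removed_apis(manifests))  # ['Ingress/web (networking.k8s.io/v1beta1)']
```

Anything reported needs to be migrated to the replacement API version (for example `networking.k8s.io/v1` for Ingress) before the cluster is upgraded.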

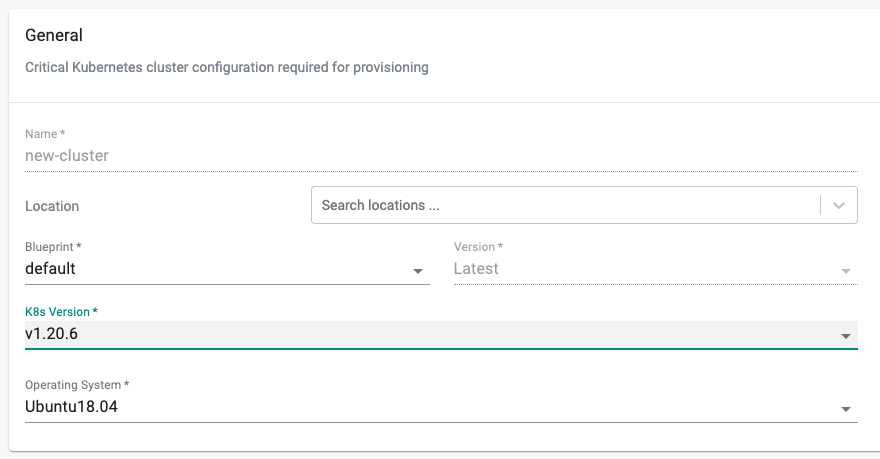

Extended Cluster Name¶

Upstream Kubernetes clusters can now be provisioned with extended cluster names with a maximum length of 63 characters.
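A minimal pre-flight check for the new limit could look like the sketch below. Only the 63-character maximum comes from this release note; the character rules are an assumption (the Kubernetes DNS-1123 label format: lowercase alphanumerics and `-`, starting and ending with an alphanumeric), and `is_valid_cluster_name` is a hypothetical helper.

```python
import re

MAX_CLUSTER_NAME_LEN = 63  # limit stated in this release note

# Assumption: cluster names follow the DNS-1123 label format.
_DNS1123_LABEL = re.compile(r"[a-z0-9]([-a-z0-9]*[a-z0-9])?")


def is_valid_cluster_name(name: str) -> bool:
    """Hypothetical check: length limit plus assumed DNS-1123 label syntax."""
    return len(name) <= MAX_CLUSTER_NAME_LEN and _DNS1123_LABEL.fullmatch(name) is not None
```

`fullmatch` is used instead of `match` with `$` so that a trailing newline cannot slip through.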

Read Only Org Admin Role¶

A new "read only" organization admin role is available. Users with this role have "read only" access to all resources that a regular organization administrator has access to.

Amazon EKS¶

Subnets¶

Users can now specify different subnets for the EKS control plane and node groups during cluster provisioning.

Host Network for Calico¶

Turnkey support for hostnetwork is now available for EKS clusters using the Calico CNI.

Single Sign On (SSO)¶

SSO integration has been enhanced to support an option where "group information" is not sent by the configured Identity Provider (IdP). These users are authenticated by the IdP, but their role assignments are performed locally by the Org Administrator.

Zero Trust Kubectl¶

Customers using private relay networks can now configure and use custom certificates for authentication.

Download Container Logs¶

The platform's integrated debugging and troubleshooting capabilities have been enhanced, allowing authorized developers to download "container logs" from remote clusters to their laptops with a single click.

GitOps¶

Secrets Encryption¶

GitOps pipelines now support automatic encryption of sensitive data that is stored and accessed from customer Git repositories.

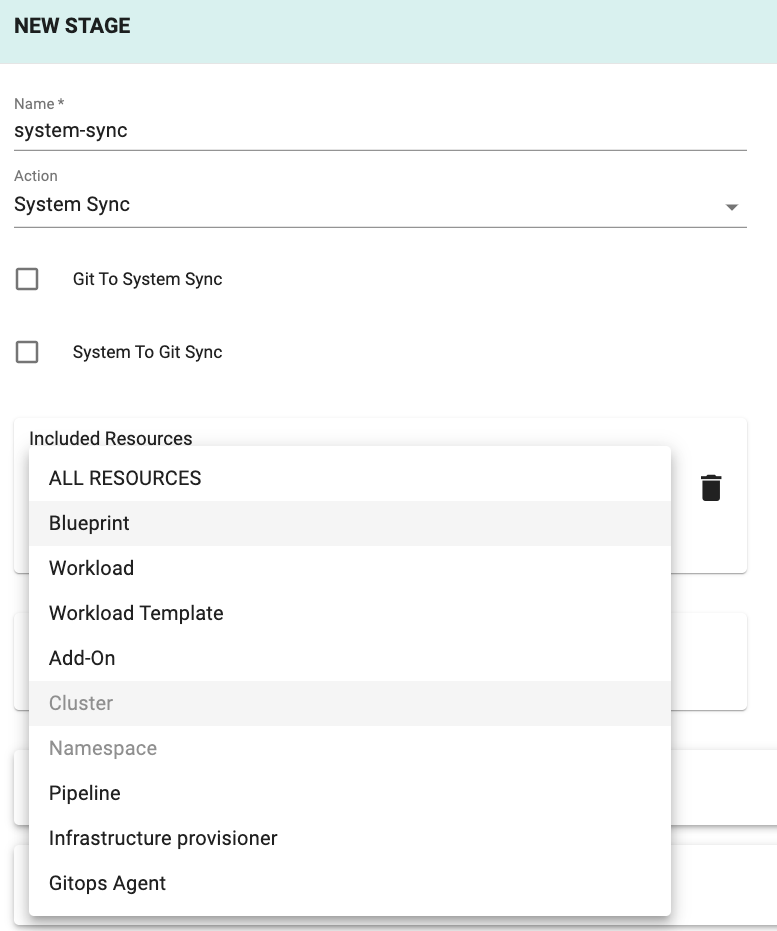

Insert Stages¶

Users can now insert a new stage between existing stages in their pipelines. This allows users to incrementally iterate and tweak workflows in a non-invasive manner.

Bug Fixes¶

| Bug ID | Description |
|---|---|
| RC-12411 | Whitelabeling for Namespace wizard |
| RC-12133 | 'Clear filter' button is not working on the System > Alert page |
| RC-12132 | No option to delete a Project that has a long name |
| RC-11946 | Provide "Save and Exit" button in IdP configuration page |
| RC-11562 | Unsharing a cluster from one project set the cluster to not shared in the v2 DB, causing the deployment/GitOps agent to stop working in shared projects |
| RC-10847 | EKS Upgrade: When upgrading only a node group in an EKS cluster, show the node group name in the summary |
| RC-9708 | EKS cluster provisioning still failed after setting the correct permissions for the cloud credentials |
| RC-9277 | Kubectl console from the web console is overlapping with the dashboard |

v1.8.0¶

19 Nov, 2021

Amazon EKS¶

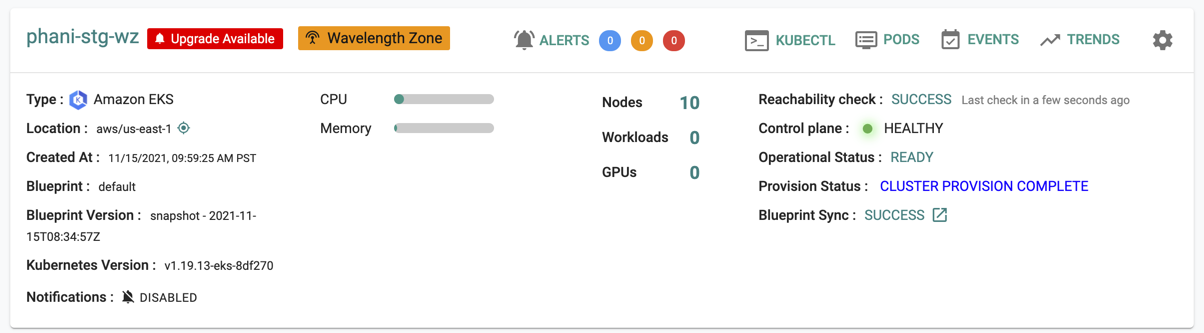

AWS Wavelength¶

AWS Wavelength zones are ‘availability zones’ where compute and storage services are embedded within a telecom provider's data centers at the edge of the 5G network. Wavelength zones bring processing power and storage physically closer to 5G mobile users and wireless devices delivering enhanced user experiences such as near "real-time analytics" for instant decision-making or automated robotic systems in manufacturing facilities.

Infrastructure administrators can now:

- Provision node groups in wavelength zones (WZ)

- Automatically assign a Carrier IP to nodes in WZ and display it in the dashboard

- Provision a Carrier Gateway


Application owners can now leverage advanced placement strategies to deploy the "same workload to different wavelength zones in the same EKS cluster".

Scale to 0¶

EKS Clusters with managed node groups can now be scaled to zero (0) worker nodes allowing users to save AWS spend during periods of no utilization.
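In terms of the EKS API, scaling a managed node group to zero means a scaling configuration with `minSize` and `desiredSize` of 0. The EKS API still requires `maxSize` to be at least 1, which preserves the headroom to scale back up later. The sketch below only builds that configuration; how the controller applies it internally is not described here, and `scale_to_zero_config` is a hypothetical helper.

```python
def scale_to_zero_config(max_size: int) -> dict:
    """Build an EKS managed node group scalingConfig with zero worker nodes.

    The EKS API requires maxSize >= 1, so the upper bound is kept so that
    the node group can be scaled up again later.
    """
    if max_size < 1:
        raise ValueError("maxSize must be at least 1")
    return {"minSize": 0, "maxSize": max_size, "desiredSize": 0}
```

Outside the controller, the same dictionary could be passed as the `scalingConfig` argument of boto3's `eks` client method `update_nodegroup_config(clusterName=..., nodegroupName=..., scalingConfig=...)`.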

Blueprints¶

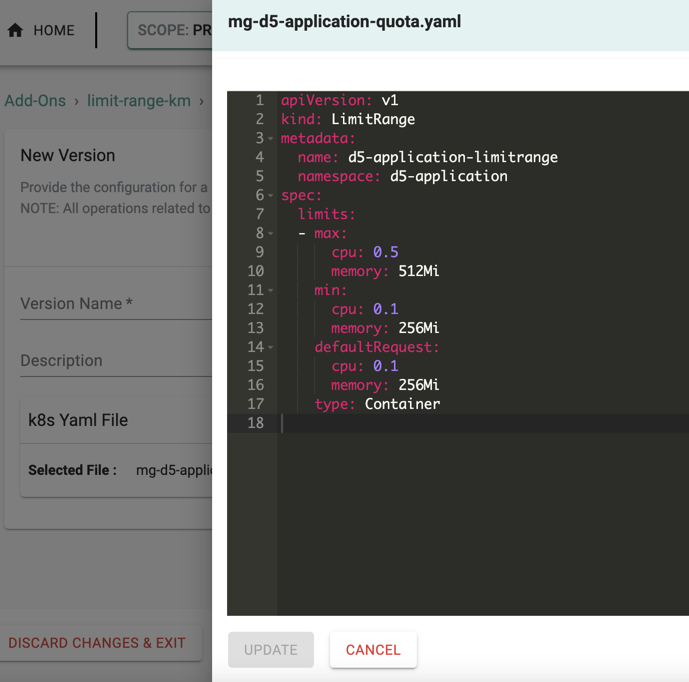

Inline YAML Editor¶

Support for inline editing of YAML-based addons in a cluster blueprint.

Swagger APIs¶

Swagger APIs have been added to (a) download bootstrap YAML files for imported clusters and (b) add labels to nodes.

Bug Fixes¶

| Bug ID | Description |
|---|---|
| RC-12096 | Resolved issue applying TLS private key to Wizard workloads |
| RC-12078 | Prevent deleting a node when it is the only node in the cluster and is the master |
| RC-12026 | Correctly render Cluster Resource Utilization Chart |
| RC-11828 | Fixed issue with shared Addon/Blueprint and Cluster Override |
| RC-11946 | Added "Save and Exit" to IdP configuration Page |
| RC-10079 | Added validation to reject multiple namespaces in the YAML manifest / YAML workload |
| RC-7965 | Added ability to edit and save managed Addon Config files |

v1.7.0¶

29 Oct, 2021

Cluster Blueprints¶

The RCTL CLI now provides administrators the ability to specify the blueprint version in declarative cluster specification files for imported clusters.

Amazon AWS¶

Tags/Labels¶

Cluster administrators can now use the RCTL CLI to update "tags" and "labels" for managed node groups after creation.

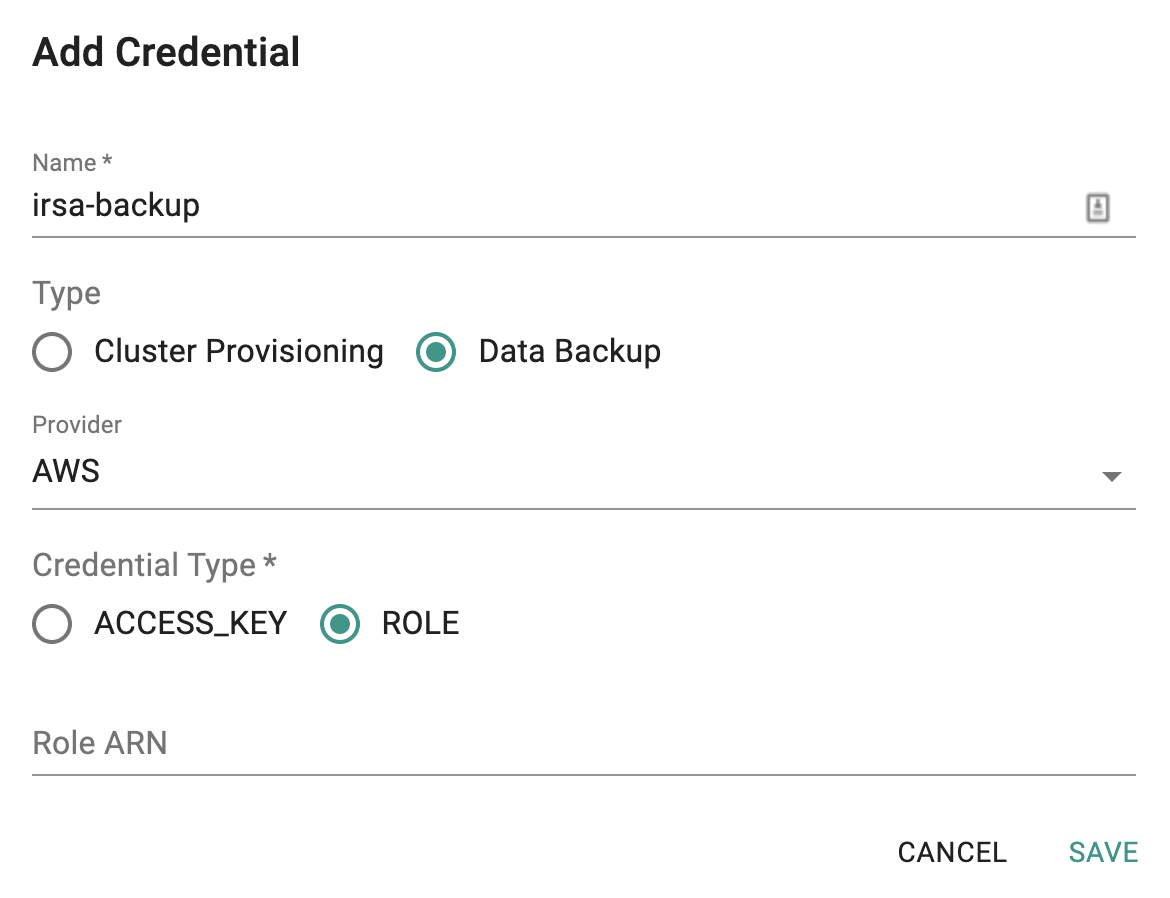

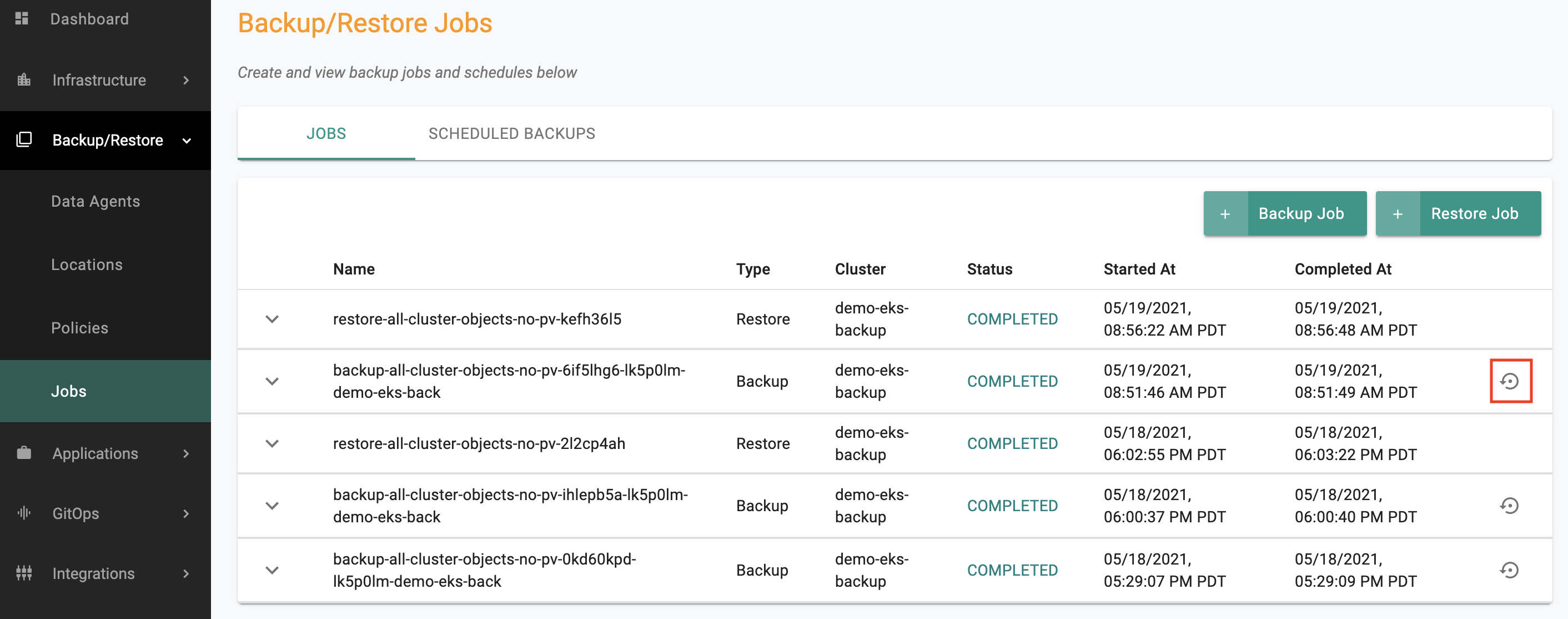

Backup/Restore¶

In addition to access keys, AWS IAM Roles for Service Accounts (IRSA) can now be used as credentials to perform backups/restores of Amazon EKS Clusters to S3. In addition to managing the entire lifecycle and operations using the web console, operations can now also be fully automated using the RCTL CLI.

Custom AMI¶

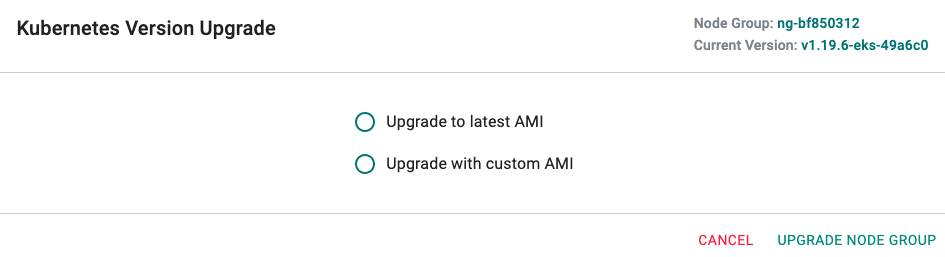

Full lifecycle management (provisioning, scaling and in-place upgrades) for managed node groups with Custom AMIs is now supported.

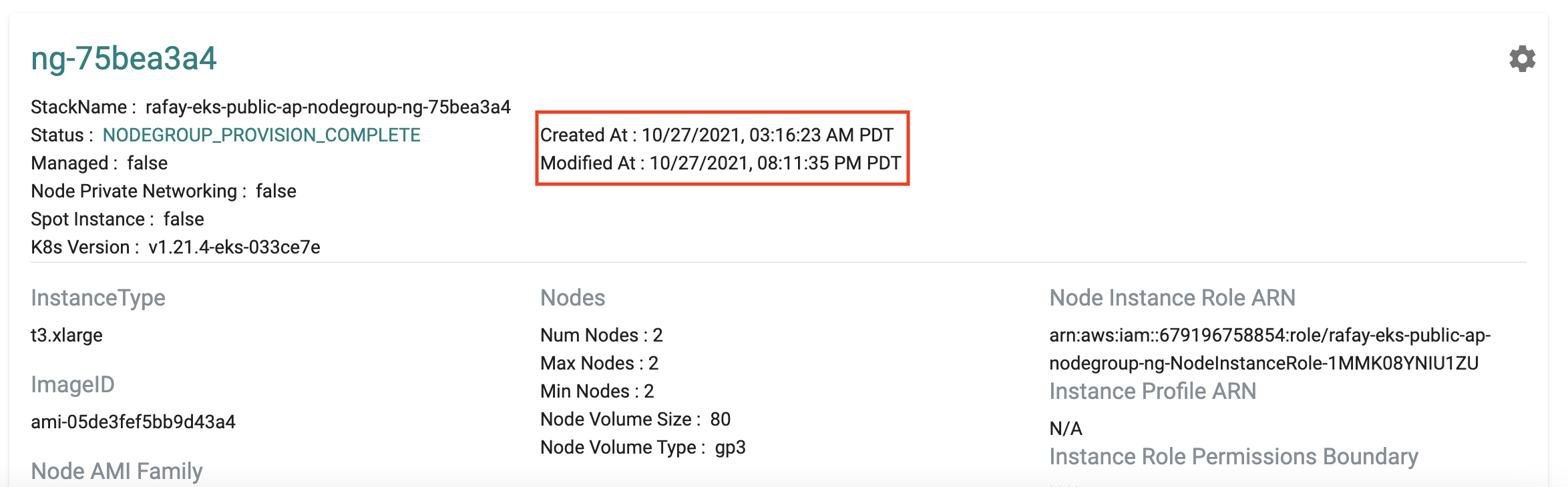

Node Group Timestamps¶

Both creation and last update timestamps for node groups are now displayed on the web console. This allows administrators to quickly answer "when was the last change made?"

Tagging Enhancements¶

Support for tagging of the OIDC provider, launch template resources and Auto Scaling groups (ASGs).

Azure¶

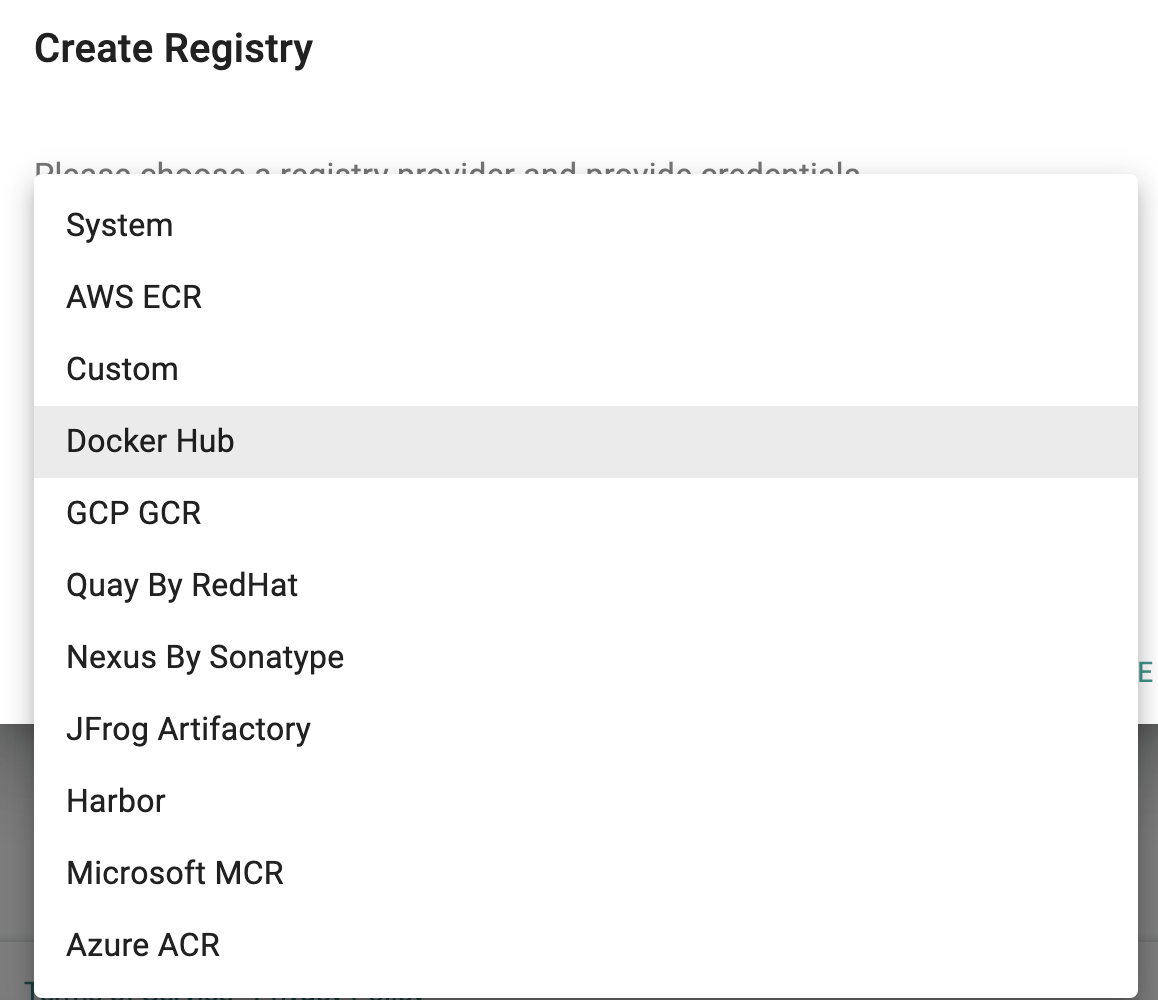

Azure Container Registry¶

A turnkey integration with Azure Container Registry (ACR) is now available.

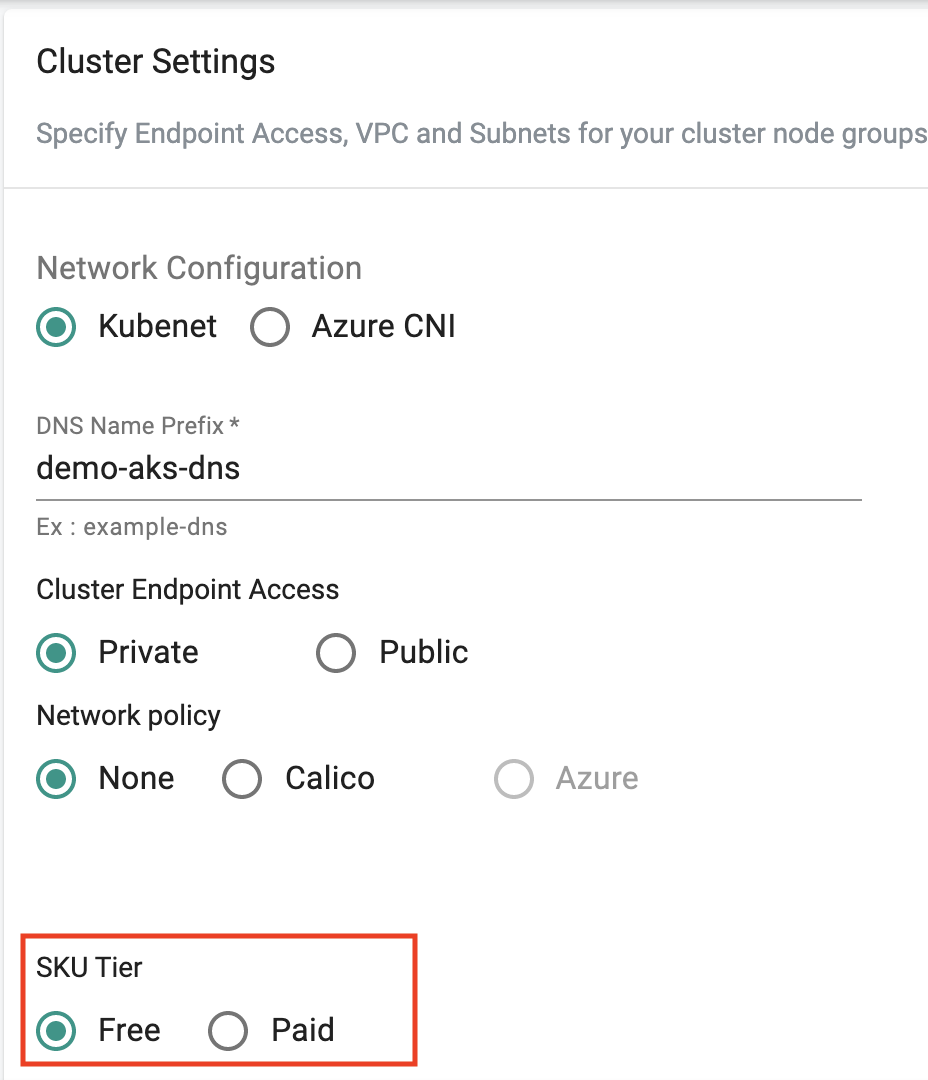

SLA Backed AKS Control Plane¶

Administrators can optionally select an SLA-backed control plane for their AKS clusters.

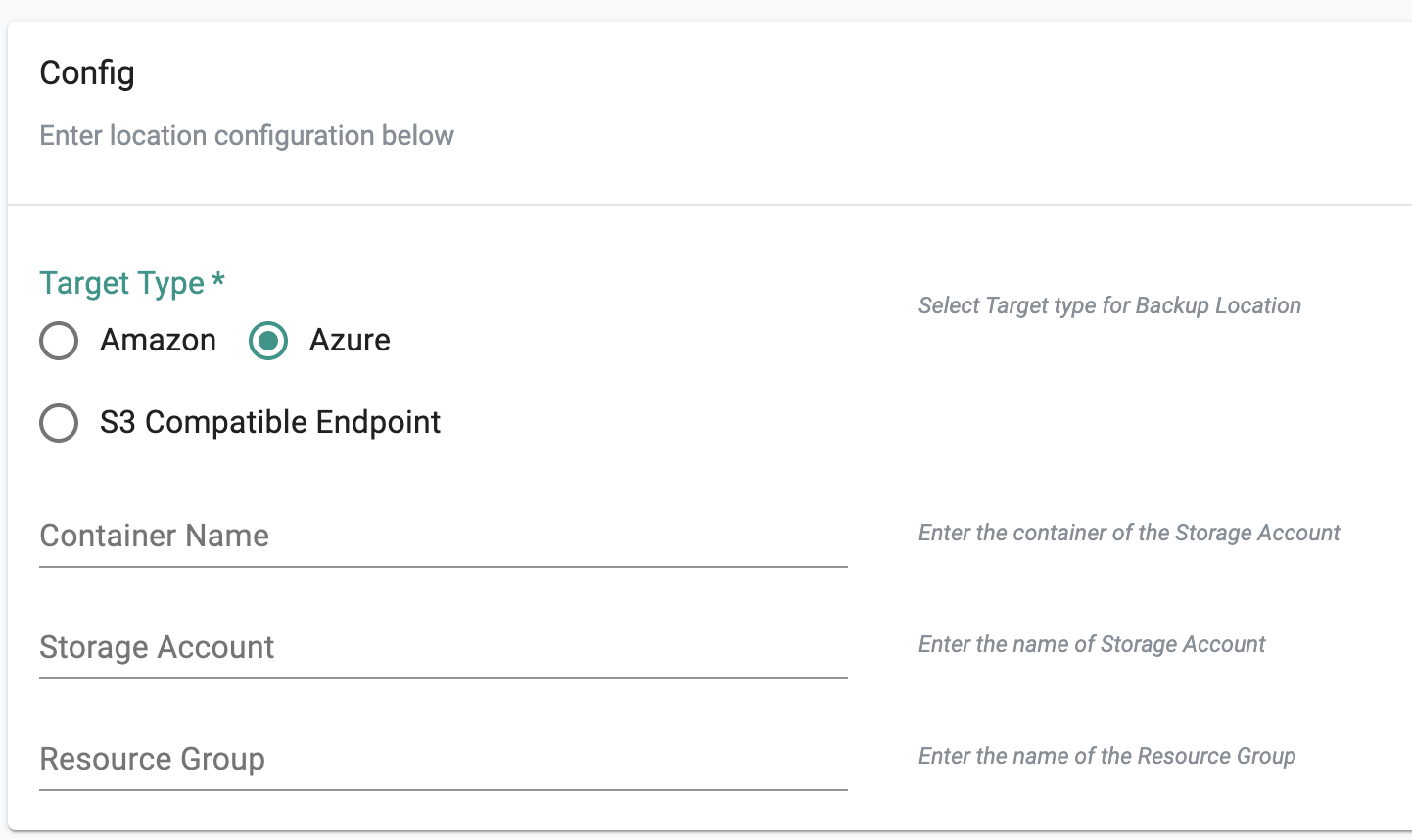

Backup/Restore¶

Streamlined backup and restore operations can now be performed on AKS clusters with backups to Azure storage.

Upstream Kubernetes¶

A declarative cluster specification can be used with the RCTL CLI for lifecycle management of upstream Kubernetes clusters managed by the controller.

SSO Integration¶

The entire lifecycle for integration with an Identity Provider for Single Sign On (SSO) support can be managed entirely via automation using the RCTL CLI.

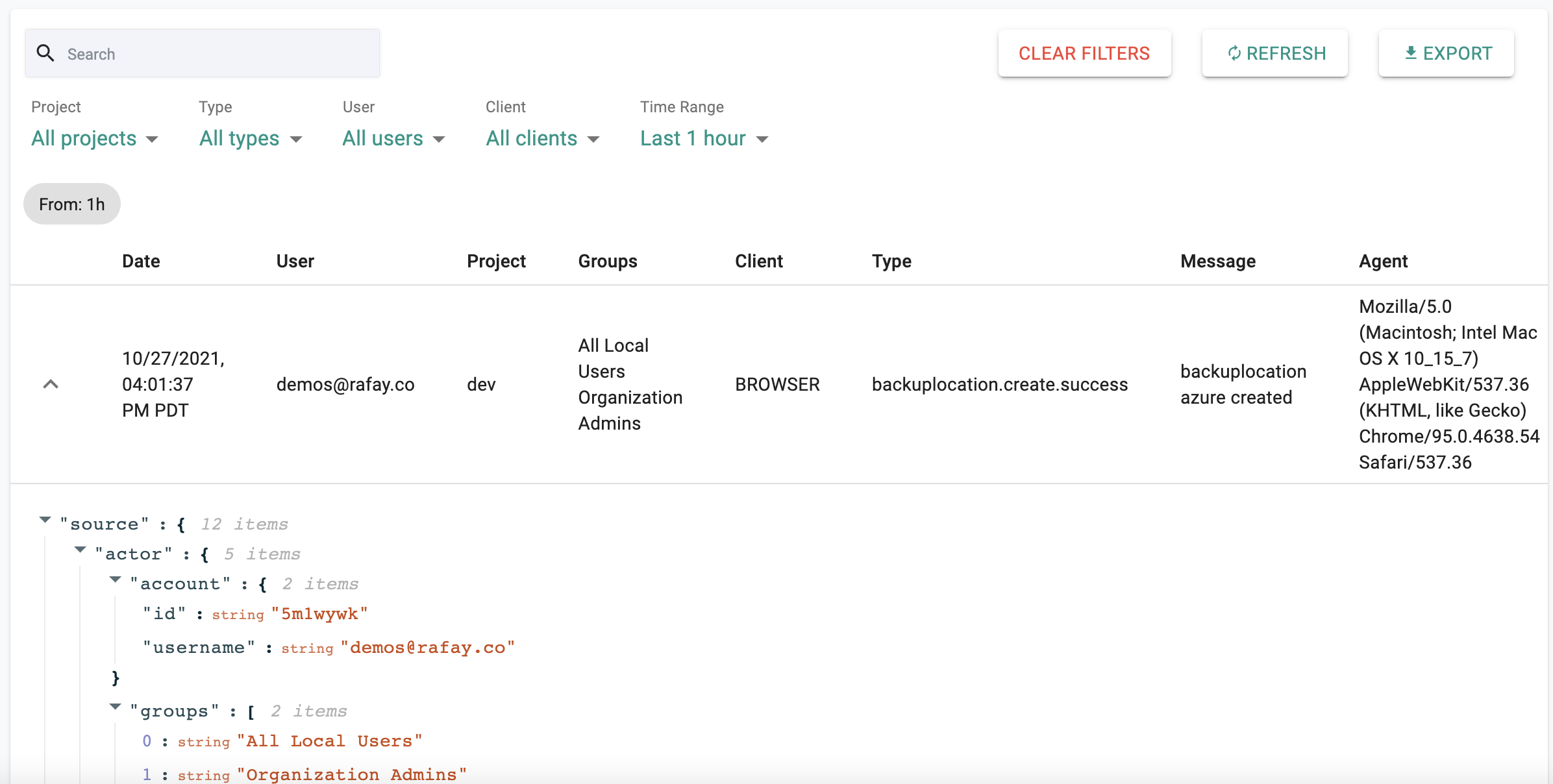

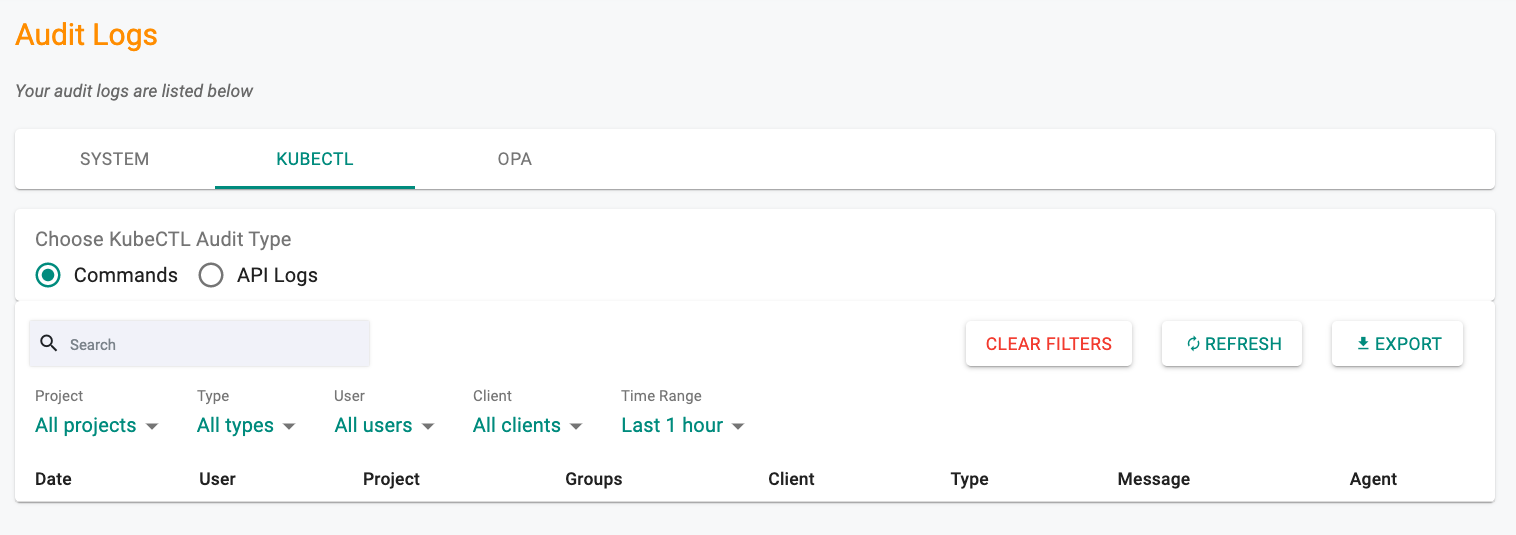

Audit Logs¶

Expandable Column with JSON¶

Admins can now quickly view the entire JSON payload for an audit record allowing them to view all the metadata associated with it.

Swagger API¶

Swagger compliant REST APIs are now available for operations associated with namespaces, pipelines and cluster overrides.

v1.6.0¶

Oct 1, 2021

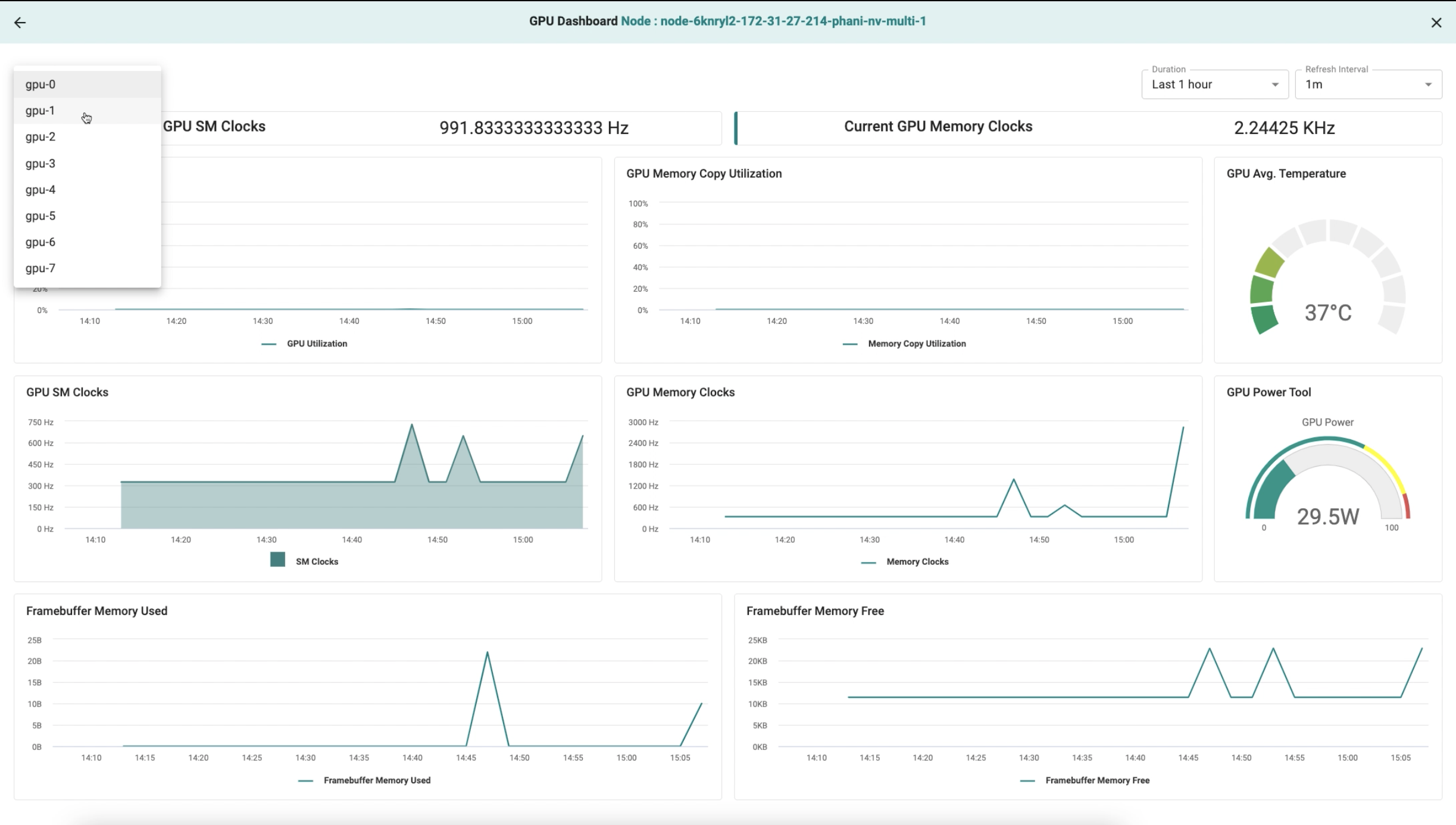

GPU Dashboards¶

GPU metrics are automatically scraped and aggregated in the controller’s time series database. GPU metrics are presented as integrated dashboards available on the console.

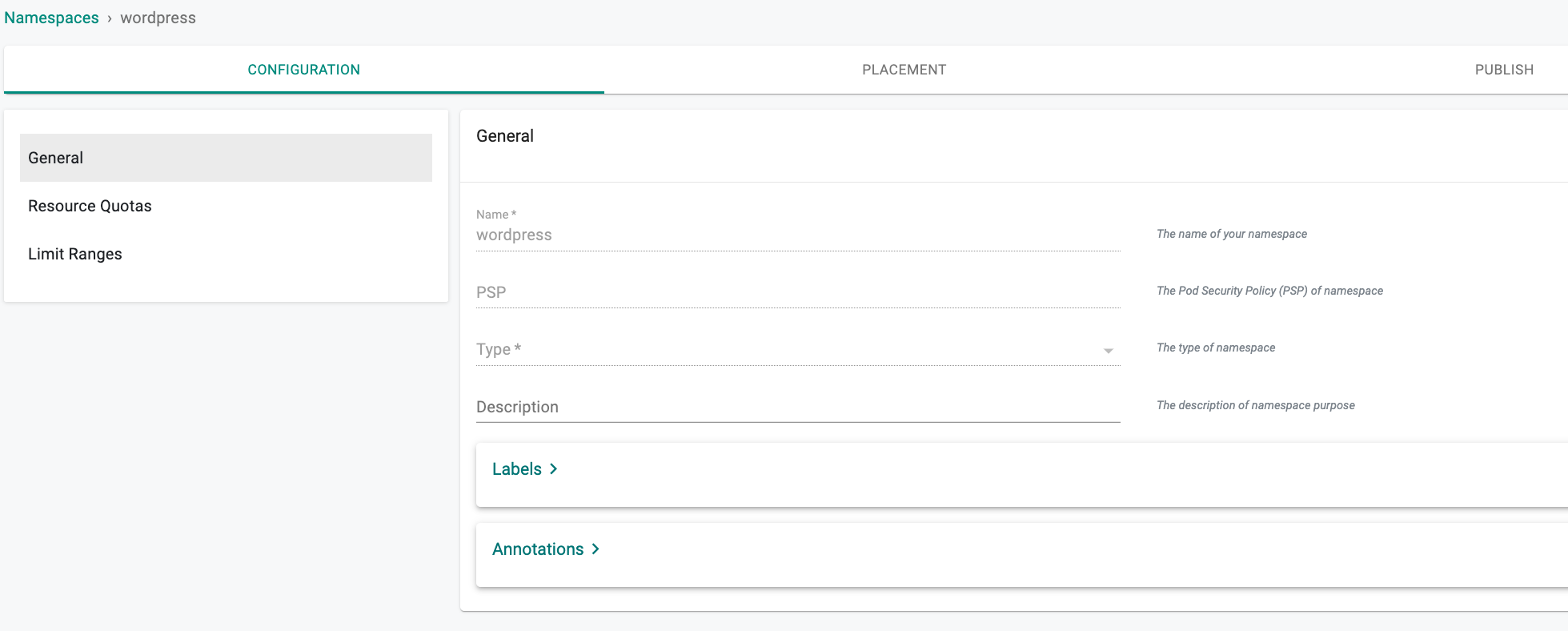

Fleet Operations for Namespaces¶

Perform multi-cluster fleet operations on namespaces, eliminating the operational burden of having to manage them one cluster at a time. Configure and enforce critical namespace attributes such as labels, annotations, resource quotas and limit ranges using an intuitive wizard.

Security & Governance¶

Policy Management¶

Organizations need to ensure that their fleet of clusters is in compliance with company policies, either to meet governance and legal requirements or to enforce best practices and organizational conventions. The controller now provides turnkey support for managed OPA Gatekeeper, providing enterprise grade workflows to centrally configure, deploy and enforce security policies.

Idle Timer Auto Logout¶

Org administrators can now specify idle timer thresholds that automatically log out users after a configured number of minutes of inactivity.
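The idle timer behavior can be pictured as a comparison between the time of the last user activity and the configured threshold. The sketch below is illustrative only and is not the controller's implementation; the `IdleTimer` class is hypothetical, and the clock is injectable so the logic can be exercised without waiting.

```python
import time


class IdleTimer:
    """Hypothetical idle-timeout tracker for a single user session."""

    def __init__(self, idle_minutes: float, clock=time.monotonic):
        self.idle_seconds = idle_minutes * 60  # org-wide threshold, in seconds
        self.clock = clock
        self.last_activity = clock()

    def touch(self) -> None:
        """Record user activity, which resets the idle countdown."""
        self.last_activity = self.clock()

    def should_logout(self) -> bool:
        """True once the session has been idle for at least the threshold."""
        return self.clock() - self.last_activity >= self.idle_seconds
```

A monotonic clock is used so that wall-clock adjustments cannot shorten or extend a session unexpectedly.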

Cluster Admin Role¶

A new cluster admin role is now available to achieve separation of duties with infrastructure operations. Users with the cluster admin role can manage the lifecycle of Kubernetes clusters, but do not have access to privileged operations such as lifecycle management of addons, cluster blueprints and cloud credentials.

Base Blueprint¶

Admins can build custom cluster blueprints using one of the out-of-the-box blueprints as the base blueprint.

Blueprint Enforcement¶

Organization admins can now disable the use of the default, out-of-the-box blueprints org-wide. This forces projects to use only custom blueprints for their clusters.

Blueprint Drift Controls¶

Instead of the default behavior of detecting and blocking drift in k8s resources associated with cluster blueprints and namespaces, fine-grained controls are now available for admins to select between three options: Ignore, Detect/Report or Detect/Block.
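The three drift options differ only in what happens after a change is compared with the managed state. The sketch below models that decision for a single resource, assuming the managed (desired) spec and the proposed out-of-band change are both available as flat dicts. `DriftAction` and `handle_change` are hypothetical names, not the controller's API.

```python
from enum import Enum


class DriftAction(Enum):
    IGNORE = "Ignore"
    DETECT_REPORT = "Detect/Report"
    DETECT_BLOCK = "Detect/Block"


def drifted_keys(desired: dict, proposed: dict) -> list:
    """Keys whose values differ between the managed and proposed state."""
    return sorted(k for k in desired.keys() | proposed.keys() if desired.get(k) != proposed.get(k))


def handle_change(action: DriftAction, desired: dict, proposed: dict):
    """Return (allowed, drift) for an out-of-band change to a managed resource.

    drift is None when nothing is reported, otherwise the list of drifted keys.
    """
    drift = drifted_keys(desired, proposed)
    if not drift or action is DriftAction.IGNORE:
        return True, None
    if action is DriftAction.DETECT_REPORT:
        return True, drift  # change goes through, but is reported
    return False, drift  # Detect/Block: change is rejected and reported
```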

Private Kubectl Proxy¶

Organizations that operate in highly regulated environments can now optionally deploy and operate private proxy networks for zero trust kubectl on their clusters ensuring that this traffic is kept on their networks. This option enables organizations to benefit from being able to use the SaaS controller as the organization-wide orchestration and management platform and still remain in compliance with regulatory requirements.

Kubeconfig for API Users¶

API-only users with a namespace admin role can now download, with the click of a button, a kubeconfig for this RBAC’ed user to interact with remote k8s clusters using the zero trust kubectl channel. This is particularly useful for applications (e.g. CI systems like Jenkins) that need to interact with remote clusters using kubectl.

Kubectl Command Logs¶

In addition to the audit trail of all Kube API calls, an audit trail of the high level Kubectl commands initiated by authorized users on the web based Kubectl shell is now available as well.

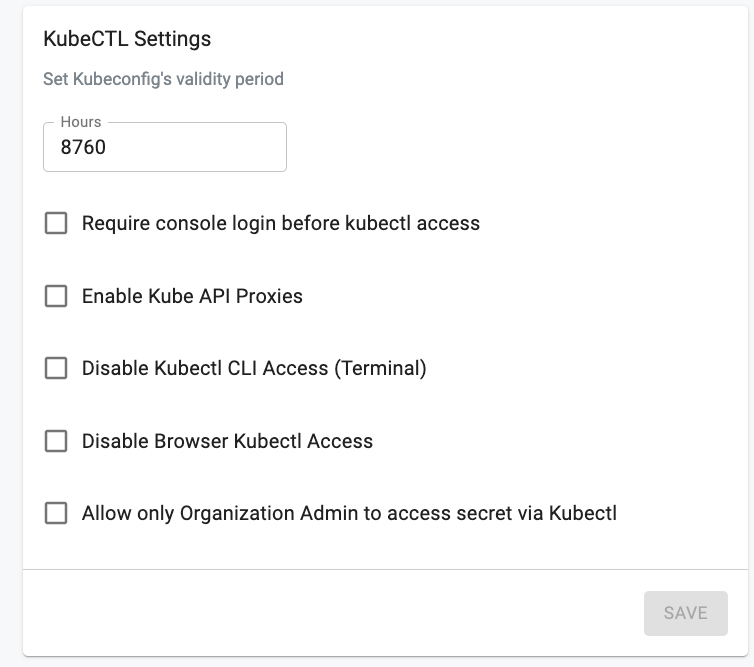

Fine Grained Kubectl Controls¶

Org Administrators are provided additional fine-grained controls. They can now:

- Restrict viewing and management of k8s secrets on clusters to users with an Org Admin role,

- Enable/Disable Kubectl CLI based access org-wide and

- Enable/Disable browser based Kubectl access org-wide.

GitOps¶

2-Way Sync with Git Repo¶

Users now have the option to enable "2-way sync" between their clusters and their Git repos. Whenever the user makes changes in the Git repo, these are automatically propagated to the clusters and vice versa. 2-way synchronization is based on Kubernetes style reconciliation of state, i.e. changes are compared to the persisted object's declarative state and the appropriate action is taken.
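One way to reason about 2-way sync is a three-way comparison per object: the state recorded at the last successful sync, the state in Git, and the state on the cluster. The sketch below assumes such a recorded base exists; the release note does not say how the controller stores state or resolves simultaneous edits, so the "conflict" outcome and the `sync_direction` helper are hypothetical.

```python
def sync_direction(base, git, cluster) -> str:
    """Decide how to reconcile one object in a hypothetical 2-way sync.

    base:    declarative state recorded at the last successful sync
    git:     state currently in the Git repo
    cluster: state currently on the cluster
    """
    git_changed = git != base
    cluster_changed = cluster != base
    if git_changed and cluster_changed:
        # Both sides moved; only safe if they moved to the same state.
        return "in-sync" if git == cluster else "conflict"
    if git_changed:
        return "apply-to-cluster"  # Git -> cluster
    if cluster_changed:
        return "commit-to-git"  # cluster -> Git
    return "in-sync"
```

Comparing against a recorded base is what lets the reconciler tell which side changed; a plain two-way diff of Git and cluster would only show that they differ.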

Workloads¶

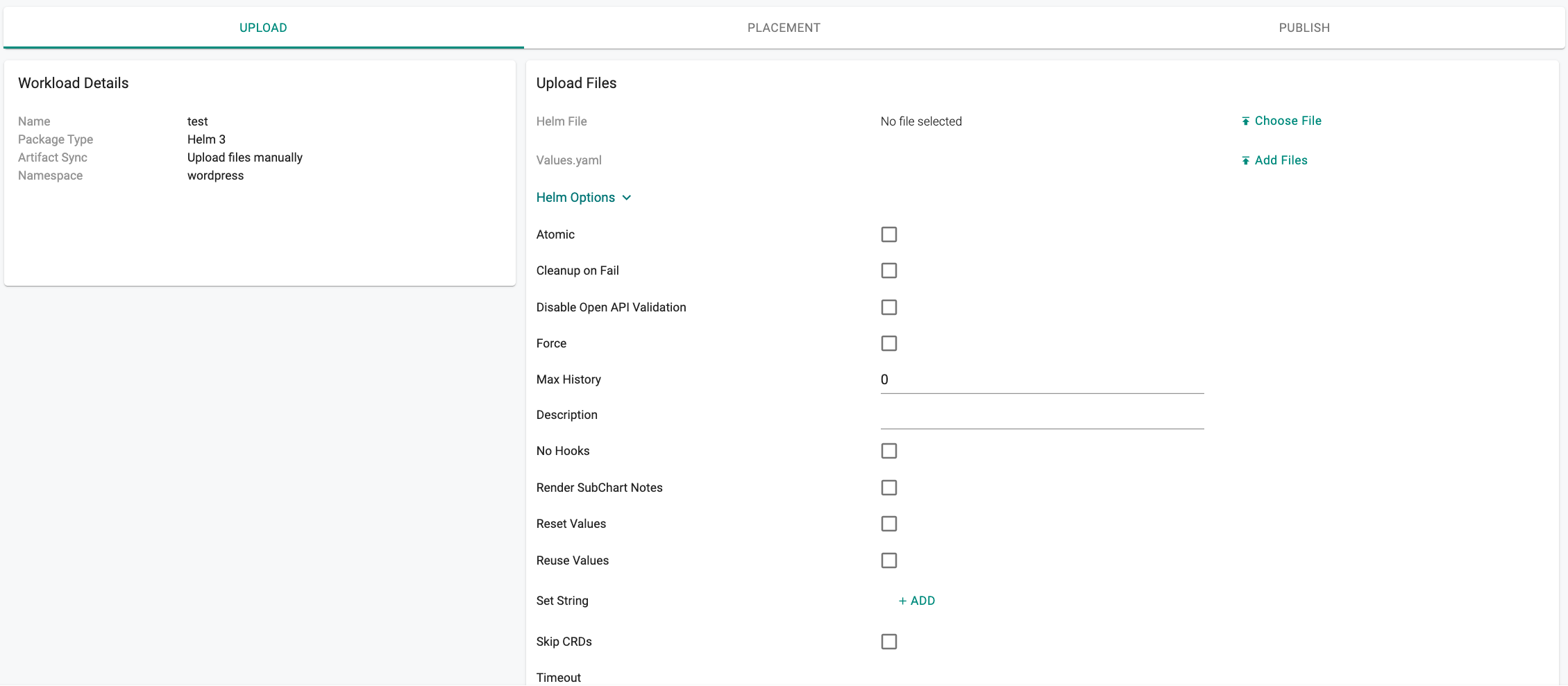

Advanced Helm Settings¶

Advanced Helm options are now available for Helm 3 based workloads. Users can now configure advanced settings such as “wait”, “timeouts”, “execute chart hooks” etc.

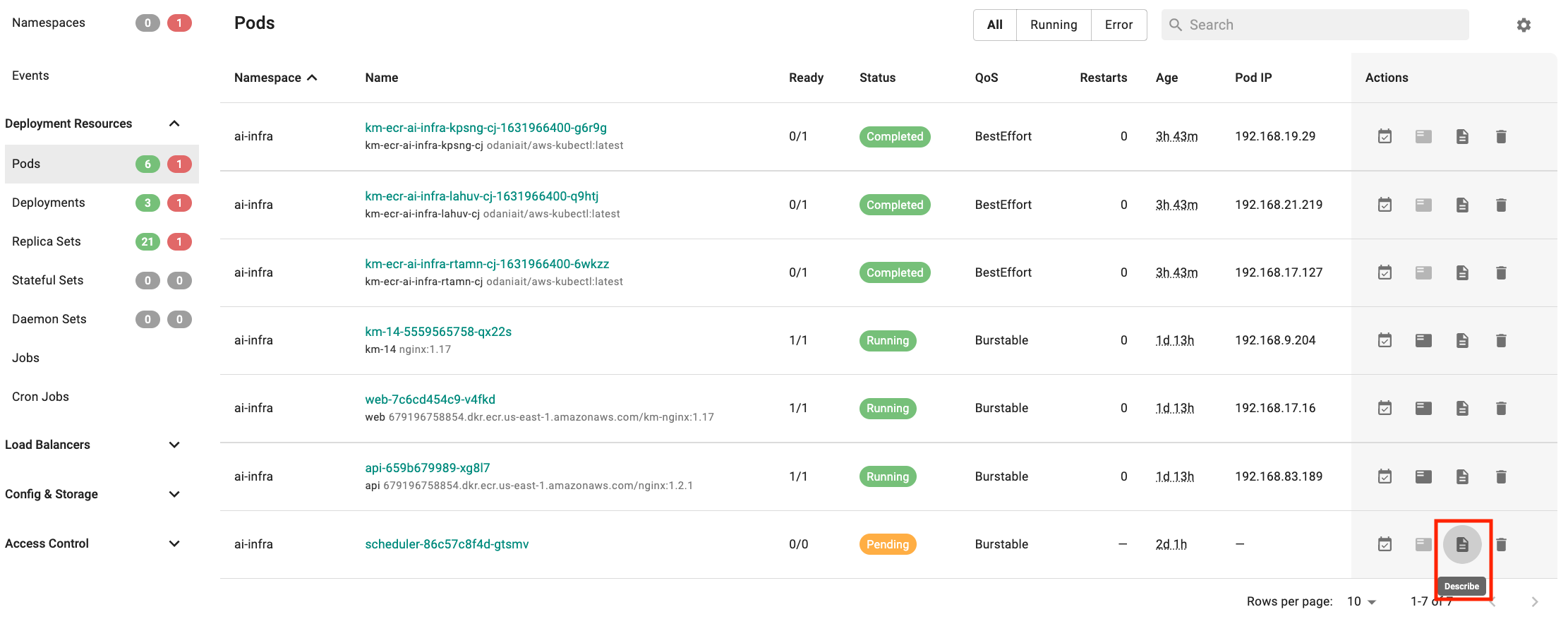

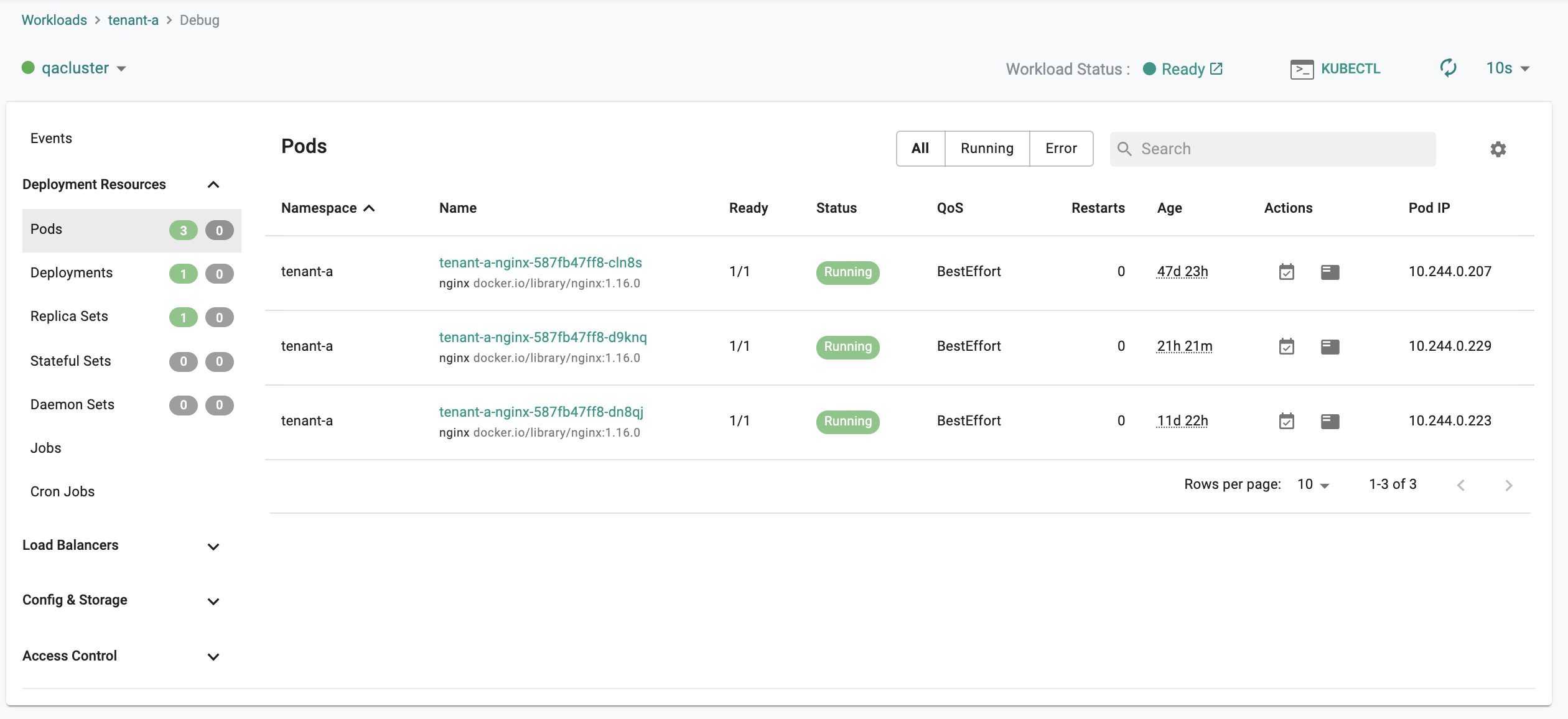

Troubleshooting¶

To enable faster troubleshooting of issues such as misconfigured k8s resources, authorized developers and cluster admins can now “describe and view” Kubernetes pods with the click of a button via the console.

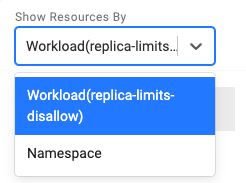

Authorized developers and cluster admins can now “delete” Kubernetes pods with the click of a button via the console. In addition to the default view of the workload’s k8s resources pre-filtered by an automatically injected workload label, workload administrators can now also optionally view the resources by namespace.

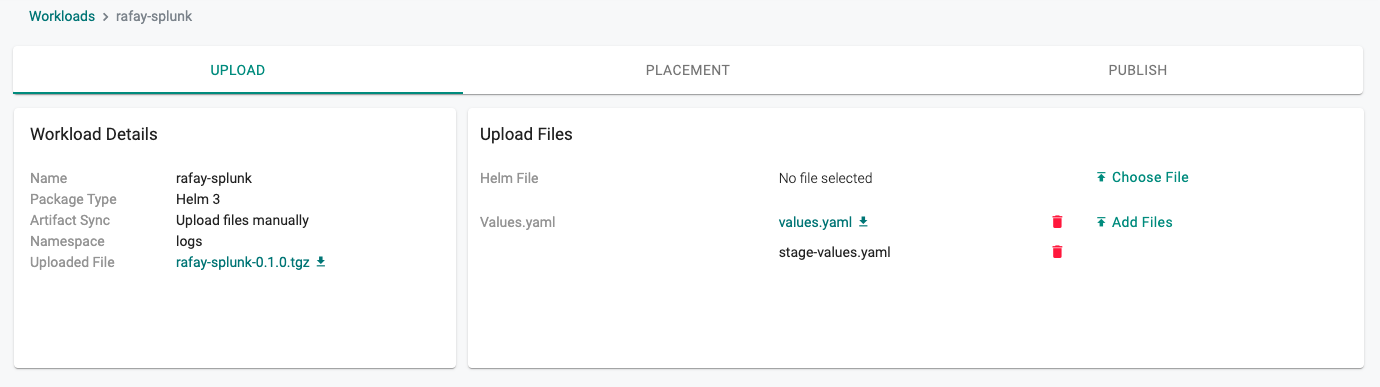

Multiple Values¶

Developers can now specify multiple values.yaml files for Helm workloads based on a Git repository.
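When Helm receives several values files, the rightmost file takes precedence: nested maps are merged key by key, while scalars and lists from a later file replace earlier ones. The sketch below approximates that merge for plain dicts; it does not model Helm's `null` key deletion, and `merge_values` is a hypothetical helper rather than part of Helm or the controller.

```python
import copy


def merge_values(*values_files: dict) -> dict:
    """Merge values dicts left to right; later files win, maps merge recursively."""
    result: dict = {}
    for values in values_files:
        _merge_into(result, values)
    return result


def _merge_into(dst: dict, src: dict) -> None:
    for key, value in src.items():
        if isinstance(value, dict) and isinstance(dst.get(key), dict):
            _merge_into(dst[key], value)  # merge nested maps key by key
        else:
            dst[key] = copy.deepcopy(value)  # scalars and lists are replaced


base = {"image": {"repository": "nginx", "tag": "1.21"}, "replicas": 1}
prod = {"image": {"tag": "1.22"}, "replicas": 3}
print(merge_values(base, prod))  # {'image': {'repository': 'nginx', 'tag': '1.22'}, 'replicas': 3}
```

The deep copy keeps the input dicts unchanged, so the same base values can be reused for several environments.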

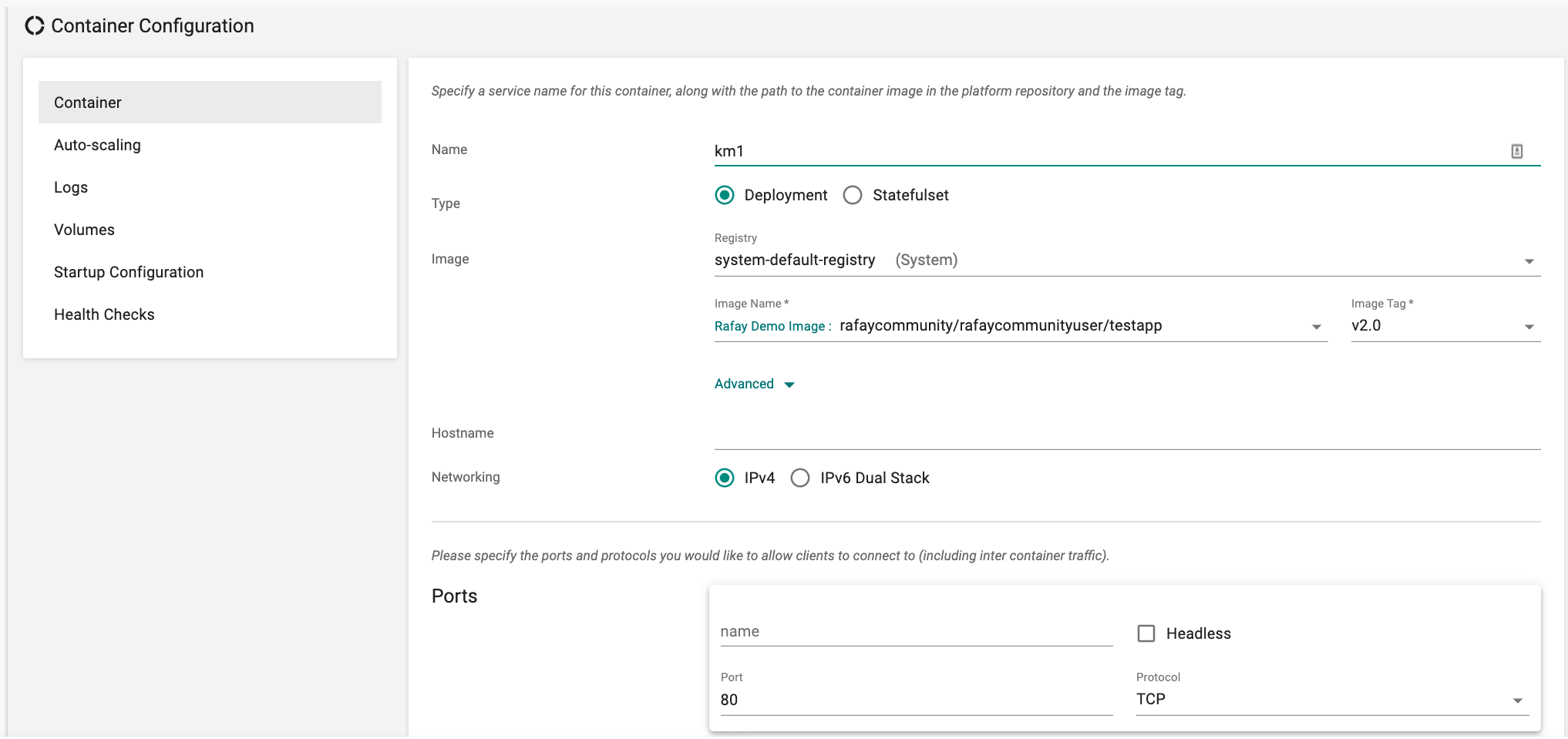

IPv6 for Workload Wizard¶

Developers using the workload wizard can specify support for IPv6 (dual stack) for their containers.

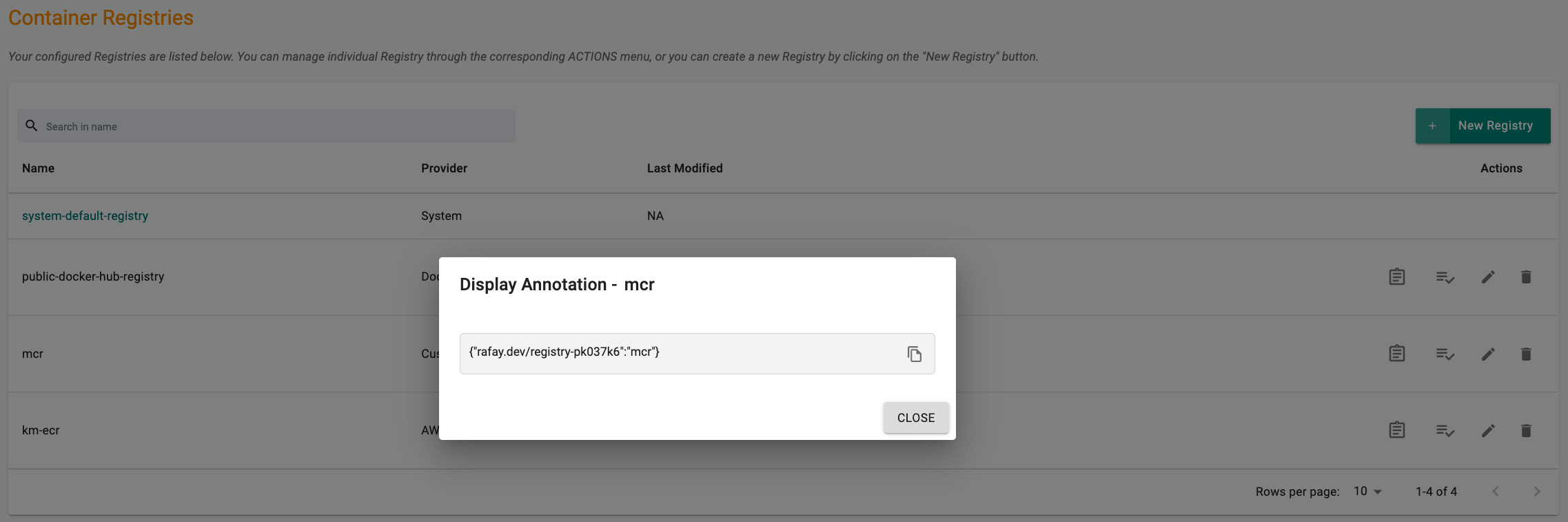

Annotations for Integrations¶

Developers are now provided with a copy/paste experience for the annotations required by configured registry integrations. This dramatically reduces the associated learning curve and removes the need to troubleshoot and resolve issues caused by misconfigured annotations.

Amazon EKS¶

K8s Upgrade Optimizations¶

The k8s upgrade workflow for EKS clusters with self managed node groups has been optimized to complete significantly faster and without requiring additional resources for pods.

Fully Private Control Plane¶

EKS clusters can now be provisioned with a fully private control plane ensuring that it is never public at any time during its life.

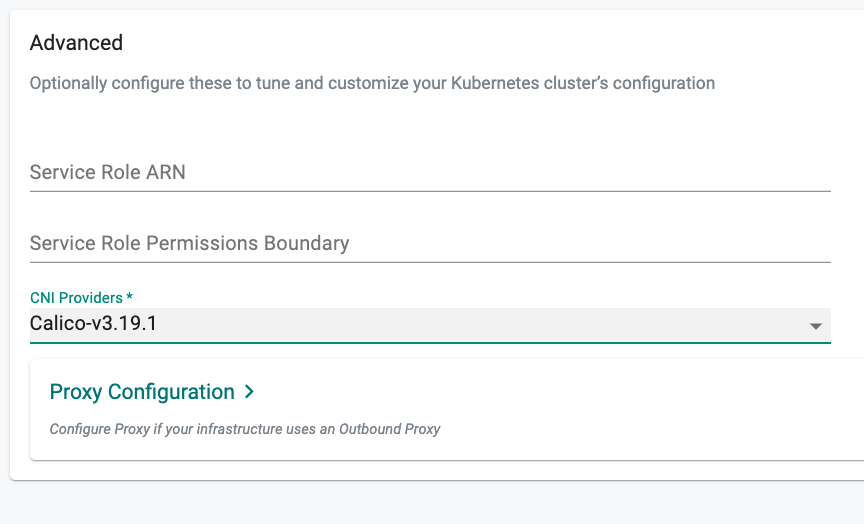

Alternate CNI¶

Administrators can now specify an alternate CNI (e.g. Calico) during EKS cluster provisioning.

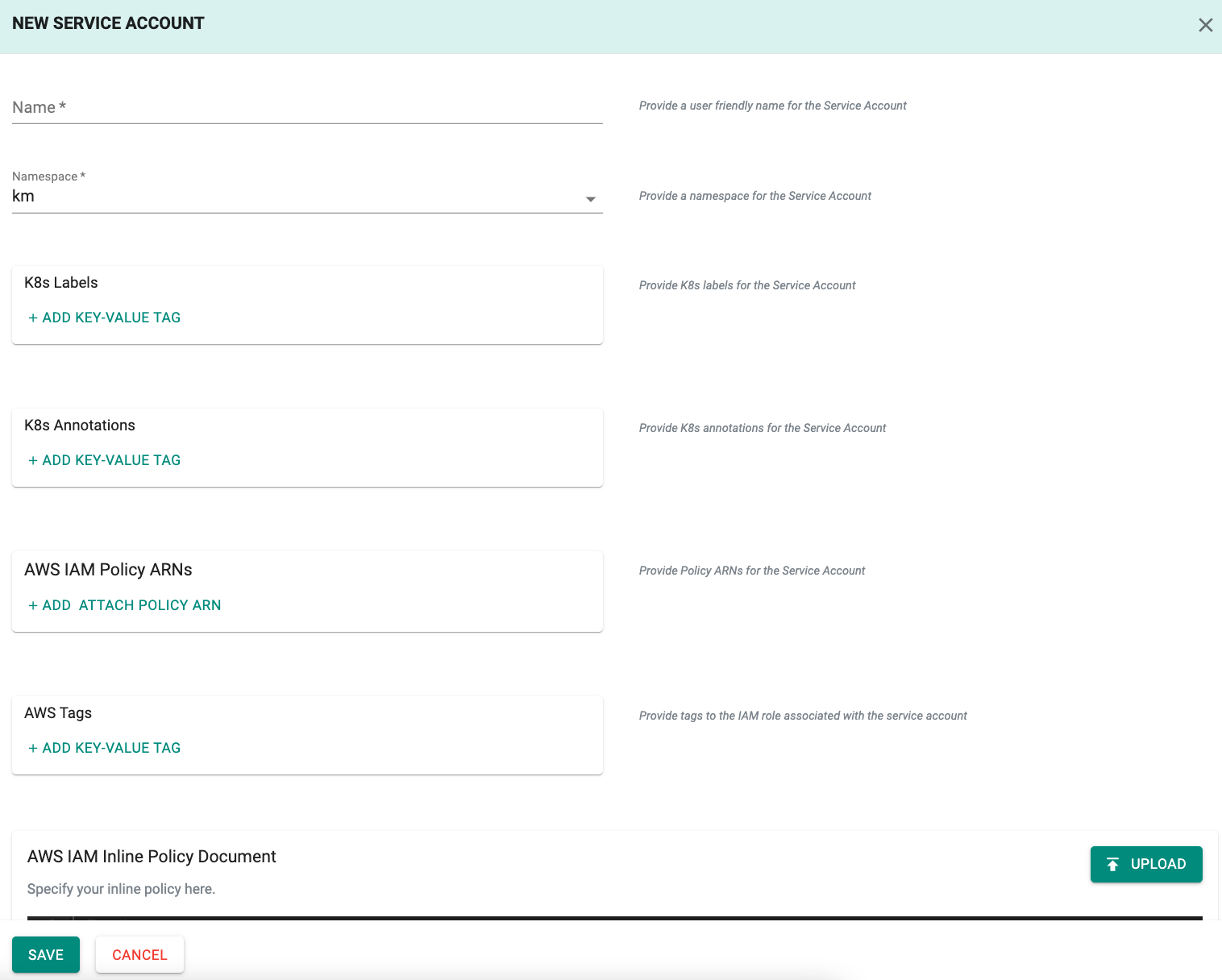

Workflows for IRSA¶

IRSA allows the association of an AWS IAM role with a Kubernetes service account. This service account can then provide the necessary AWS permissions to the containers in any pod that uses that service account. With IRSA, it is not necessary to grant extended permissions to the Amazon EKS node IAM role so that pods on that node can call AWS APIs.

Intuitive workflows for full lifecycle management of IRSAs are now available in the web console right on the provisioned EKS Cluster (in addition to the RCTL CLI and APIs).
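On the Kubernetes side, IRSA comes down to a ServiceAccount annotated with `eks.amazonaws.com/role-arn`. Pods that set `serviceAccountName` to it receive credentials for that role, provided the role's trust policy trusts the cluster's OIDC provider. The sketch builds such a manifest as a dict and prints it as JSON (which kubectl also accepts). The account ID, role name and namespace are placeholders, and `irsa_service_account` is a hypothetical helper.

```python
import json

IRSA_ANNOTATION = "eks.amazonaws.com/role-arn"


def irsa_service_account(name: str, namespace: str, role_arn: str) -> dict:
    """Build a ServiceAccount that EKS associates with an IAM role via IRSA."""
    return {
        "apiVersion": "v1",
        "kind": "ServiceAccount",
        "metadata": {
            "name": name,
            "namespace": namespace,
            "annotations": {IRSA_ANNOTATION: role_arn},
        },
    }


# Placeholder values: the account ID and role name are hypothetical.
sa = irsa_service_account("s3-reader", "demo", "arn:aws:iam::111122223333:role/s3-reader")
print(json.dumps(sa, indent=2))
```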

K8s 1.21¶

Existing controller provisioned and managed EKS clusters can be seamlessly upgraded to k8s 1.21. New EKS clusters can now be provisioned based on k8s 1.21.

Infra GitOps¶

The RCTL CLI based “rctl apply -f” workflow now supports:

- Per nodegroup upgrades

- Changes in labels and tags

- Changes in logging (audit, api, scheduler, controller manager, authenticators)

Upstream Kubernetes¶

ARM Worker Nodes¶

Administrators can now add ARM64 CPU based worker nodes to controller managed, upstream Kubernetes clusters.

Zero Trust Host Access¶

Management of a large, remote distributed fleet of upstream Kubernetes clusters can be operationally challenging. Administrators may need to frequently perform “node OS level” operations to keep the fleet up to date and healthy. For example, they may need to remotely install/update a device driver on a remote node OS that is operating behind a firewall.

Remote access to the nodes via inbound access mechanisms such as SSH and VPNs may be impossible or at best operationally cumbersome. For scenarios like this, Swagger-compliant APIs for zero trust host access are now available. Authorized users can use them to send/receive commands to nodes in the fleet of managed, upstream Kubernetes clusters. A full audit trail of API usage is maintained on the controller and can be retrieved via REST APIs for forensic and compliance purposes.

Azure AKS¶

Lifecycle Management¶

Administrators can now provision and manage Azure AKS Kubernetes clusters using the controller. In addition to the self service web console, they also have options for full lifecycle automation using the RCTL CLI and REST APIs.

AKS Blueprint¶

A default cluster blueprint tuned and optimized for AKS is now available.

RedHat OpenShift¶

OpenShift Blueprint¶

A default cluster blueprint tuned and optimized for OpenShift Container Platform environments is now available.

Backup/Restore Automation¶

Full automation of the entire backup/restore lifecycle using the RCTL CLI.

Alerts & Notifications¶

Force Close Alerts¶

Authorized administrators can now manually force close alerts for scenarios that are not recoverable.

User Management¶

Sort¶

The user list can now be sorted by "username" and "last access time" to help admins streamline user search.

Bug Fixes¶

| Bug ID | Description |
|---|---|
| RC-10849 | Nodegroup upgrade pre-flight check failure leaves nodes in SchedulingDisabled state |
| RC-10835 | IdP users who have Project/Infra Admin roles for a project cannot see the list of alerts from the cluster card |
| RC-11099 | Display the blueprint version applied to the cluster when the cluster is imported via an RCTL cluster spec file |
| RC-11149 | Managed fluentd system addon throwing pattern matching errors |
| RC-10944 | If a user is added to an organization for the first time and later removed from that org, the user cannot be added back to that org |
| RC-10838 | For Helm 3 workloads, the republish button is grayed out when only the values file is modified and uploaded |
| RC-10801 | When using multiple values files for a Helm deployment, files with the same name overwrite each other with the same contents |
| RC-10772 | When a Vault integration is deleted on a cluster, workload publish gets stuck in progress forever while waiting for auxiliary tasks |
| RC-10902 | When an addon is removed from the blueprint, the versions of all addons after the deleted addon change to empty in the UI |
| RC-10494 | Proper error message when creating a registry with invalid characters |
| RC-10795 | rctl apply for EKS cluster returns RC=0 for validation errors |
| RC-9695 | Namespace -> When creating a namespace that exceeds the maximum number of characters, the interface doesn't indicate which field is breaking the rule |
| RC-9704 | "Communication lost" message remains open after the connection is re-established |
| RC-9635 | Right after a workload is published, the events in Cluster Dashboard > Resource > Namespace show an invalid "Age" of "-1y-1d" for the first minute |
| RC-10839 | Same User Multi Org Access Issue |
| RC-10077 | Unable to restore the default approval window (forever) once a specific approval window has been configured |
| RC-9691 | Infrastructure -> PSP -> When the name field length is exceeded, no error is raised |
| RC-8407 | Inconsistent data shown in Rafay for CPU and memory resources of the node |
| RC-8350 | Improve overall page loading performance for Web Console |
| RC-7699 | Workload publish in progress for a long time for statefulset YAML workload with long container name |

v1.5.3¶

25 June, 2021

Upstream Kubernetes¶

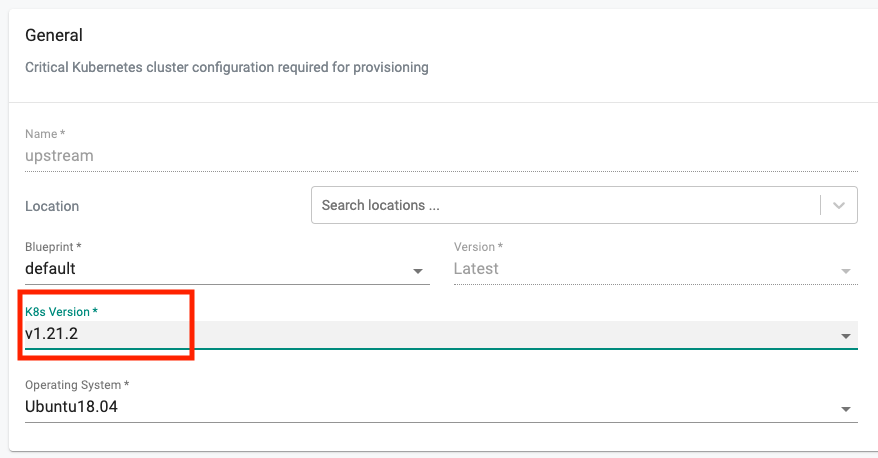

New Kubernetes Versions¶

In addition to Kubernetes 1.18.x, 1.19.x and 1.20.x, customers can now provision clusters based on Kubernetes 1.21.x. Existing upstream Kubernetes clusters running older versions of Kubernetes can be seamlessly upgraded "in-place" to 1.21.x.

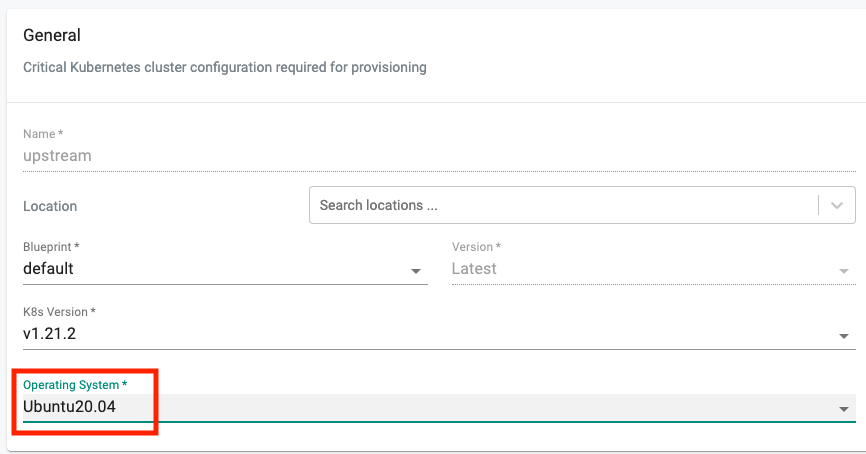

Operating Systems¶

Customers can now provision upstream Kubernetes clusters on instances with Ubuntu 20.04 LTS operating system.

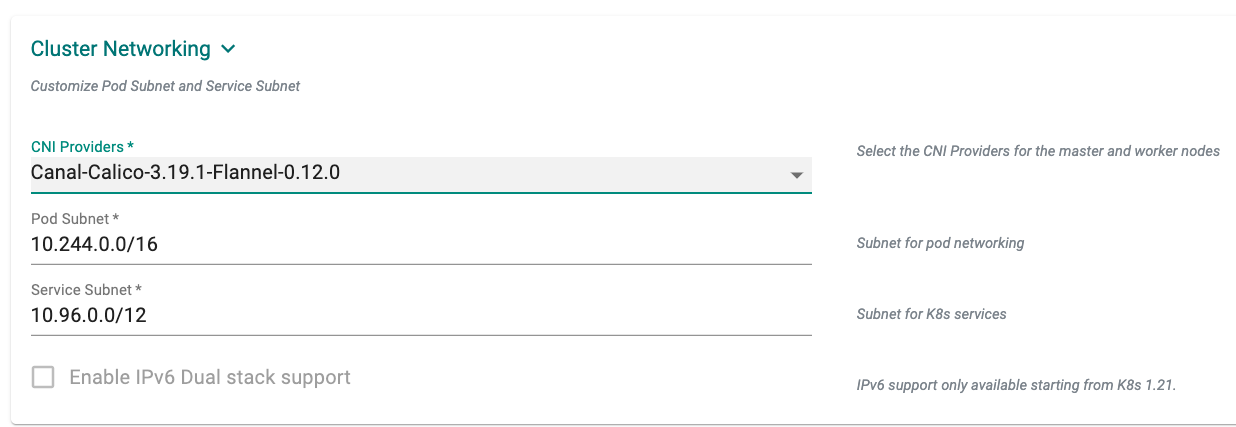

Select Container Network Interface (CNI)¶

Users that wish to override the default CNI and select one from the list of supported CNIs can make this selection during cluster provisioning.

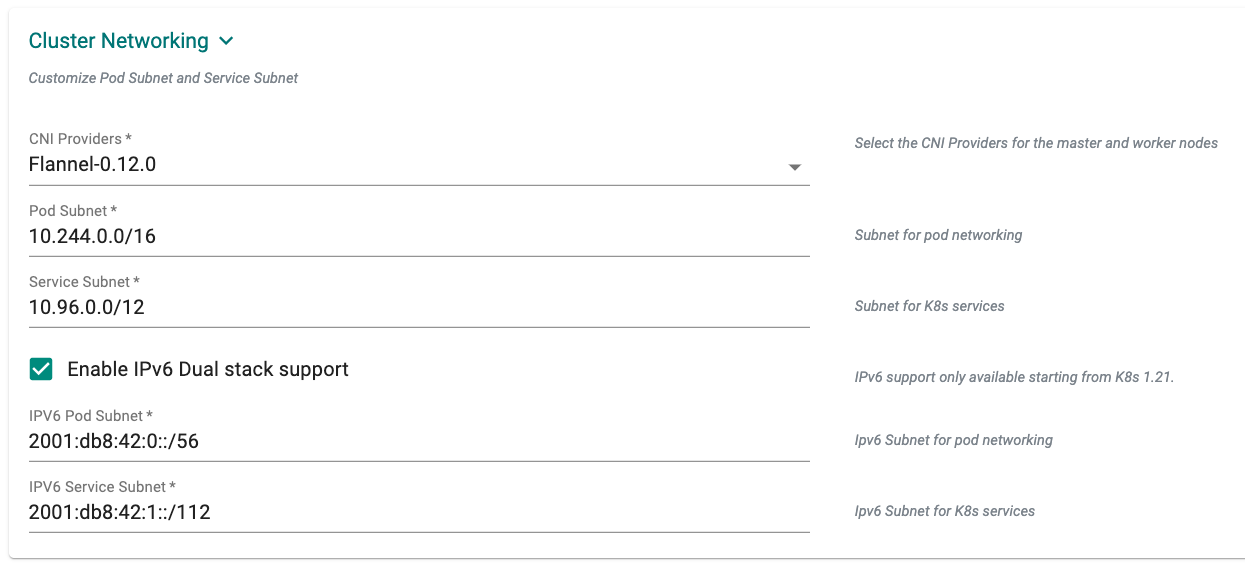

IPv4/6 Dual Stack Support¶

Users can enable IPv4/IPv6 dual-stack networking on Kubernetes v1.21 or higher. This allows the simultaneous assignment of both IPv4 and IPv6 addresses to Pods and Services.
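At the workload level, a Service on a dual-stack cluster (v1.21 or higher) asks for addresses from both families through the `ipFamilyPolicy` and `ipFamilies` fields of its spec. The sketch below builds such a Service as a dict; the name, port and selector are placeholders, and `dual_stack_service` is a hypothetical helper rather than part of the workload wizard.

```python
def dual_stack_service(name: str, port: int, selector: dict) -> dict:
    """Build a Service spec requesting both IPv4 and IPv6 cluster IPs."""
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name},
        "spec": {
            # PreferDualStack falls back to a single family on a single-stack
            # cluster; RequireDualStack would fail there instead.
            "ipFamilyPolicy": "PreferDualStack",
            "ipFamilies": ["IPv4", "IPv6"],  # first entry is the primary family
            "selector": selector,
            "ports": [{"port": port, "protocol": "TCP"}],
        },
    }
```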

Amazon EKS¶

Graviton 2 ARM based nodes¶

Users can now provision and manage node groups based on AWS's Graviton 2 ARM based processors.

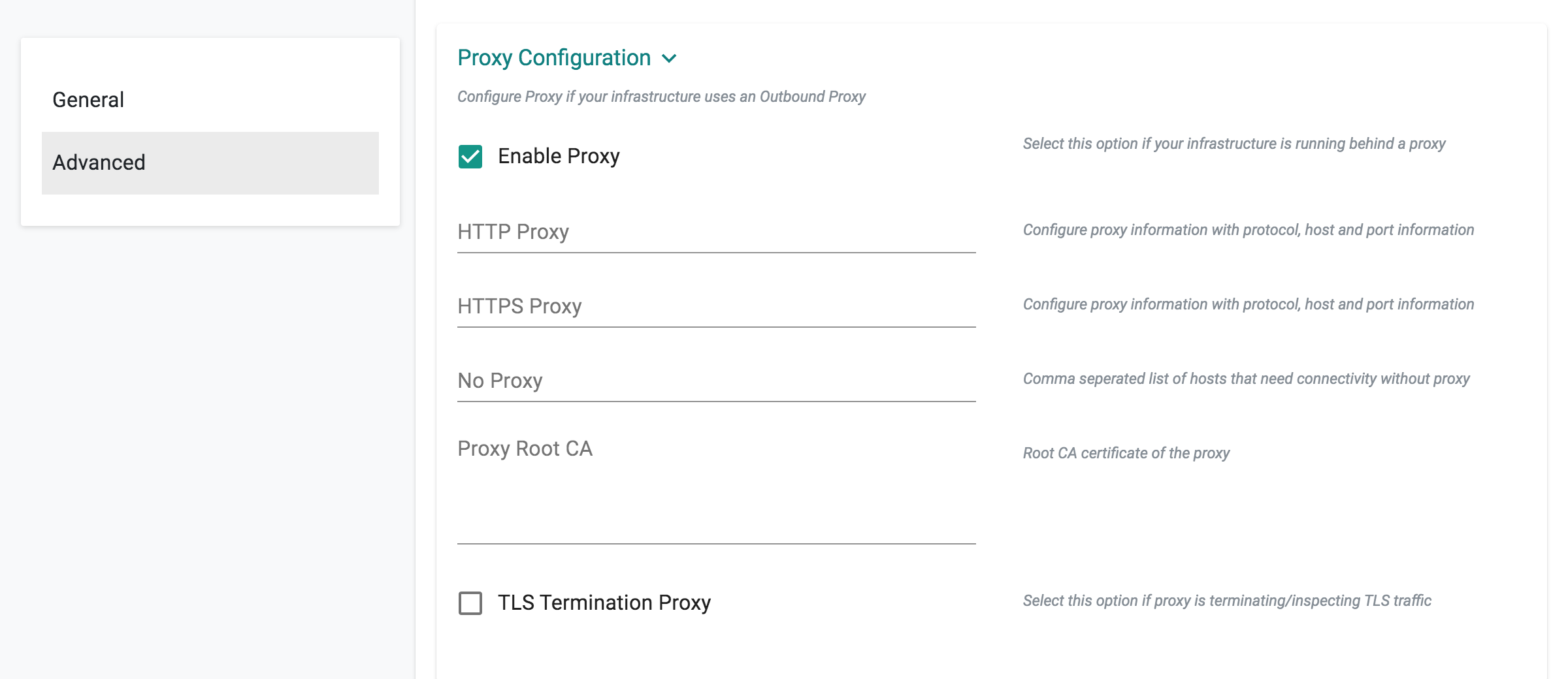

Forward Proxy¶

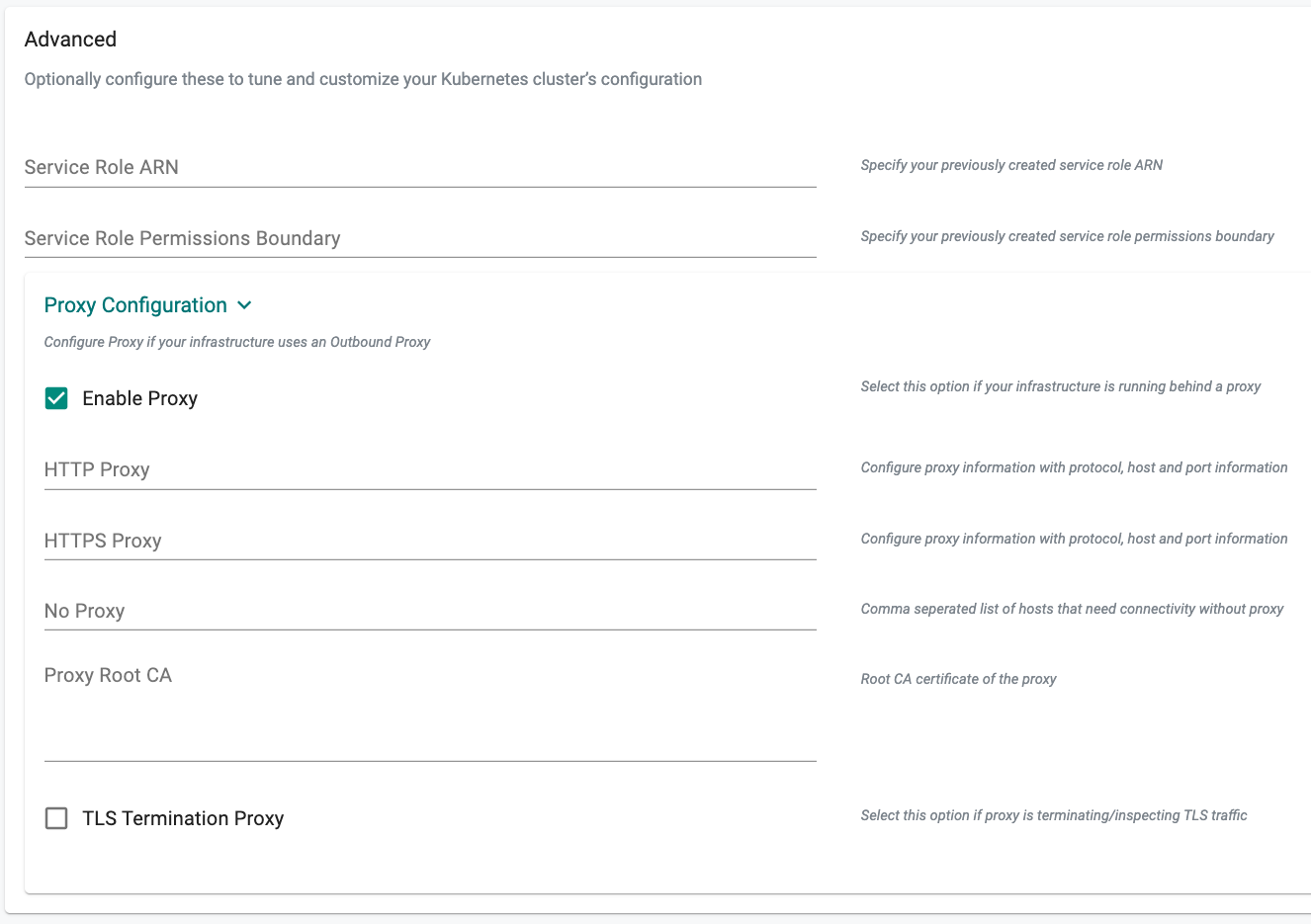

Organizations that are required to use a forward proxy for all outbound connections from their EKS clusters (e.g. to the SaaS controller) can now configure and enable this during cluster provisioning.

Support for k8s 1.20¶

Users can now provision EKS clusters based on Kubernetes 1.20 and also seamlessly upgrade their existing fleet to Kubernetes 1.20.

Infra GitOps¶

Users can now upgrade their EKS clusters to a new version of Kubernetes by simply updating the cluster specification file in their Git repo.

Scale to Zero¶

Organizations can now "scale down" their EKS clusters to "zero" worker nodes when not in use to save costs. The worker nodes can be "scaled up" anytime.

Zero Trust Kubectl (ZTKA)¶

Users with "Read Only" roles are now blocked from being able to perform any operations (GET, LIST, VIEW) on Kubernetes secrets on remote clusters.

Workload Wizard¶

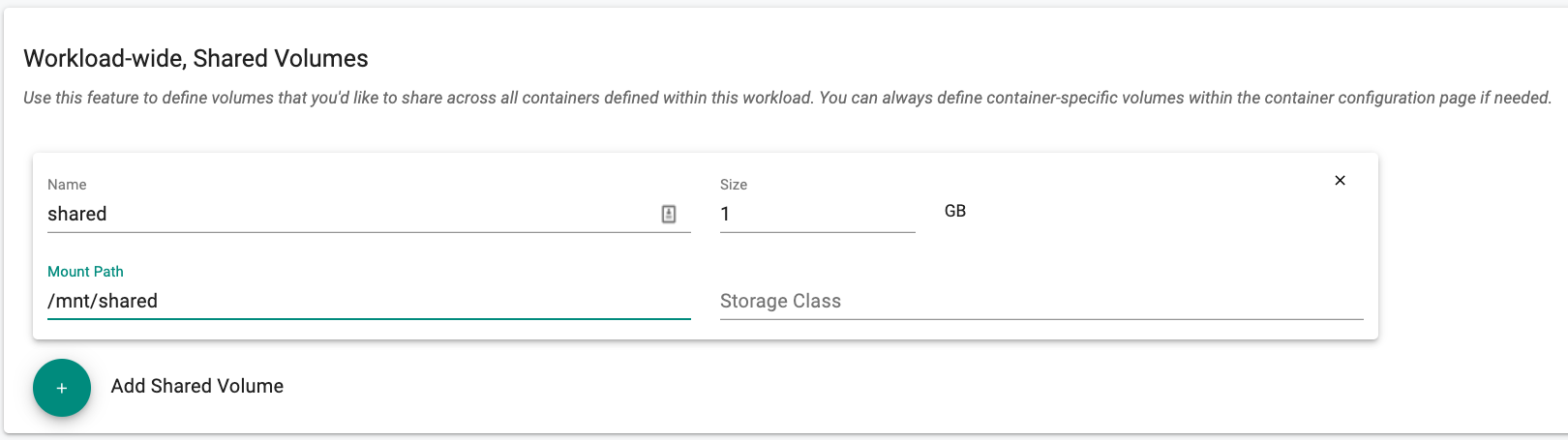

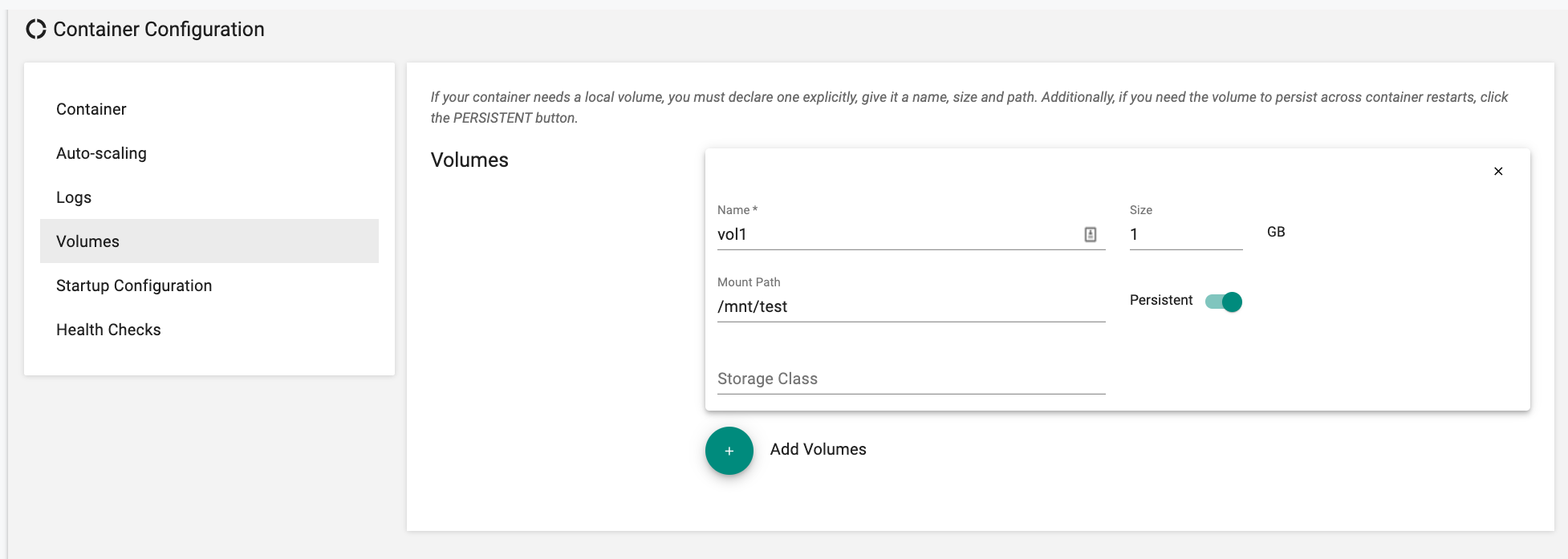

Users of the workload wizard can now specify the storage class for both "shared workload wide volumes" as well as "container volumes".

Workload Wide Shared volumes¶

Container Volumes¶

RCTL CLI¶

Enhancements to RCTL CLI focused on automation.

Project Lifecycle¶

Users with Org Admin roles can now use the RCTL CLI to "Create", "Read", "Update" and "Delete" Projects in their Orgs.

Credential Provider Lifecycle¶

Authorized users can now use the RCTL CLI to "Create", "Read", "Update" and "Delete" Cloud Credentials in their Projects.

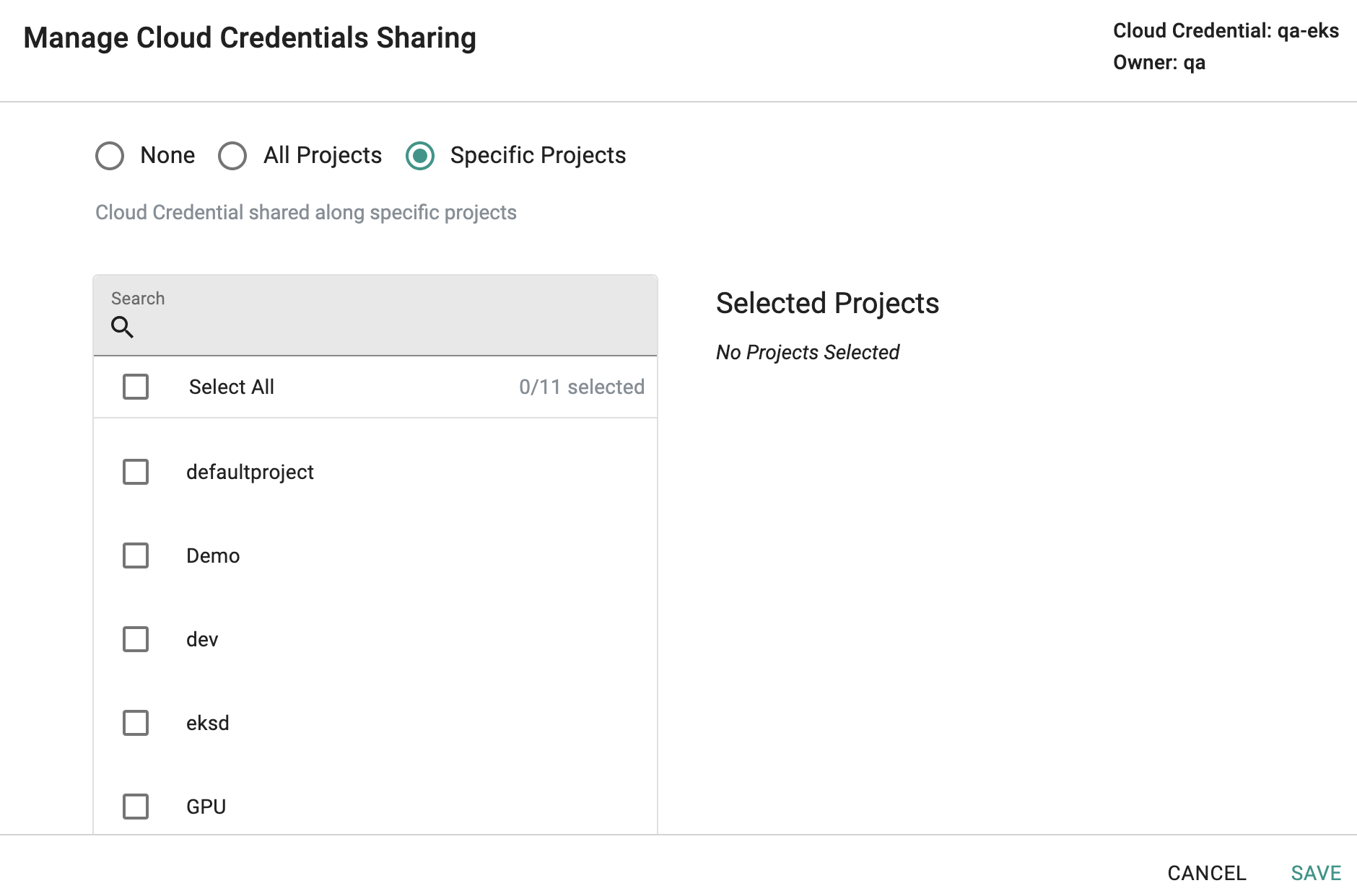

Resource Sharing¶

Authorized users can now use the RCTL CLI to "Share" resources in their projects with "selected" or "all" projects in the Org.

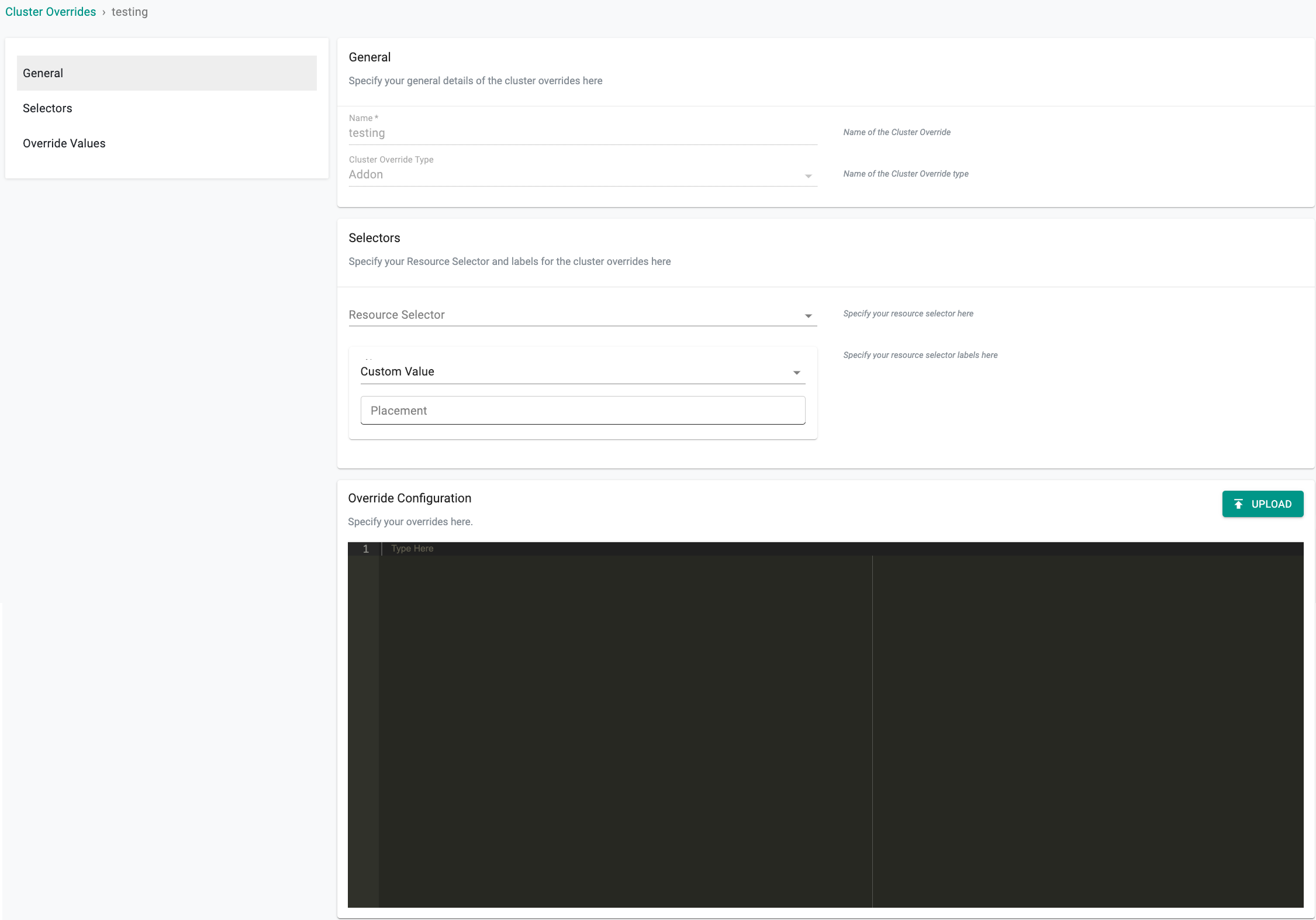

Cluster Overrides¶

In addition to using the RCTL CLI, users can now use the Console to "manage" the lifecycle for cluster overrides for both "workloads" and "addons" in a cluster blueprint.

Swagger APIs¶

The REST APIs have been enhanced to add support for repositories, CD agents, users and groups.

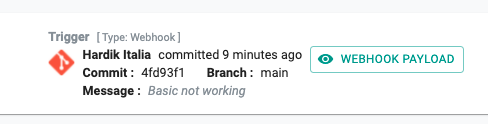

GitOps¶

Webhook payload details for pipeline received as part of a trigger from an external Git repository are now available for users to view and analyze for troubleshooting purposes.

White Labeling¶

Enhancements to white labeling for partners and service providers.

Product Docs URL¶

The product docs URL can now be white labeled, ensuring that only the partner's branding is presented to their end users/customers.

Bug Fixes¶

| Bug ID | Description |
|---|---|
| RC-10678 | Shared volume in the workload wizard is not working because the PVC's access mode is set to RWO and multiple pods cannot mount it |
| RC-10621 | Git repo based workload failed to deploy if the commit message has special characters |
| RC-10485 | SSO user login with "remember me" causes the login button to get stuck after logout |
| RC-10371 | When adding a new blueprint version, the addon version dropdown always shows only the currently selected version |
| RC-10276 | Failed to run conjurer on Ubuntu Server 18.04.5 on a bare metal node |
| RC-10272 | Unknown cluster health count is not shown in the Org and Project dashboard cluster card |
| RC-10078 | Staging: GitOps job UI display issue when one job took a long time to finish |
| RC-9691 | Infrastructure -> PSP -> When the name field length is exceeded, no error is raised |
| RC-8407 | Inconsistent data shown in Rafay for CPU and memory resources of the node |
| RC-8350 | Improve overall page loading performance for the Console |

v1.5 Patch 2¶

21 May, 2021

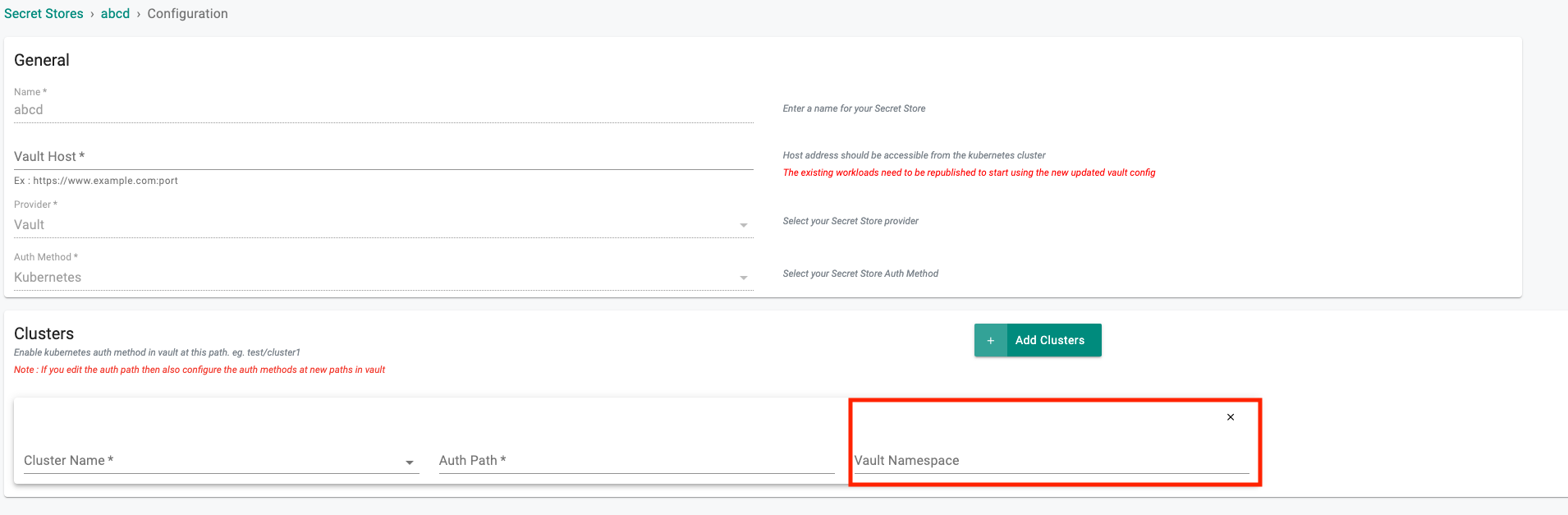

Vault Namespaces¶

Organizations that use HashiCorp Vault Enterprise and HCP Vault that implement Vault as a "service" internally can use "vault namespaces" to provide tenant level isolation across teams/BUs or applications. The controller's integration with HashiCorp Vault now supports the use of vault namespaces.
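At the Vault HTTP API level, a request is scoped to a namespace by sending the `X-Vault-Namespace` header alongside the `X-Vault-Token` header. The sketch builds, but does not send, a read request for a KV version 2 secret (path `/v1/<mount>/data/<path>`) using only the standard library. The address, token, namespace, mount and secret path are placeholders, and this is not how the controller's integration is necessarily implemented.

```python
import urllib.request


def vault_kv2_read_request(addr: str, token: str, namespace: str, mount: str, path: str):
    """Build (but do not send) a request to read a KV v2 secret in a Vault namespace."""
    url = f"{addr.rstrip('/')}/v1/{mount}/data/{path}"
    return urllib.request.Request(
        url,
        headers={
            "X-Vault-Token": token,
            # Scopes the request to the tenant's namespace (Vault Enterprise / HCP Vault).
            "X-Vault-Namespace": namespace,
        },
        method="GET",
    )


# Placeholder values for illustration only.
req = vault_kv2_read_request("https://vault.example.com:8200", "hypothetical-token", "team-a", "secret", "app/db")
```

Sending it with `urllib.request.urlopen(req)` would return the secret as JSON under `data.data`, assuming the token is valid in that namespace.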

v1.5 Patch 1¶

17 May, 2021

Bug Fixes¶

| Bug ID | Description |
|---|---|
| RC-9615 | White Labeling fixes for Ingress configuration in workload wizard |
| RC-9616 | White Labeling fixes for container configuration in workload wizard |
| RC-9617 | White Labeling fixes for container auto scaling configuration in workload wizard |
| RC-9618 | Description for Minimal blueprint needs to be corrected |
| RC-10272 | Unknown cluster health count is not shown in the Org and Project dashboard cluster card |

v1.5.0¶

07 May, 2021

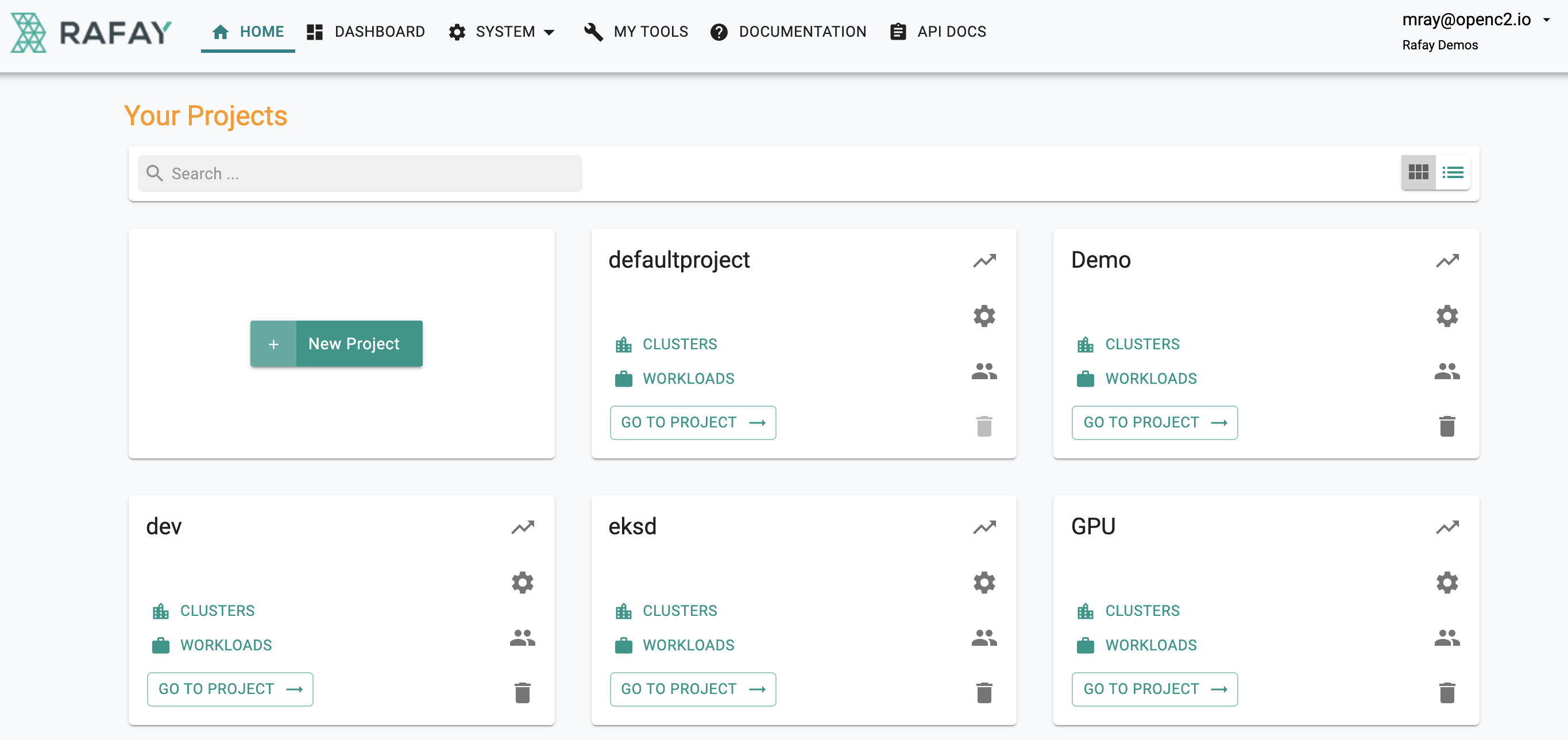

Home Page¶

When users log in to the console, they are now presented with a new home page with navigation options to quickly navigate to the project(s) they want to access.

Dashboards¶

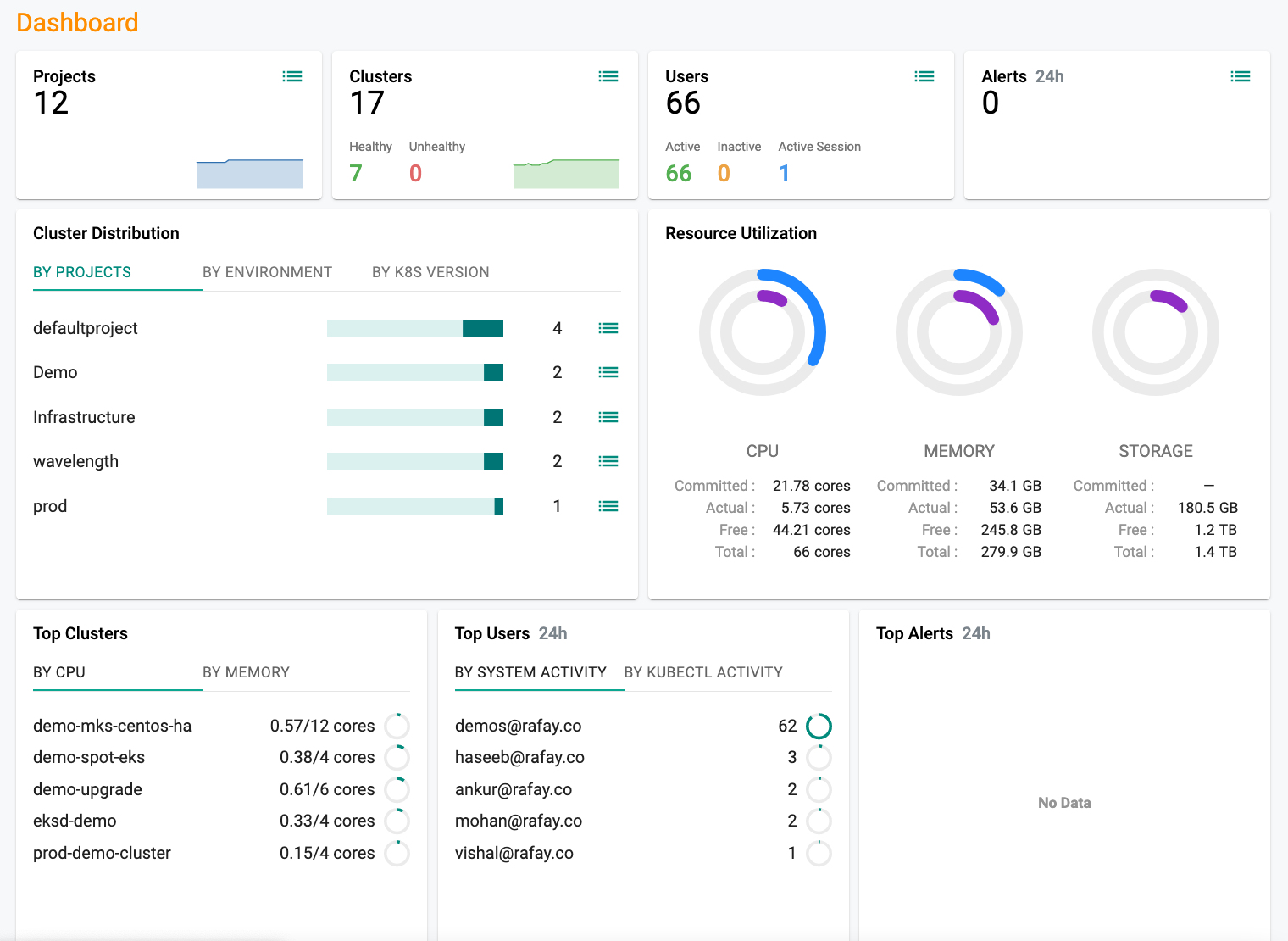

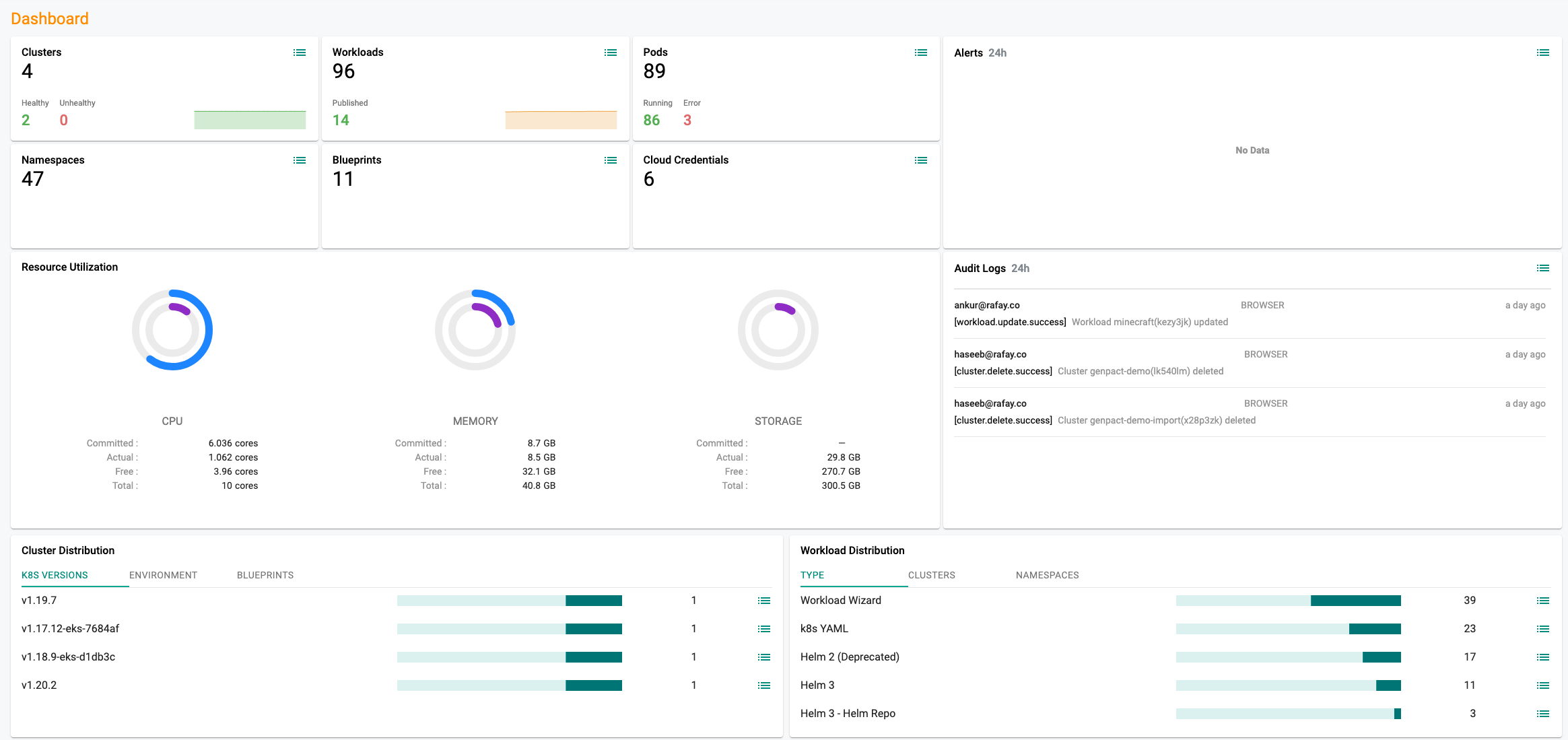

Organization dashboard¶

Organization Administrators will have access to an organization wide dashboard that will provide a bird’s eye view of resources across all projects.

Project dashboard¶

Project admins will have access to a project wide dashboard providing a bird’s eye view into all resources in the project.

Upstream Kubernetes¶

Customizable Retry Thresholds¶

For cluster provisioning and node additions in remote edge environments with slow or unreliable network connectivity, administrators can now specify retry thresholds for initial cluster provisioning and addition of new nodes. This ensures that provisioning and node additions keep retrying until they succeed or the specified threshold is reached.
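The retry threshold behaves like a bounded retry loop: keep attempting an operation until it succeeds or the configured number of attempts is exhausted. The sketch below shows that pattern with a fixed delay between attempts. `retry_until` is hypothetical, `step` stands in for an operation such as joining a node, and the controller's actual backoff policy is not described in this note.

```python
import time


def retry_until(step, max_attempts: int, delay_seconds: float = 0.0, sleep=time.sleep) -> int:
    """Call step() until it returns True or max_attempts is reached.

    Returns the number of attempts used; raises RuntimeError if every attempt fails.
    """
    for attempt in range(1, max_attempts + 1):
        if step():
            return attempt
        if attempt < max_attempts:
            sleep(delay_seconds)  # wait before retrying over a flaky link
    raise RuntimeError(f"gave up after {max_attempts} attempts")
```

Passing `sleep` as a parameter keeps the loop testable without real delays.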

New Kubernetes Versions¶

In addition to Kubernetes 1.17.x, 1.18.x and 1.19.x, customers can now provision clusters based on Kubernetes 1.20.x (based on the containerd CRI).

Existing upstream Kubernetes clusters can be seamlessly upgraded to 1.20.x.

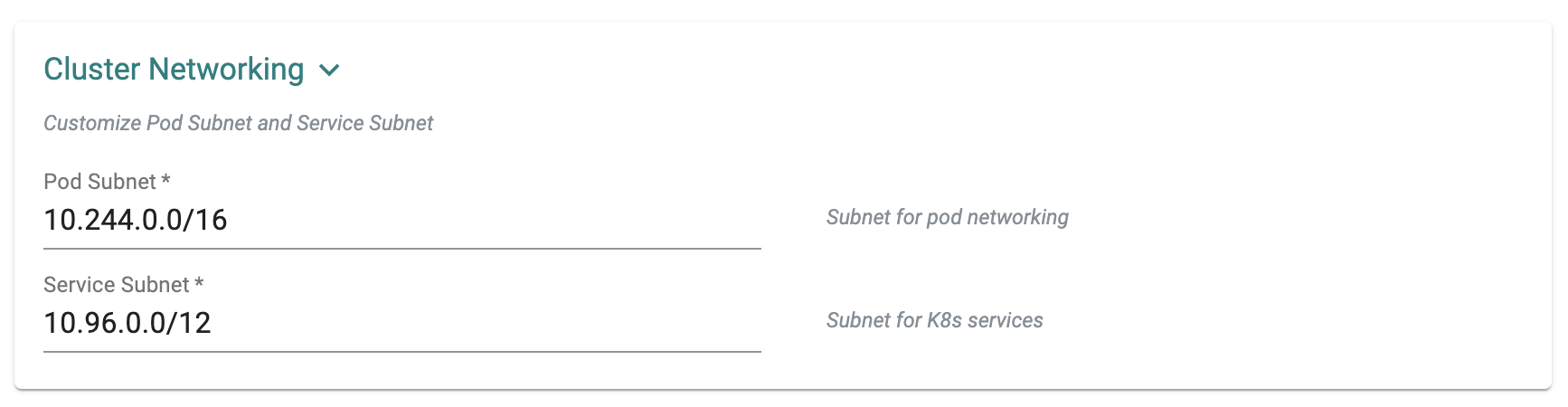

Custom Pod and Service Subnets¶

For scenarios where the customer's internal LAN subnet is the same as the default CIDR for the CNI, administrators can now specify a custom CIDR block for pod and service subnets during cluster provisioning.
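Before choosing custom CIDR blocks, it helps to confirm that they do not overlap the internal LAN. The sketch uses the standard `ipaddress` module to report every overlapping pair. All CIDRs in the usage line are example values; they are not the platform's documented defaults, and `overlapping` is a hypothetical helper.

```python
import ipaddress


def overlapping(lan_cidrs, cluster_cidrs):
    """Return (lan, cluster) CIDR pairs that overlap; mixed IPv4/IPv6 never overlap."""
    clashes = []
    for lan in lan_cidrs:
        lan_net = ipaddress.ip_network(lan)
        for cidr in cluster_cidrs:
            net = ipaddress.ip_network(cidr)
            if lan_net.version == net.version and lan_net.overlaps(net):
                clashes.append((lan, cidr))
    return clashes


# Example values: a LAN that collides with a candidate pod CIDR.
print(overlapping(["192.168.0.0/16"], ["192.168.0.0/16", "10.96.0.0/12"]))  # [('192.168.0.0/16', '192.168.0.0/16')]
```

An empty result means the candidate pod and service subnets can coexist with the LAN.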

Amazon EKS¶

Kubernetes Versions¶

Amazon EKS clusters can now be provisioned based on Kubernetes 1.19. Existing EKS clusters based on older versions of Kubernetes that are managed by the controller can be seamlessly upgraded to Kubernetes 1.19.

Storage Classes¶

Worker nodes can now be provisioned with support for Amazon’s gp3 storage class.

Spot Instances¶

Managed node groups can now be provisioned to use spot instances for significant cost savings.

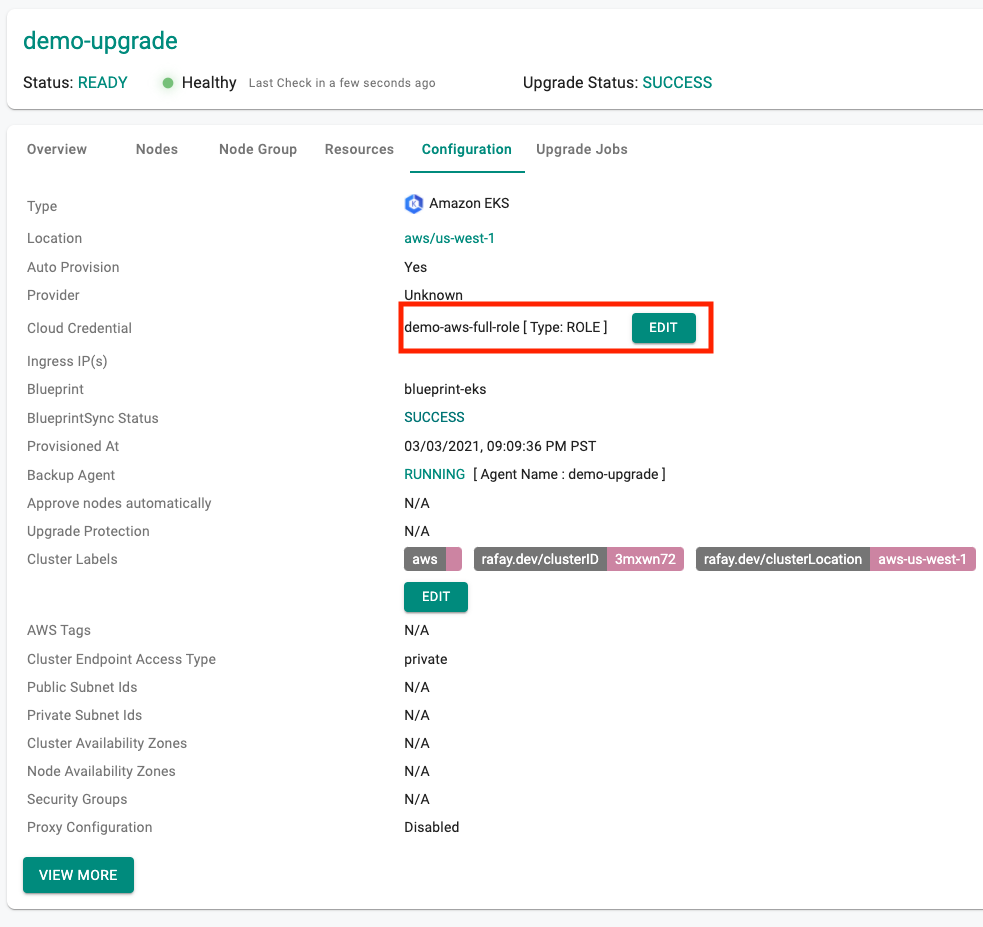

Cloud Credentials¶

Administrators can quickly identify the cloud credentials associated with a managed EKS Cluster on the web console. They are also provided with an intuitive workflow to replace/switch cloud credentials after a cluster has been provisioned, giving them flexibility in ongoing operations.

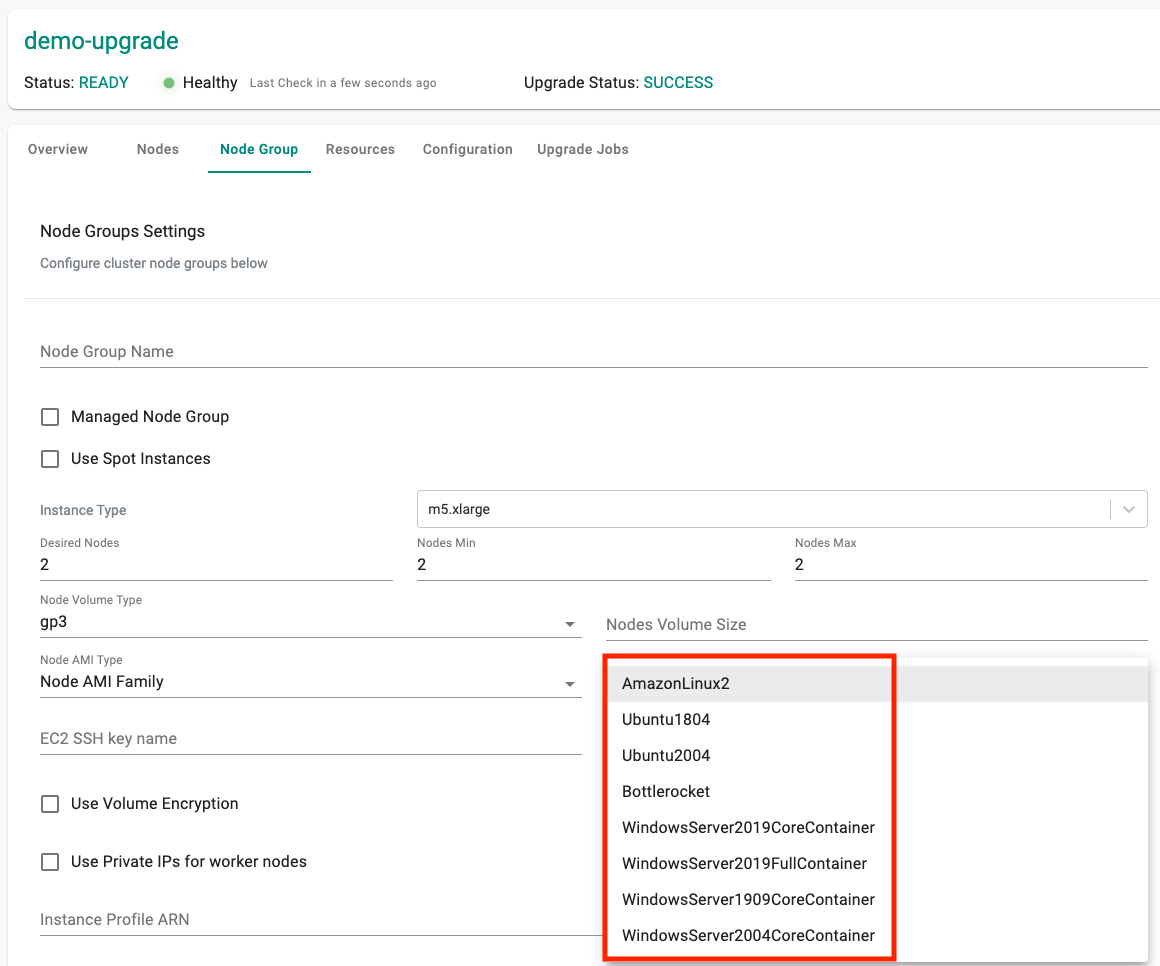

Windows Node group Support¶

Administrators can now provision and manage self managed Windows node groups allowing them to deploy and operate Windows based containers on managed EKS clusters.

Advanced Customization¶

Administrators can also now optionally view, edit and perform advanced customization of the cluster’s configuration on the controller to provision a cluster or to add a new node group. They can also programmatically download and save the cluster specification of an active cluster in a version controlled Git repository. Examples for advanced customization options are available for Fargate profiles and user data for customization of EC2 based worker nodes.

```yaml
# Usage: rctl create cluster eks -f ./test-eks-cluster.yaml
kind: Cluster
metadata:
  labels:
    env: dev
    type: eks-workloads
  name: test-eks
  project: defaultproject
spec:
  type: eks
  cloudprovider: dev-aws
  blueprint: standard-blueprint
---
apiVersion: rafay.io/v1alpha5
kind: ClusterConfig
metadata:
  name: test-eks
  region: us-west-1
  tags:
    'app': 'demo'
    'owner': 'myowner'
vpc:
  subnets:
    private:
      us-west-1b:
        id: subnet-xxxxxxxxxxxxxxxxx
      us-west-1c:
        id: subnet-xxxxxxxxxxxxxxxxx
    public:
      us-west-1b:
        id: subnet-xxxxxxxxxxxxxxxxx
      us-west-1c:
        id: subnet-xxxxxxxxxxxxxxxxx
iam:
  serviceRoleARN: arn:aws:iam::xxxxxxxxxxxx:role/<IAM_ROLE_NAME>
nodeGroups:
  - name: nodegroup-4
    instanceType: t3.xlarge
    desiredCapacity: 1
    minSize: 1
    maxSize: 3
    iam:
      instanceProfileARN: arn:aws:iam::xxxxxxxxxxxx:instance-profile/<IAM_INSTANCE_PROFILE_NAME>
      instanceRoleARN: arn:aws:iam::xxxxxxxxxxxx:role/<IAM_ROLE_NAME>
    volumeType: gp3
    volumeSize: 50
    privateNetworking: true
    volumeEncrypted: true
    volumeKmsKeyID: xxxxxxxx-xxxx-xxxx-xxxx-xxxxxxxxxxxx
    labels:
      'app': 'myapp'
      'owner': 'myowner'
    ssh:
      allow: true
      publicKeyName: demo
    securityGroups:
      attachIDs:
        - sg-abc134
        - sg-def345
secretsEncryption:
  # ARN of the KMS key
  keyARN: "arn:aws:kms:us-west-1:000000000000:key/00000000-0000-0000-0000-
```

In-Place Upgrade Enhancements¶

Keep your worker node OS patched and up to date. Perform seamless AMI updates for both managed and self managed node groups for both EKS Optimized AMIs and Custom AMIs.

Force Delete¶

For scenarios where the underlying infrastructure in AWS has been deleted out-of-band or if the access credentials have been revoked, the administrator can now force delete the cluster in the controller.

Backup and Restore¶

Turnkey workflows are now available for cluster disaster recovery use cases such as cluster migration and cluster cloning, ensuring that admins can perform these in a reliable and standardized manner. Users are now provided with a 1-click workflow to initiate and perform restore operations from a backup.

Cluster Blueprints¶

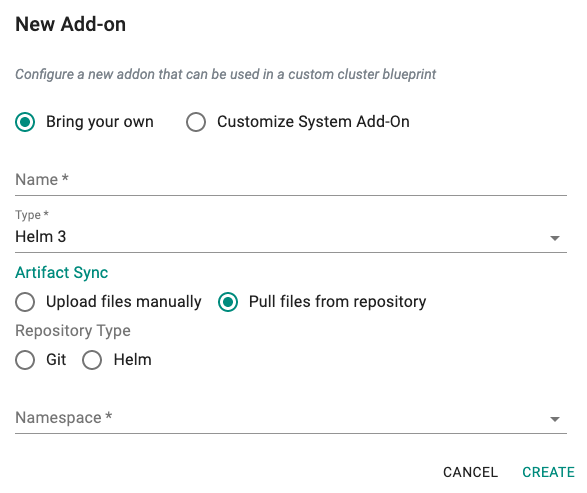

Addons from repository¶

In addition to being able to upload Helm and k8s yaml artifacts for addons to the controller, they can now also be created referencing the artifacts from Git and Helm repositories in conformance with the GitOps paradigm.

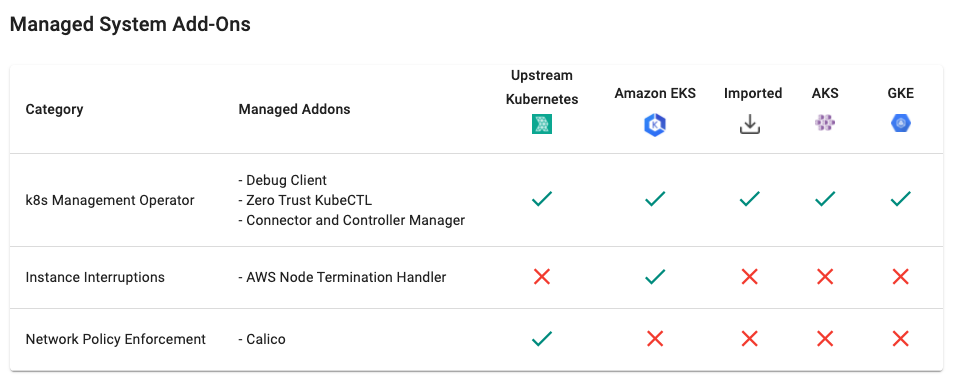

Minimal Cluster Blueprint¶

In addition to the default cluster blueprint, administrators now also have the option to select a minimal cluster blueprint. This is a lightweight blueprint that does not come with addons for monitoring, logging etc and is well suited for resource constrained Kubernetes deployments and environments where organizations have existing solutions for critical capabilities such as monitoring, logging etc. Note that for clusters with the minimal blueprint, the cluster dashboards will provide significantly scaled down visualization and metrics.

Search for Addons and Blueprints¶

It is common for organizations to have hundreds of addons and blueprints. Administrators can now leverage the built-in search capability to quickly find the addons they are looking for in the available list of Addons and Blueprints. Further, while adding specific addons to a cluster blueprint, administrators can use the search functionality to quickly find and select the relevant addons, resulting in increased productivity and a better user experience.

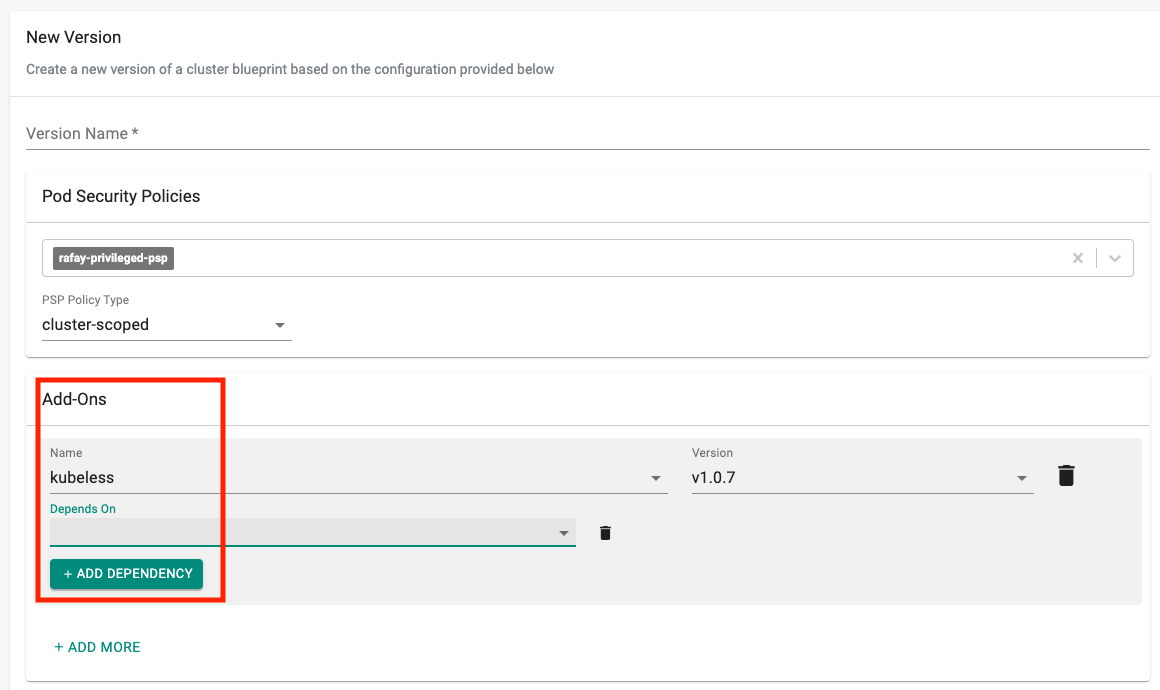

Addon Dependency Management¶

In a blueprint that comprises multiple addons, there are situations where certain addons can be applied only when certain other addons are already deployed and operational on the cluster. This calls for a directed acyclic graph (DAG) execution model wherein some components in a blueprint can be created/updated in parallel and others only once their prerequisites are available. Administrators can now specify and configure dependencies while creating a blueprint, and the controller takes care of implementing dependency management across addons in cluster management operations.
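The DAG execution model can be sketched with Python's standard `graphlib` module (Python 3.9+). Addons are grouped into "waves": every addon in a wave depends only on addons in earlier waves, so each wave can be deployed in parallel. The addon names and the `deployment_waves` helper are hypothetical; a real controller would mark an addon done only once it is healthy on the cluster.

```python
from graphlib import CycleError, TopologicalSorter


def deployment_waves(dependencies: dict) -> list:
    """Group addons into waves; each addon depends only on earlier waves.

    dependencies maps an addon name to the set of addons it depends on.
    Raises CycleError if the dependencies are not acyclic.
    """
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()  # validates the graph and raises CycleError on cycles
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # these can be applied in parallel
        waves.append(ready)
        sorter.done(*ready)  # in practice: only after they are operational
    return waves


addons = {
    "cert-manager": set(),
    "ingress-nginx": set(),
    "monitoring": {"cert-manager"},
    "app-gateway": {"ingress-nginx", "cert-manager"},
}
print(deployment_waves(addons))  # [['cert-manager', 'ingress-nginx'], ['app-gateway', 'monitoring']]
```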

Cluster overrides for addons¶

When deploying addons to a fleet of clusters, there can be situations where certain resources need to be customized at cluster level (customizable to a single cluster or group of clusters). With cluster overrides, the same addon can now be deployed on a fleet of clusters with customizable configurations that can differ on a cluster to cluster basis. Internally, this feature uses the generic capability of k8s labels and label selectors to match resources, replaceable values, target clusters. This makes it immensely flexible and powerful to be applied on a wide range of customer scenarios.
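The label selector matching behind cluster overrides can be sketched as follows. Each override carries an equality-based selector (like Kubernetes `matchLabels`) and a set of replacement values; overrides whose selector matches a cluster's labels are applied in order. The override structure (`selector`/`values` keys) and helper names are hypothetical, and set-based `matchExpressions` are not modeled.

```python
def matches(selector: dict, labels: dict) -> bool:
    """Equality-based matching: every selector key must be present with the same value."""
    return all(labels.get(key) == value for key, value in selector.items())


def values_for_cluster(base_values: dict, overrides: list, cluster_labels: dict) -> dict:
    """Apply each matching override in order; later matches win."""
    result = dict(base_values)
    for override in overrides:
        if matches(override["selector"], cluster_labels):
            result.update(override["values"])
    return result


base = {"replicas": 2, "region": "us"}
overrides = [
    {"selector": {"env": "prod"}, "values": {"replicas": 5}},
    {"selector": {"env": "prod", "geo": "eu"}, "values": {"region": "eu"}},
]
print(values_for_cluster(base, overrides, {"env": "prod", "geo": "eu"}))  # {'replicas': 5, 'region': 'eu'}
```

As in Kubernetes, an empty selector matches every cluster.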

Workloads¶

Cluster overrides for workloads¶

When deploying workloads to a fleet of clusters, there can be situations where certain resources need to be customized at a cluster level. With cluster overrides, the same workload can now be deployed on a fleet of clusters with customizable configurations that can differ on a cluster to cluster basis. The cluster overrides feature internally uses the generic capability of k8s labels and label selectors to match resources, replaceable values, target clusters. This makes it immensely flexible and powerful to be applied on a wide range of customer scenarios.

Debug and Troubleshooting enhancements¶

An intuitive, detailed debug workflow powered by the underlying zero trust control channel is now available for workloads. It gives users end-to-end traceability and detailed visibility into all k8s resources associated with a workload. Users can also debug and troubleshoot efficiently with built-in views of k8s events and logs, and can even run kubectl exec on remote containers at the click of a button. In addition to the current state, users get insight into trends for the critical k8s resources associated with their workloads.

Multiple values in Helm3 workloads¶

Support for linking multiple values files to a single Helm chart, enabling advanced Helm 3 chart customizations.
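When several values files are supplied to Helm (for example `helm install -f a.yaml -f b.yaml`), later files take precedence: nested maps are merged key by key, while scalars and lists are replaced wholesale. The sketch below models that layering on already-parsed dictionaries so it runs without a YAML library. It is a simplified approximation under those assumptions (Helm's rule that setting a key to `null` deletes it is not modeled), and the values shown are hypothetical.

```python
import copy


def merge_values(base: dict, override: dict) -> dict:
    """Layer one values file on top of another, Helm style.

    Nested maps are merged key by key; scalars and lists in `override`
    replace the corresponding entry in `base`. (Helm's "set a key to null
    to delete it" rule is not modeled here.)
    """
    result = copy.deepcopy(base)
    for key, value in override.items():
        if isinstance(value, dict) and isinstance(result.get(key), dict):
            result[key] = merge_values(result[key], value)
        else:
            result[key] = copy.deepcopy(value)
    return result


def layer_values(*values_files: dict) -> dict:
    """Apply values files left to right; the last file wins on conflicts."""
    merged: dict = {}
    for values in values_files:
        merged = merge_values(merged, values)
    return merged


# Hypothetical contents of three values files, already parsed from YAML.
defaults = {"replicaCount": 1, "image": {"repository": "nginx", "tag": "1.21"},
            "ingress": {"hosts": ["app.example.com"]}}
prod = {"replicaCount": 3, "image": {"tag": "1.21.6"}}
eu = {"ingress": {"hosts": ["eu.app.example.com"]}}

print(layer_values(defaults, prod, eu))
# {'replicaCount': 3, 'image': {'repository': 'nginx', 'tag': '1.21.6'}, 'ingress': {'hosts': ['eu.app.example.com']}}
```

Splitting values this way keeps shared defaults in one file and limits environment- or region-specific files to the few keys that actually differ.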

GitOps¶

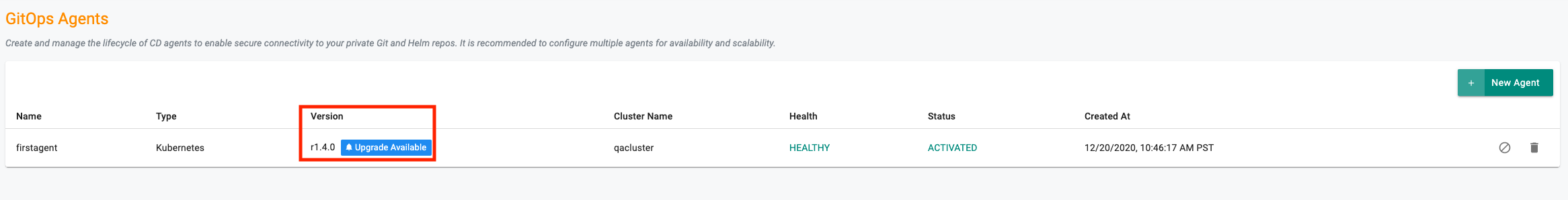

CD Agent Lifecycle Management¶

Support for running multiple versions of the CD agent on the same cluster, with the ability to activate or deactivate specific agents. Administrators can now see the exact version of each CD agent and manage each agent's lifecycle individually, giving them fine-grained control.

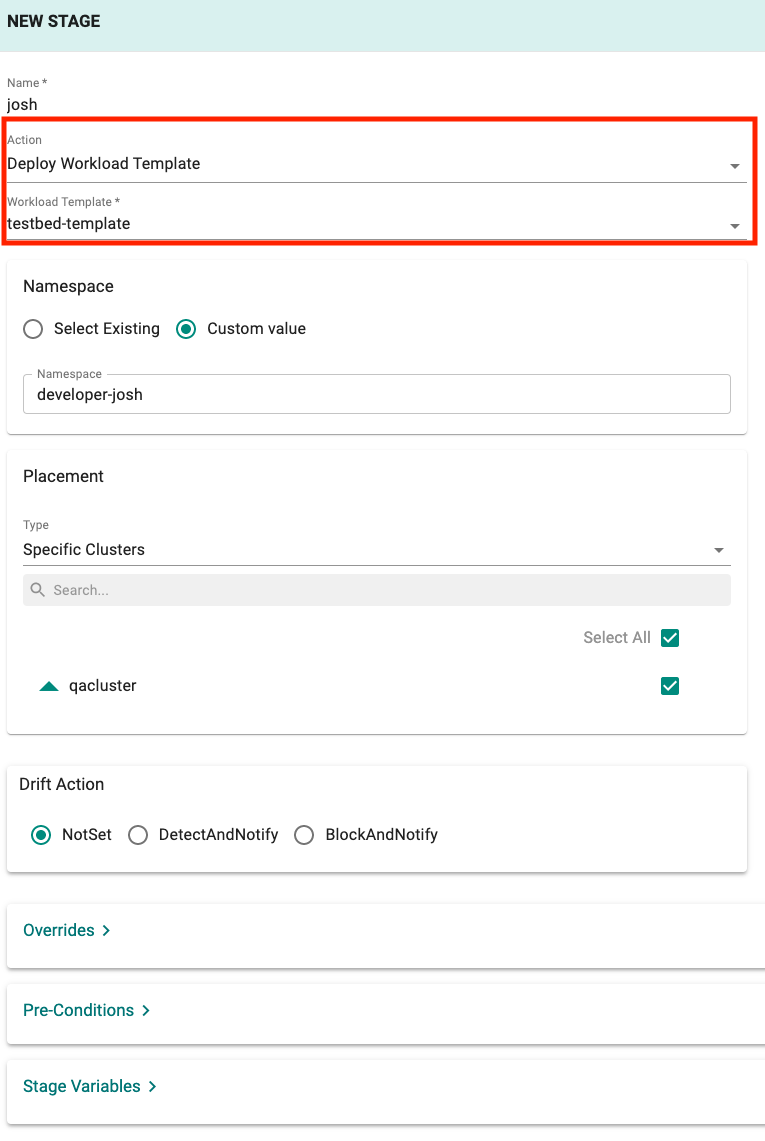

Workload templates¶

In some cases, organizations need to associate the same workload with different pipelines. For example, separate pipelines for dev, staging and production. Instead of creating and maintaining separate workloads per pipeline, users can create a workload template that can be associated with one or more pipelines with customizable values. The customizable values can be either provided at configuration time or can be dynamically populated by the system based on evaluation of custom variables and expressions.
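The idea of one template rendered with different values per pipeline can be sketched with Python's `string.Template`. Some values are fixed when the pipeline is configured, and others are computed at run time from variables. Everything here is hypothetical: the `${...}` placeholder syntax, the field names and the use of lambdas as "expressions" are assumptions for illustration, not the platform's template format or expression language.

```python
from string import Template
from typing import Callable

# Hypothetical workload template shared by several pipelines.
WORKLOAD_TEMPLATE = Template(
    "name: web-${env}\n"
    "namespace: ${namespace}\n"
    "replicas: ${replicas}\n"
    "image: registry.example.com/web:${image_tag}\n"
)


def render(static_values: dict, variables: dict,
           expressions: dict[str, Callable[[dict], object]]) -> str:
    """Fill the template for one pipeline.

    `static_values` are supplied when the pipeline is configured;
    `expressions` compute the remaining values at run time from `variables`.
    Template.substitute raises KeyError if any placeholder is left unfilled.
    """
    values = dict(static_values)
    for name, expr in expressions.items():
        values[name] = expr(variables)
    return WORKLOAD_TEMPLATE.substitute(values)


# Same template, two pipelines: dev tags images by commit, prod by release.
dev = render({"env": "dev", "namespace": "web-dev", "replicas": 1},
             {"git_sha": "3f9c2ab41d"},
             {"image_tag": lambda v: v["git_sha"][:7]})
prod = render({"env": "prod", "namespace": "web-prod", "replicas": 4},
              {"release": "v2.3.0"},
              {"image_tag": lambda v: v["release"]})
print(dev)
```

Failing loudly on a missing value (rather than rendering an empty field) is the safer default for deployment manifests.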

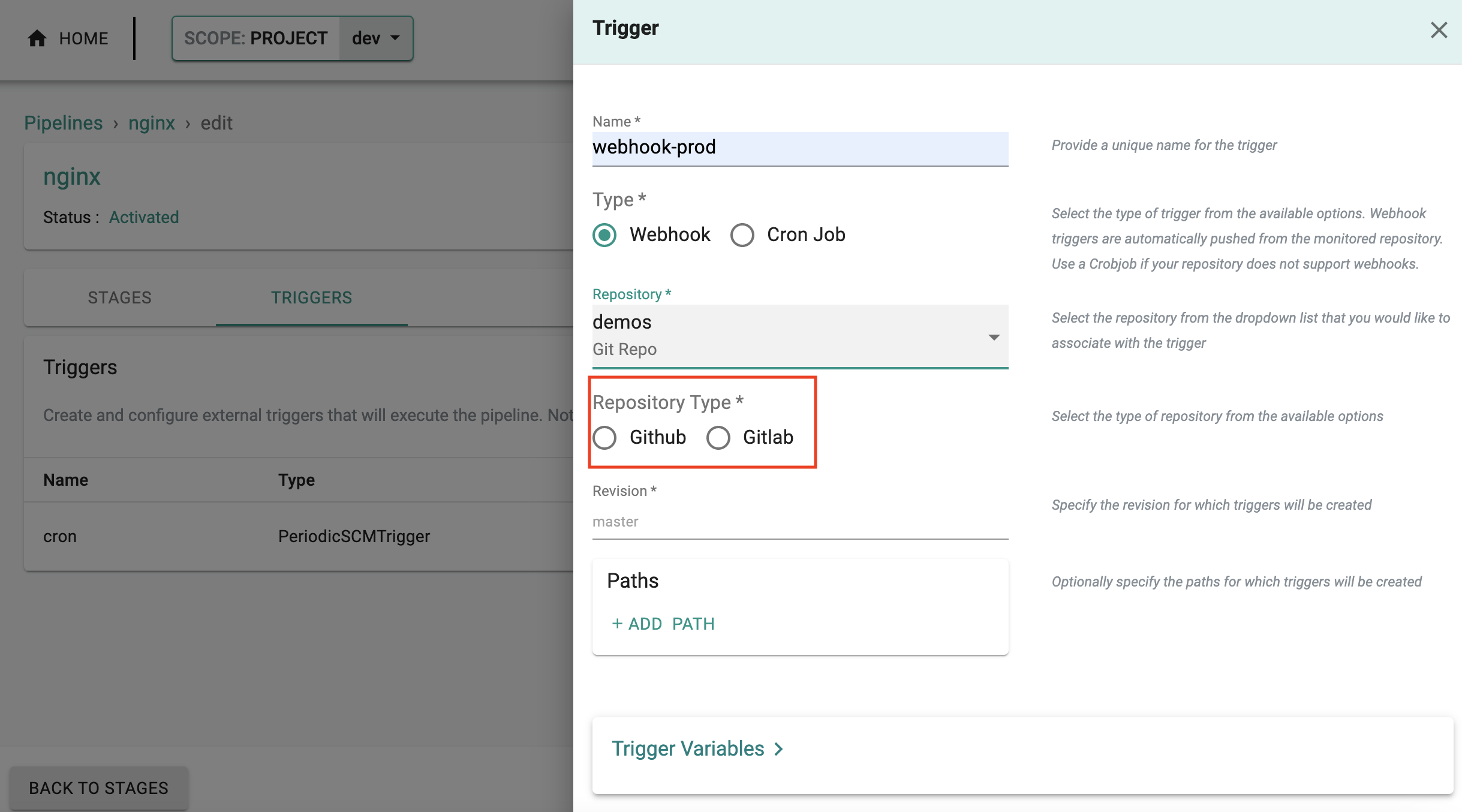

Webhooks for GitLab repository¶

Adds first-class support for managing webhooks from GitLab repositories as GitOps pipeline triggers.
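For context on how a GitLab webhook can drive a pipeline trigger: GitLab sends the webhook's configured secret token in the `X-Gitlab-Token` header and the event type in `X-Gitlab-Event` (`Push Hook` for pushes), and push payloads carry the pushed `ref`. The sketch below shows a receiver that validates the token and triggers only on pushes to one branch. It is a hypothetical illustration, not the controller's handler; the `should_trigger` function, secret and branch are assumptions.

```python
import hmac
import json


def should_trigger(headers: dict[str, str], body: bytes,
                   secret: str, branch: str) -> bool:
    """Decide whether a GitLab webhook delivery should trigger the pipeline.

    GitLab sends the webhook's configured secret token in the X-Gitlab-Token
    header and the event type in X-Gitlab-Event ("Push Hook" for pushes).
    """
    h = {k.lower(): v for k, v in headers.items()}  # HTTP headers are case-insensitive
    token = h.get("x-gitlab-token", "")
    # Constant-time comparison avoids leaking the secret through timing.
    if not hmac.compare_digest(token.encode(), secret.encode()):
        return False
    if h.get("x-gitlab-event") != "Push Hook":
        return False
    payload = json.loads(body)
    return payload.get("ref") == f"refs/heads/{branch}"


body = json.dumps({"object_kind": "push", "ref": "refs/heads/main"}).encode()
headers = {"X-Gitlab-Token": "s3cr3t", "X-Gitlab-Event": "Push Hook"}
print(should_trigger(headers, body, secret="s3cr3t", branch="main"))  # True
```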

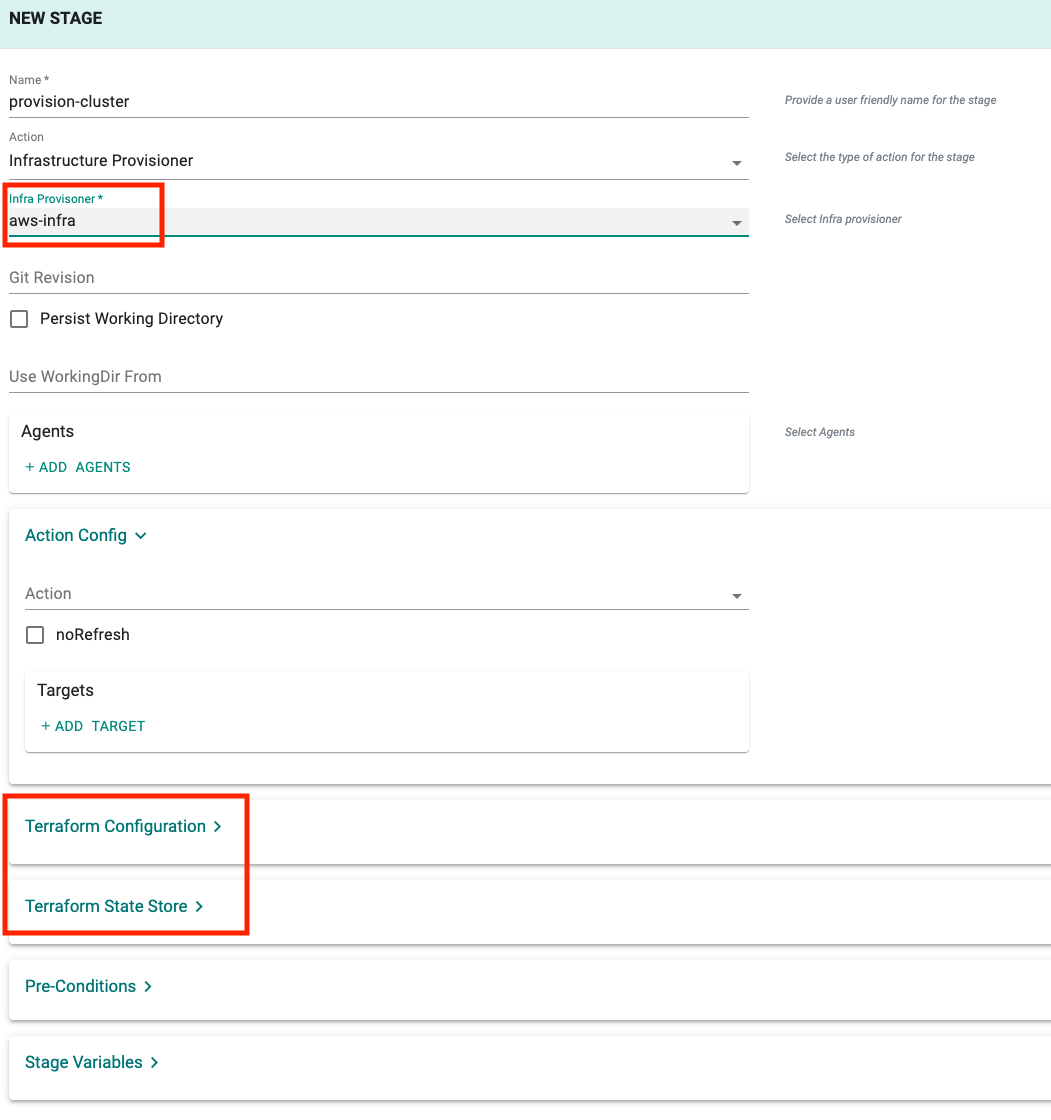

Infra Provisioning Stage¶

Support for a generic Terraform provisioning stage to plan and apply infrastructure changes as part of the GitOps continuous delivery pipeline. Users can configure Terraform stages and link them with approval and workload deployment stages to build a highly customizable and effective continuous delivery pipeline.
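A common way to combine a Terraform stage with an approval stage is to save the plan to a file, have it reviewed, and then apply exactly that saved plan (`terraform plan -out=FILE`, then `terraform apply FILE`). The sketch below models that flow. It is an assumption about how such a stage could be structured, not how the platform runs Terraform; the plan file name and the `approve` callback are hypothetical, and by default the commands are only recorded, not executed.

```python
import subprocess
from typing import Callable

PLAN_FILE = "tfplan"  # hypothetical name for the saved plan file

PLAN_CMD = ["terraform", "plan", "-input=false", f"-out={PLAN_FILE}"]
APPLY_CMD = ["terraform", "apply", "-input=false", PLAN_FILE]


def terraform_stage(workdir: str, approve: Callable[[], bool],
                    execute: bool = False) -> list[list[str]]:
    """Plan, gate on approval, then apply exactly the plan that was reviewed.

    Returns the commands issued. With execute=False (the default) nothing is
    run, so the flow can be inspected without Terraform installed.
    """
    issued: list[list[str]] = []

    def run(cmd: list[str]) -> None:
        issued.append(cmd)
        if execute:
            subprocess.run(cmd, cwd=workdir, check=True)

    run(PLAN_CMD)
    if approve():  # e.g. the outcome of a linked approval stage
        run(APPLY_CMD)  # applying a saved plan does not re-plan
    return issued


print(terraform_stage("infra/", approve=lambda: True))
```

Applying the saved plan file means the changes that reach the infrastructure are the ones the approver actually reviewed.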

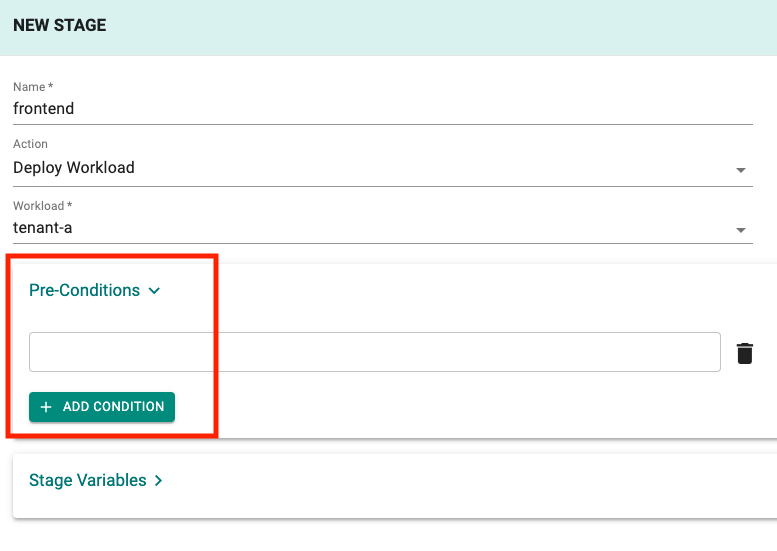

Stage preconditions¶

Facilitates conditional execution of stages in a pipeline. Users can attach one or more conditions to a stage in their GitOps pipeline, and the pipeline executes that stage only if its conditions are satisfied at runtime.
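The sketch below models stage preconditions as predicates evaluated against the pipeline's runtime context when the stage is reached; a stage runs only if all of its conditions hold. It assumes "all conditions must pass" semantics, and the `Stage` class, context keys and condition functions are hypothetical rather than the platform's configuration format.

```python
from dataclasses import dataclass, field
from typing import Callable

Condition = Callable[[dict], bool]


@dataclass
class Stage:
    name: str
    preconditions: list[Condition] = field(default_factory=list)


def run_pipeline(stages: list[Stage], context: dict) -> tuple[list[str], list[str]]:
    """Run stages in order, skipping any stage whose preconditions are not all met.

    Conditions are evaluated when the stage is reached, against the
    pipeline's runtime context.
    """
    executed, skipped = [], []
    for stage in stages:
        if all(cond(context) for cond in stage.preconditions):
            executed.append(stage.name)  # a real runner would execute the stage here
        else:
            skipped.append(stage.name)
    return executed, skipped


pipeline = [
    Stage("deploy-staging"),
    Stage("deploy-prod", [lambda ctx: ctx["branch"] == "main",
                          lambda ctx: ctx["tests_passed"]]),
]
print(run_pipeline(pipeline, {"branch": "feature-x", "tests_passed": True}))
# (['deploy-staging'], ['deploy-prod'])
```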

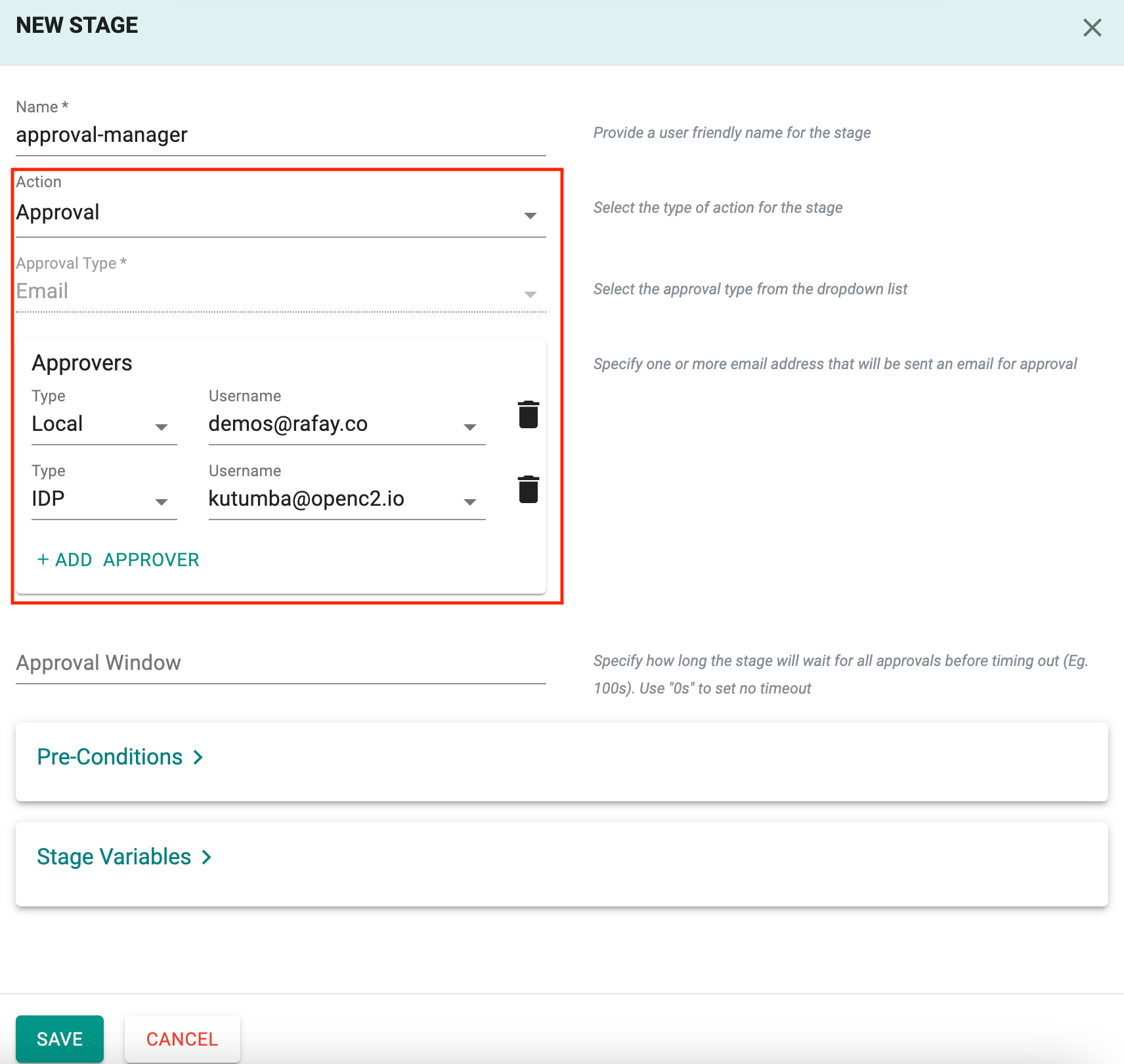

Approval workflow enhancements¶

In the approval stage, customers can now specify one or more users as approvers. Only these users can approve once the workflow reaches that approval stage. If the approvers list contains more than one user, approval from any one of them is sufficient. To model a workflow where multiple approvals are mandatory, customers can link multiple approval stages in sequence, each with its own approvers.
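The approval rules above (any one listed approver satisfies a stage; mandatory multiple approvals are modeled as a chain of stages) can be sketched as follows. The `ApprovalStage` class, the helper functions and the user names are hypothetical; this illustrates the semantics described in the text rather than the platform's API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ApprovalStage:
    name: str
    approvers: frozenset[str]
    approved_by: Optional[str] = None


def approve(chain: list[ApprovalStage], user: str) -> str:
    """Record `user`'s approval on the first pending stage of the chain.

    One approval from any listed approver satisfies a stage; requiring
    several approvals means chaining several stages.
    """
    for stage in chain:
        if stage.approved_by is None:
            if user not in stage.approvers:
                raise PermissionError(f"{user} cannot approve {stage.name}")
            stage.approved_by = user
            return stage.name
    raise ValueError("every approval stage is already approved")


def released(chain: list[ApprovalStage]) -> bool:
    return all(stage.approved_by is not None for stage in chain)


chain = [ApprovalStage("security-review", frozenset({"alice", "bob"})),
         ApprovalStage("release-manager", frozenset({"carol"}))]
approve(chain, "bob")      # bob alone satisfies security-review
print(released(chain))     # False: release-manager still pending
approve(chain, "carol")
print(released(chain))     # True
```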

Role Based Access Control¶

Resource sharing and Governance¶

Create, manage and share organization-level objects such as cloud credentials, clusters, blueprints and addons with all or specifically identified projects in the Organization. This workflow enables organizations to implement and centralize standards across all projects in their organization, achieve governance and enforce policies.

API keys for SSO users¶

Support for managing API keys for Single Sign On (SSO) users. SSO users can now use the RCTL CLI, which uses API keys to make REST API calls to the controller, for day-to-day operations.

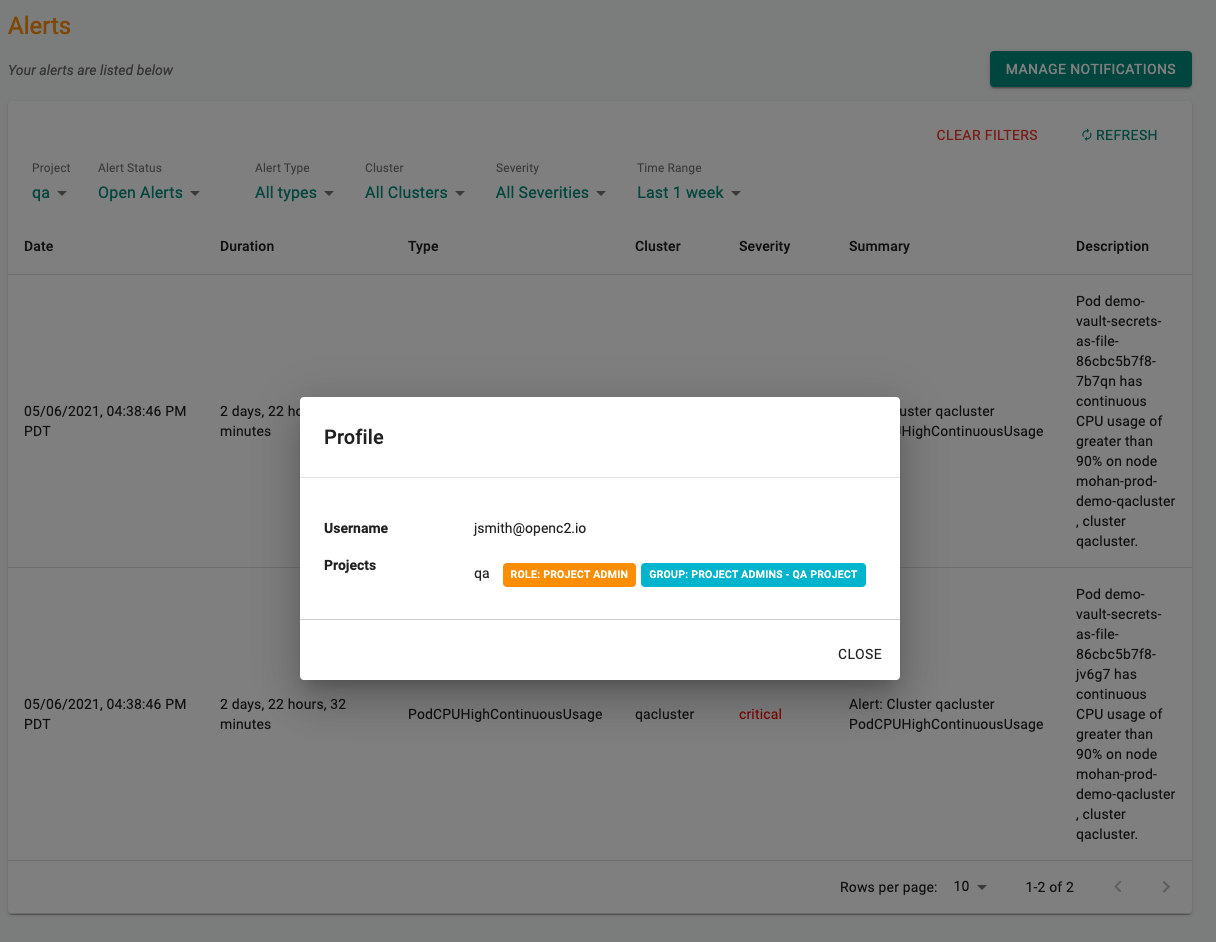

Alerts for Project Users¶

Users with "project admin" or "read only project admin" roles now have visibility into pre-filtered audit logs for the projects they have access to.

Networking¶

Forward Proxy Support¶

Organizations that require all outbound HTTPS requests to the Internet to go through an explicit forward proxy can now specify the forward proxy details for ongoing control channel communications between the managed cluster and the SaaS controller.

Alerts & Audits¶

Whitelabel support for email communications¶

Details in email notification templates for alerts and approvals for GitOps pipelines can now be configured and customized per partner.

Pipeline agent health checks¶

Alerts are now automatically generated if the CD agent health deteriorates and requires immediate attention.

Audit trail for namespaces¶

Audit events are generated and captured for administrative actions on namespaces.

Bug Fixes¶

| Bug ID | Description |
|---|---|
| RC-10110 | API only Namespace Admin user is not able to download kubeconfig via API or RCTL |
| RC-10104 | CPU and Memory Utilization query issue with multi-container Pods: should include the pod name along with the container name |
| RC-9982 | Pipeline approval email is not working for partners |
| RC-9962 | For helm repo workload/addon, if no Chart Version is provided, pull the latest version of the helm chart |
| RC-9955 | Crictl warning for runtime with K8S 1.20.5 |
| RC-9826 | Create Project: Do not allow use of capitals in name |
| RC-9743 | When there are multiple resources in a template with a helm hook, not all the resources in the file are installed in the cluster |
| RC-9706 | Root filled in one day by syslog |
| RC-9705 | Opening a project shows overlapping names |
| RC-9703 | Alert emails have typos in the Possible Impact section |
| RC-9702 | Cluster -> Resources -> Workloads -> Published Date is not correct |
| RC-9700 | Workload Publish Success false positive when updating workload |
| RC-9698 | Pod email alert message for the Possible Impact section should be corrected |
| RC-9697 | Swagger documentation update for delete cluster operation |
| RC-9696 | Alert emails -> When cluster/pod health is restored the email has wrong |
| RC-9692 | Infrastructure -> PSP -> Unable to delete PSP whose name is more than 63 characters |
| RC-9691 | Infrastructure -> PSP -> When we exceed the name field length, no error is raised |
| RC-9689 | GitOps Pipelines -> Create new pipeline -> Description field doesn't seem to have a char limit |
| RC-9687 | Integrations -> Registry -> When we exceed the name field length, incorrect error is raised |
| RC-9686 | System -> Projects -> When we exceed the name or description field length, incorrect error is raised |
| RC-9685 | System -> Users -> Unable to search by firstname or lastname |
| RC-9684 | System -> Users -> When we exceed the fields length, incorrect error is raised |
| RC-9683 | System -> Users -> Alerts Recipients -> Email field should have a maximum character limit |
| RC-9656 | Search for Workload with hostname does not work if giving the whole link of the hostname |
| RC-9653 | Workload -> Debug : Age is not shown in days |
| RC-9652 | Placement policy using value only label does not work for workload |
| RC-9651 | Rafay Clusters - blueprint filter is not returning the same results |
| RC-9647 | Remove Monitors setting for the cluster from UI |
| RC-9646 | Remove maintenance mode from UI |
| RC-9645 | Pods, Events and Trends icons are not working |
| RC-9644 | PV are not displayed in the cluster resources tab |
| RC-9641 | Systems > Alerts are not filtered correctly by severity |
| RC-9640 | Workload count from the list view is not displaying the correct values |
| RC-9639 | System -> Audit logs -> Kubectl logs filter issues |
| RC-9636 | Empty red error message box displayed when creating an invalid user |
| RC-9635 | Right after the workload is published, check the events in the Cluster Dashboard > Resource > Namespace, the "Age" show weird time "-1y-1d" for the 1st minute |
| RC-9591 | Zero trust kubectl access to cluster lost for 15 mins |
| RC-9449 | Managed ingress doesn't get enabled from RCTL |
| RC-9394 | Enable ALB and EFS additional IAM roles for EKS cluster does not take effect |
| RC-9390 | UI: Hide EKS NodeGroup Node AMI Family option depending on the version selected for the EKS cluster |
| RC-9375 | Exclude kube-system, rafay-system, rafay-infra from secret admission webhook |
| RC-9357 | Not able to create an API key for org admin user using partner admin API Key |
| RC-9277 | Kubectl console from the UI is overlapping with the dashboard |
| RC-9276 | Pagination is not reset when changing filter in the cluster Resources Dashboard page |
| RC-9269 | Swagger-API: Cleanup warnings/errors during generation of SDK |
| RC-9234 | Workload with registry integration fails to publish due to client pool timeout |
| RC-9231 | Addon names should be validated to be under 63 characters to avoid blueprint sync status being stuck In Progress forever |
| RC-9192 | Workload in Publish tab shows Config changed republish though no config is changed; is_dirty flag is getting set to true |
| RC-8935 | Run 'vault-init' and 'vault-sidecar' container as non privileged, nonroot user |
| RC-8409 | Clusters view is not displayed correctly in window mode |
| RC-7702 | End of Life Software =IP.I.15 for openresty |

v1.4.3.1¶

05 Apr, 2021

No new features were introduced in this patch.

Bug Fixes¶

| Bug ID | Description |
|---|---|
| RC-9743 | For helm3 workloads, when there are multiple resources defined in a template, helm hooks are not getting executed |

QCOW and OVA Image Updates¶

01 Apr, 2021

QCOW Image Update¶

Updated QCOW and OVA images (v1.4) are now available to customers. This is primarily an ongoing security update that incorporates the latest OS kernel updates and container images, and refreshes the OS packages.

This is a packaging-only release focused on ensuring that newly provisioned clusters based on the QCOW and OVA images do not require post-provisioning kernel-level security patches (and the reboots they entail).

Bug Fixes¶

This does not incorporate any new features or bug fixes. The exact list of file changes in the updated QCOW image will be provided to customers and partners upon request.

v1.4.0 QCOW Image¶

24 Feb, 2021

QCOW Image Update¶

An updated QCOW image (v1.4) is now available to customers. This is primarily an ongoing security update that incorporates the latest OS kernel updates and container images, and refreshes the OS packages.

This is a packaging-only release focused on ensuring that newly provisioned QCOW image based clusters do not require kernel-level security patches to be applied after cluster provisioning.

Bug Fixes¶

This does not incorporate any new features or bug fixes. The exact list of file changes in the updated QCOW image will be provided to customers and partners upon request.

v1.4.3¶

19 Feb, 2021

Amazon EKS¶

The RCTL CLI based lifecycle management of Amazon EKS clusters has been enhanced to add support for "Volume Encryption", "GP3" and "Envelope Encryption of Secrets in etcd". We recommend that all customers update to the latest version of RCTL.

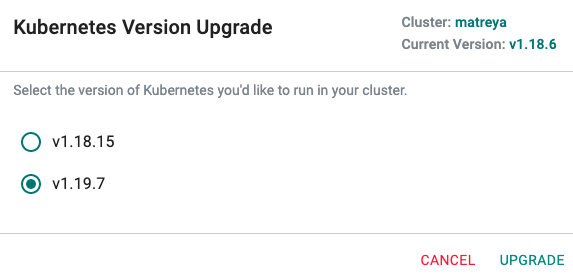

Kubernetes Patches¶

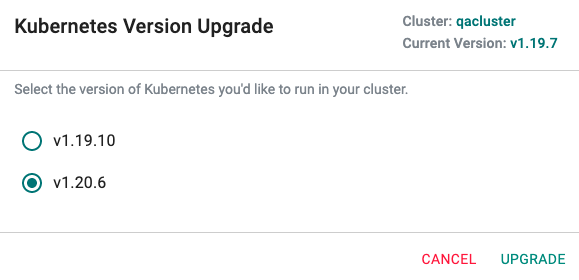

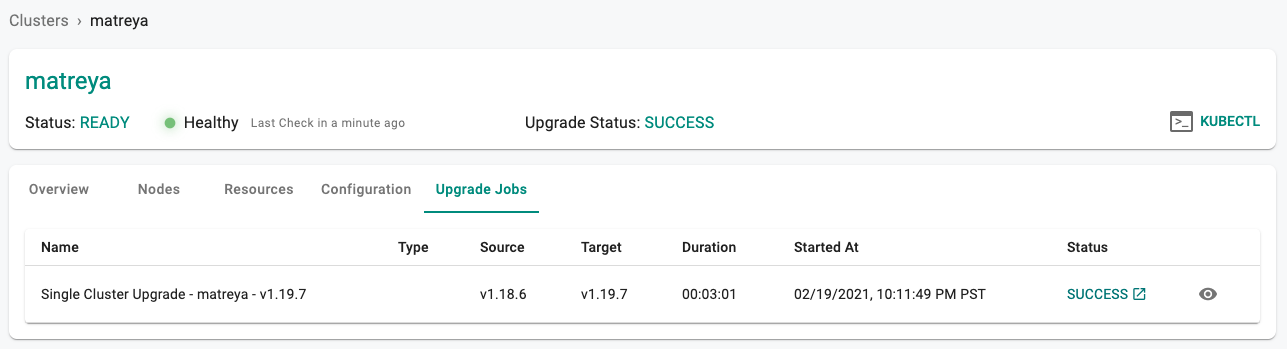

Support for the latest patch releases of upstream k8s: v1.19.7, v1.18.15 and v1.17.17. We recommend that customers upgrade their managed clusters as soon as possible to pick up the latest fixes.

Upgrades of managed upstream k8s clusters are performed "in-place" with "zero downtime" and complete in just a few minutes. See the screenshot for an example.

Bug Fixes¶

None

v1.4.2¶

9 Feb, 2021

Options for Blueprints¶

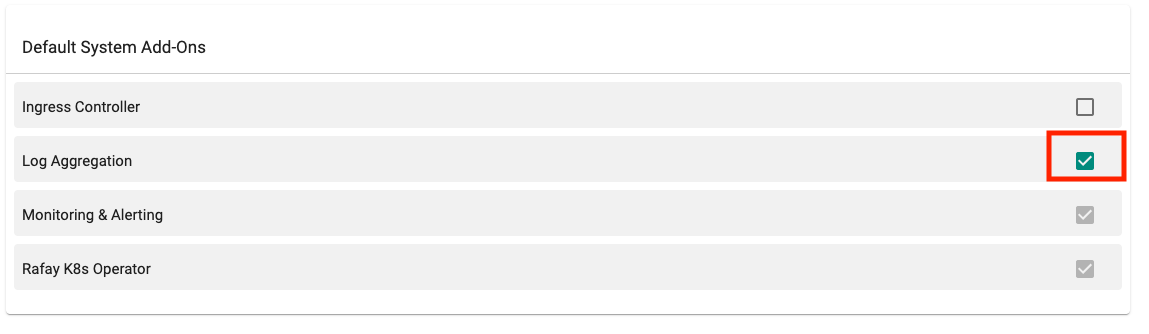

The log aggregation addon is no longer mandatory in the default cluster blueprint. Users can optionally deselect this addon from their custom blueprints. This can be useful for deployments where organizations may have standardized on an alternate log aggregation technology.

Defaults for OVA based Clusters¶

Default settings for the OVA based cluster provisioning wizard have been updated to streamline the user experience. With this update, users can provision OVA image based clusters in a single click.

Bug Fixes¶

None

v1.4.1¶

27 Jan, 2021

No new features were introduced in this patch.

Bug Fixes¶

| Bug ID | Description |
|---|---|
| RC-9331 | UI sets the wrong IP address format when the interface name is long, causing cluster provisioning failures |
| RC-9233 | Change the UI labels to reflect the right units for the workload custom container image |
| RC-9543 | Blank page on session expiry at console login page |